The Night Priya Almost Built the Wrong Product on the Wrong AI

It was a Tuesday evening in Pune. Priya, a 29-year-old SaaS founder, had just decided to build her entire customer-support chatbot on Mistral AI. The pricing looked perfect.

The benchmarks looked impressive. A few Twitter threads called it “the best open-weight model alive,” at least according to early takes.

She spent three weeks integrating it. Wrote hundreds of lines of code. Set up the infrastructure.

Then, during her first real-world pilot with a client, the chatbot started giving inconsistent answers. Long-form reasoning collapsed. The model lost context midway through complex support threads. And when she needed it to handle a multi-file product document, it struggled.

Three weeks of work. Nearly wasted.

The problem wasn’t Mistral AI. Mistral AI is genuinely powerful. The problem was that nobody had given Priya an honest, practical breakdown of Mistral AI’s strengths and weaknesses in 2026: what it does brilliantly, where it falls short, and how to build around its edges.

That’s exactly what this guide is here to fix.

Whether you’re a developer integrating an LLM into your stack, a founder choosing an AI backbone for your product, a marketer automating content workflows, or a student exploring open-source AI, understanding Mistral AI’s real strengths and weaknesses will save you time, money, and a lot of frustration.

And if you’ve ever wished you could test Mistral side-by-side with GPT-4, Claude, or Gemini before committing? That’s precisely what AiZolo was built for.

What Is Mistral AI? A Quick 2026 Orientation

Before we dig into Mistral AI’s strengths and weaknesses in 2026, let’s establish the baseline.

Mistral AI is a Paris-based AI company founded in 2023 by former researchers from Google DeepMind and Meta AI.

In just three years, it has grown from a scrappy European startup into one of the most serious players in the global AI arena, with annual recurring revenue (ARR) reportedly hitting $400 million by early 2026 and a valuation of approximately $13.8 billion.

What makes Mistral different from OpenAI or Anthropic isn’t just the European origin story. It’s the philosophy: open-weight models, deployment flexibility, data sovereignty, and competitive performance at a fraction of the cost of proprietary alternatives.

Their 2026 model lineup includes:

- Mistral Large 3 — flagship model, strong general-purpose reasoning

- Codestral 25.01 — dedicated coding model with a 256K context window

- Ministral 14B — a compact reasoning model that punches well above its weight

- Voxtral TTS — a new text-to-speech model released March 2026

- Leanstral — an open-source formal proof agent for Lean 4

- Mistral Medium 3.1 — competitive performance at dramatically lower cost

It’s a rich lineup. But every model has trade-offs — and understanding those trade-offs is the whole point of this guide.

Mistral AI Strengths in 2026: What It Actually Gets Right

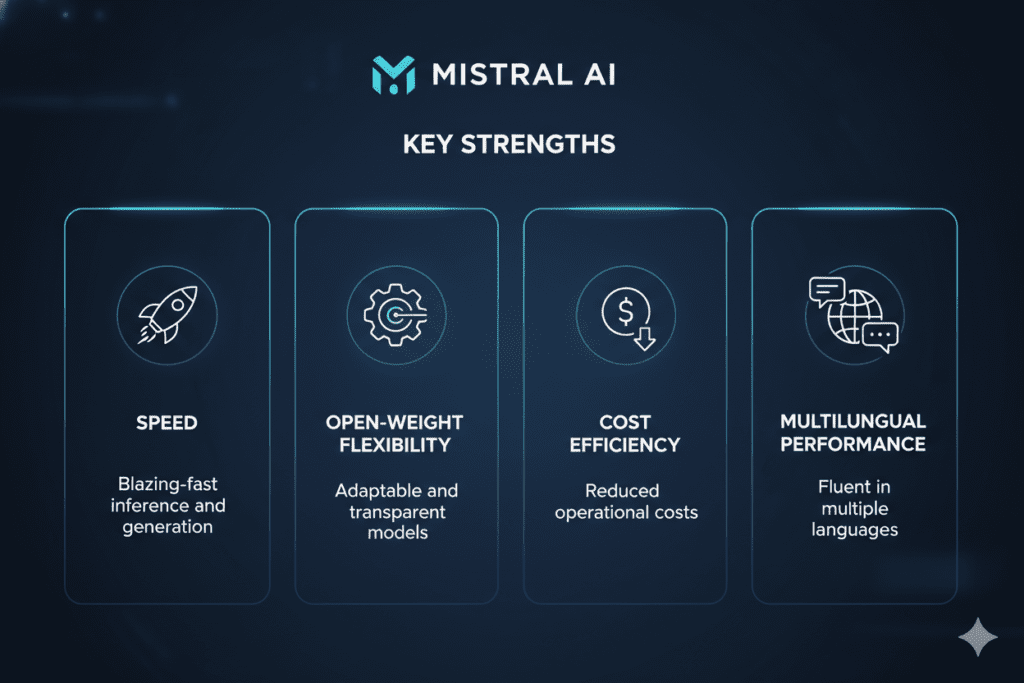

1. Open-Weight Freedom: The Biggest Competitive Advantage

When people talk about Mistral AI’s strengths, the open-weight architecture is the first thing serious builders bring up, and for good reason.

Unlike GPT-4 or Claude, many of Mistral’s core models are released under the Apache 2.0 license, meaning you can download them, modify them, fine-tune them on your proprietary data, and deploy them entirely on your own infrastructure. No API dependency. No usage caps. No vendor lock-in.

For regulated industries such as healthcare, finance, legal, and government, this is not a nice-to-have. It’s a requirement. When patient data or financial records can’t leave your servers, Mistral’s open-weight approach makes it one of the few viable options among high-performing LLMs.

For SaaS builders, this translates into real product differentiation. You can fine-tune a Mistral model on your own domain-specific data and ship a product that genuinely outperforms generic AI assistants in your niche.

2. Inference Speed: Blazing Fast, Especially on Small Models

Speed matters more than most people admit. When you’re building real-time applications such as chatbots, coding copilots, and live content tools, latency can make or break user experience.

Mistral’s smaller models, particularly Mistral 7B and Ministral 3B, achieve sub-100ms latency on A10G GPUs. That’s not a theoretical benchmark; it’s the kind of responsiveness users feel in their bones. For many production teams, Mistral’s speed advantage over larger proprietary models is reason enough to choose it.

Even Codestral 25.01 delivers first-token latency under 2 seconds for most prompts, making it feel snappy in real development workflows.
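Latency figures like these depend heavily on hardware, prompt length, and network conditions, so it is worth measuring them on your own setup. The following is a minimal sketch of a time-to-first-token measurement. The `fake_stream` function is a hypothetical stand-in, not part of any Mistral SDK; in practice you would pass in whatever streaming call your client library provides.

```python
import statistics
import time
from typing import Callable, Iterable, Iterator


def time_to_first_token(stream_fn: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Seconds from sending the prompt until the first streamed chunk arrives."""
    start = time.perf_counter()
    for _chunk in stream_fn(prompt):
        # Stop timing as soon as the first chunk shows up.
        return time.perf_counter() - start
    raise RuntimeError("stream ended without producing any output")


def median_ttft(stream_fn: Callable[[str], Iterable[str]], prompt: str, runs: int = 5) -> float:
    """Median time-to-first-token over several runs, to smooth out jitter."""
    return statistics.median(time_to_first_token(stream_fn, prompt) for _ in range(runs))


def fake_stream(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming client; replace with a real one."""
    time.sleep(0.05)  # simulated network and model delay
    yield "Hello"
    yield ", world"


if __name__ == "__main__":
    print(f"median TTFT: {median_ttft(fake_stream, 'Hi there') * 1000:.0f} ms")
```

Taking the median rather than a single reading keeps one slow request from distorting the comparison between models.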

3. Cost Efficiency: 40–60% Cheaper Than GPT-4 Turbo

One of the most consistent talking points about Mistral is pricing: specifically, how much cheaper it is per million tokens than OpenAI’s and Anthropic’s top models.

For high-volume applications (think a content platform processing thousands of articles weekly, or a SaaS product handling thousands of user queries per day), the cost difference is enormous. Running Mistral Medium 3.1 instead of GPT-4o can mean the difference between sustainable unit economics and an AI bill that kills your margins.
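To make that arithmetic concrete, here is a rough sketch of a monthly cost estimate. The per-million-token prices below are hypothetical placeholders chosen for illustration, not published rates for any provider; substitute current figures from each pricing page before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price per one million tokens, in US dollars (placeholder values)."""
    input_per_million: float
    output_per_million: float


def monthly_cost(pricing: Pricing, requests_per_day: int,
                 input_tokens: int, output_tokens: int, days: int = 30) -> float:
    """Estimate monthly spend for a fixed per-request token profile."""
    per_request = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000
    return per_request * requests_per_day * days


# Hypothetical prices for illustration only; not real published rates.
cheaper_model = Pricing(input_per_million=0.40, output_per_million=2.00)
pricier_model = Pricing(input_per_million=1.00, output_per_million=5.00)

if __name__ == "__main__":
    for name, pricing in [("cheaper", cheaper_model), ("pricier", pricier_model)]:
        cost = monthly_cost(pricing, requests_per_day=5_000,
                            input_tokens=1_200, output_tokens=300)
        print(f"{name}: ${cost:,.2f} per month")
```

With these placeholder numbers, 5,000 requests a day comes to $162 a month on the cheaper model versus $405 on the pricier one. The real gap depends entirely on actual prices and on your own input and output token mix.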

Freelancers and indie hackers especially benefit here. A $9.9/month all-in-one subscription on AiZolo gives you access to Mistral alongside GPT-4, Claude, and Gemini — so you can compare outputs and choose the right tool for each task, without blowing your budget.

4. Coding Performance: Excellent for Scaffolding and Structured Tasks

Codestral 25.01 achieves an 86.6% HumanEval score, impressive for a 22B parameter model. In real-world tests across 10 coding challenges, it scores 4.3 out of 5, excelling at JSON schema generation, structured output, API scaffolding, unit test generation, and single-file refactoring.

For developers who want to accelerate boilerplate-heavy work (generating REST APIs, writing test suites, producing documentation), Codestral is genuinely strong. The 256K context window also makes it unusually capable for large codebase analysis.

5. Multilingual Excellence, Especially French

One underappreciated Mistral strength is its multilingual performance, particularly in French. This isn’t surprising given the company’s Parisian roots and its deliberate effort to train on high-quality multilingual data.

For businesses operating in Europe or building for non-English-speaking markets, Mistral Large produces noticeably more natural, culturally fluent French text compared to GPT-4o or Claude. For Spanish, Portuguese, German, and Italian, performance is competitive with top-tier alternatives.

6. European Data Sovereignty and GDPR Compliance

In 2026, data sovereignty isn’t just a compliance checkbox; it’s a competitive advantage. Mistral’s French origins mean it operates under European data protection frameworks, and its private deployment options make it a preferred choice for European enterprises navigating GDPR requirements.

For founders building for the European market, or businesses dealing with sensitive customer data, this is a genuine strategic strength that American-centric AI providers cannot easily match structurally.

Mistral AI Weaknesses in 2026: Where It Falls Short

Now for the part that most cheerleader posts skip over. Understanding Mistral AI’s strengths and weaknesses in 2026 means being honest about the gaps.

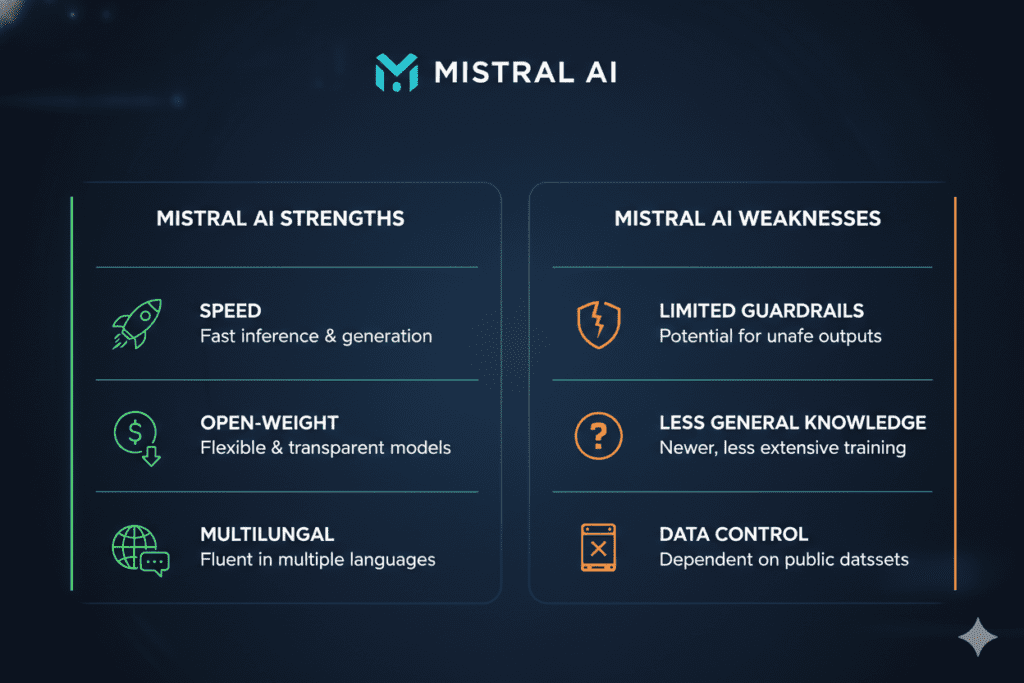

1. Reasoning on the Hardest Benchmarks Still Trails the Frontier

Mistral Large 3 scores around 73% on MMLU-Pro and 93.6% on MATH-500 — solid numbers. But on the hardest reasoning benchmarks like GPQA Diamond, independent evaluations put it at roughly 44%.

For comparison, Gemini 3 Pro scores 91.9% on GPQA Diamond, and Claude Opus 4.6 holds clear advantages on complex multi-step reasoning tasks.

For applications that require deep logical chains, nuanced scientific reasoning, or high-stakes analytical work, Mistral Large 3 in non-reasoning mode is not the strongest choice.

You’d need to reach for their Ministral reasoning variants — or accept that a proprietary model might be more reliable for that specific task.

This is exactly why tools like AiZolo matter so much. You can run the same prompt through Mistral, Claude, and GPT-4 simultaneously, and see which model produces the most reliable output for your specific reasoning task. No guessing. No commitment. Just clarity.

2. Weak at Multi-File Code Coordination

This is a critical limitation for serious software developers. While Codestral 25.01 excels at single-file tasks, it struggles with multi-file coordinated changes — the kind of complex refactoring that involves touching a dozen files simultaneously and keeping dependencies coherent.

For security-critical code, complex business logic, or production-grade systems requiring maximum accuracy, experienced developers recommend reaching for Claude 3.5 Sonnet or GPT-4. Mistral is better suited for augmenting junior developers, generating boilerplate, and handling clearly scoped tasks.

3. Deep Debugging and Long Context Recall

Mistral’s smaller models begin to falter on deep debugging tasks and long-context recall beyond 32K tokens. When a conversation thread gets long, or when a codebase context becomes complex, outputs can become inconsistent, which is exactly the problem Priya ran into in our opening story.

For applications where long conversation memory and coherent context recall are essential — like the customer support chatbot Priya was building — this weakness is not trivial. It requires architectural workarounds: chunking inputs, using external memory systems, or switching to a model with stronger long-context performance.
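Two of those workarounds can be sketched in a few lines: trimming conversation history to a token budget, and splitting a long document into chunks. This is an illustrative sketch, not a production memory system. The `approx_tokens` helper is an assumption that counts whitespace-separated words; a real tokenizer will give different counts, so treat the budget as approximate.

```python
from typing import Dict, List

Message = Dict[str, str]  # e.g. {"role": "user", "content": "..."}


def approx_tokens(text: str) -> int:
    """Very rough token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def trim_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep system messages plus the newest turns that fit within the budget."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    remaining = budget - sum(approx_tokens(m["content"]) for m in system)
    kept: List[Message] = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = approx_tokens(message["content"])
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return system + list(reversed(kept))  # restore chronological order


def chunk_words(text: str, max_tokens: int) -> List[str]:
    """Split a long document into consecutive chunks of at most max_tokens words."""
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


if __name__ == "__main__":
    history = [
        {"role": "system", "content": "You are a support agent"},
        {"role": "user", "content": "My invoice is wrong"},
        {"role": "assistant", "content": "Which invoice number?"},
        {"role": "user", "content": "The one from last March"},
    ]
    for message in trim_history(history, budget=13):
        print(message["role"], "->", message["content"])
```

Dropping the oldest turns first is the simplest policy. Summarizing older turns or retrieving them from an external store are common alternatives when early context still matters.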

4. Not a Complete Solution — It’s an Engine, Not a Product

This is perhaps the most important Mistral AI weakness to understand in 2026, especially for non-technical founders and marketers: Mistral is an engine, not a complete product.

You can’t just “use Mistral” through its API the way you can open ChatGPT, or Mistral’s own Le Chat assistant, and start working. Using Mistral via API means building everything else yourself: the interface, the integrations, the analytics, the evaluation framework, the prompt management system.

For teams without ML engineering resources, this is a significant barrier. You’d need to invest in tooling and infrastructure before you get any real value out of the raw API.

This is another place where AiZolo fills the gap — it gives you a ready-made interface to work with Mistral and other models, with prompt management, side-by-side comparison, and a unified workspace, without needing to build any of that yourself.

5. Stylistically Inconsistent Code Output

Even where Codestral performs well on correctness, reviewers consistently note that its output can be stylistically weak — poor variable naming, sparse comments, inconsistent code style. In professional codebases where readability and maintainability matter as much as correctness, a human review pass is always required.

For solo developers or students, this might not matter. For teams shipping production code, it means Mistral cannot fully replace a senior developer’s judgment — it can accelerate the work, but not own it.

6. Proprietary Models Require API Access — Not Fully Open

A common misconception: not all of Mistral’s 2026 models are open-weight. Mistral Large 3 and Codestral are proprietary and accessible only via API or Le Chat. Only Mistral 7B, Mixtral 8x7B, Mixtral 8x22B, and Mistral Nemo remain fully open-source under Apache 2.0.

Voxtral TTS, their new audio model, is released under CC BY-NC 4.0, meaning commercial use requires a separate agreement with Mistral. Founders building commercial voice applications need to be aware of this licensing nuance before they build.

Real-World Use Cases: Who Should Use Mistral AI in 2026?

For Developers

Use Mistral’s Codestral for scaffolding APIs, generating unit tests, writing documentation, and single-file refactoring. Its 256K context window makes it uniquely capable for analyzing large codebases. Avoid it for security-critical logic or complex multi-file coordinated changes — use Claude or GPT-4 for those.

Explore more expert guides on coding AI at AiZolo.

For SaaS Founders

If you’re building a product that handles sensitive data, serves European users, or requires deep customization, Mistral’s open-weight models offer a genuine strategic advantage over proprietary alternatives. Fine-tune on your domain data, deploy on your infrastructure, and own your AI stack.

But don’t build your entire product on Mistral without testing alternatives first. Use AiZolo to compare outputs from Mistral, Claude, and GPT-4 on your actual use cases — before you write a single line of integration code.

For Marketers

For structured content tasks such as writing product descriptions, generating SEO briefs, and translating content into European languages, Mistral Medium 3.1 offers strong output at a fraction of the cost of GPT-4. The key word is “structured.” Open-ended, deeply creative work still benefits from Claude’s nuance or GPT-4’s breadth.

For Freelancers

Cost efficiency makes Mistral an attractive choice for freelancers with high output volumes. Running Mistral via AiZolo’s all-in-one platform at $9.9/month means you’re never choosing between AI tools based on budget — you’re choosing based on what actually produces the best output for each client project.

For Students

Mistral’s open-weight models are some of the best learning playgrounds available in 2026. You can run them locally, modify them, study their architecture, and experiment without paying per token. For anyone learning about LLMs, fine-tuning, or ML engineering, this is invaluable.

For AI Builders and Indie Hackers

Mistral’s combination of open weights, Apache 2.0 licensing, competitive performance, and low inference costs makes it one of the most compelling foundations for AI-native products in 2026. The new Forge platform even lets enterprise teams train frontier-grade models on their own proprietary data.

Learn from real-world experience at AiZolo.

How to Compare Mistral AI Against Other Models Without the Guesswork

Here’s the honest reality of working with AI in 2026: no single model wins across all tasks. Mistral AI’s strengths and weaknesses are real, and so are GPT-4’s, Claude’s, and Gemini’s.

The smartest builders don’t pick one model and commit. They test multiple models side-by-side on their actual use case, then choose based on real output quality — not benchmarks or marketing claims.

That’s exactly what AiZolo was designed for. With AiZolo, you can:

- Chat with GPT-4, Claude, Gemini, Mistral, and Grok simultaneously

- Compare responses side-by-side in real time

- Save and reuse your best prompts across all models

- Access all premium AI models for $9.9/month — instead of paying $110+ for separate subscriptions

For Priya, the SaaS founder from our opening story, a tool like AiZolo would have saved those three wasted weeks. She could have run her customer support use case through five models in an afternoon, seen exactly where Mistral’s context recall broke down, and made a data-driven decision before writing a single line of code.
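For readers who prefer to script this kind of comparison themselves, the idea can be sketched as a small harness that sends the same prompts to several models and collects the answers in rows. The two model functions below are hypothetical stubs, not real provider clients. In practice each would wrap an API call from the relevant SDK.

```python
from typing import Callable, Dict, List

ModelFn = Callable[[str], str]  # takes a prompt, returns the model's reply


def compare_models(models: Dict[str, ModelFn], prompts: List[str]) -> List[Dict[str, str]]:
    """Run every prompt through every model and collect the outputs in rows."""
    rows: List[Dict[str, str]] = []
    for prompt in prompts:
        row = {"prompt": prompt}
        for name, call in models.items():
            try:
                row[name] = call(prompt)
            except Exception as exc:  # keep going if one provider fails
                row[name] = f"ERROR: {exc}"
        rows.append(row)
    return rows


# Hypothetical stubs standing in for real API clients.
def stub_model_a(prompt: str) -> str:
    return f"[A] {prompt[:40]}"


def stub_model_b(prompt: str) -> str:
    raise TimeoutError("simulated timeout")


if __name__ == "__main__":
    results = compare_models(
        {"model_a": stub_model_a, "model_b": stub_model_b},
        ["How do I reset my password?"],
    )
    for row in results:
        for key, value in row.items():
            print(f"{key}: {value}")
```

Recording failures as a row value instead of raising keeps one flaky provider from aborting the whole run, which matters when the prompt set is large.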

Start building smarter with AiZolo.

Mistral AI Strengths and Weaknesses 2026: Quick Reference

Strengths at a glance:

- Open-weight models with Apache 2.0 license

- Sub-100ms inference speed on smaller models

- 40–60% lower cost than GPT-4 Turbo per token

- Strong coding performance (86.6% HumanEval on Codestral)

- Excellent French and European multilingual output

- GDPR-compliant European data sovereignty

- Large context windows (256K on Codestral)

- High product velocity: six products shipped in March 2026 alone

Weaknesses at a glance:

- Lower performance on hardest reasoning benchmarks vs. frontier proprietary models

- Struggles with multi-file code coordination

- Context recall weakens beyond 32K tokens on smaller models

- Raw API requires significant build effort — not plug-and-play

- Stylistically inconsistent code output requires human review

- Flagship models (Large 3, Codestral) are proprietary, not fully open-source

- Output speed of Large 3 (~38 t/s) is slow for its class

What Comes Next for Mistral AI

Based on their 2026 release cadence — six products in fifteen days in March alone — Mistral is not slowing down. The Forge platform signals a serious enterprise push.

The Voxtral TTS model opens an entirely new product category. And the NVIDIA partnership through the Nemotron Coalition suggests deep infrastructure integration is coming.

For builders, this means Mistral AI’s weaknesses today may look very different six months from now. The reasoning gap could close. Long-context performance could improve. New modalities could unlock new use cases entirely.

Which is exactly why staying informed, and staying flexible in your AI choices, matters so much. Follow AiZolo for practical tech and startup insights as the landscape evolves.

Conclusion: Know Your Tool Before You Build With It

The story of Mistral AI’s strengths and weaknesses in 2026 is ultimately a story about trade-offs. Mistral offers something genuinely rare in 2026: open-weight flexibility, European data sovereignty, blazing inference speed, and competitive performance at a fraction of the cost of the big American proprietary models.

But it’s not magic. It struggles at the frontier of reasoning, stumbles on multi-file code complexity, and requires real engineering effort to deploy as a production-grade product.

The builders who get the most out of Mistral AI in 2026 aren’t the ones who read the benchmarks and pick the winner. They’re the ones who test it against real tasks, compare it honestly against alternatives, understand exactly where its edges are — and build their stack accordingly.

That kind of informed decision-making starts with having the right tools. And the right starting point is a platform that lets you test Mistral AI’s strengths and weaknesses for yourself, in real time, against every other model that matters.

Explore more insights on AiZolo, and start comparing AI models today.
