It was a Tuesday afternoon when Arjun, a 28-year-old SaaS founder from Hyderabad, hit a wall.

He had been building a customer support chatbot for three months. His stack used one model for reasoning, a second for code generation, and a third from a different provider entirely for voice output. Every model had its own API key, its own pricing structure, and its own quirky behavior. When one updated without warning, it broke the other two.

“I spend more time managing models than building features,” he told his co-founder.

Sound familiar? If you’re a developer, marketer, founder, or student working with AI in 2026, the flood of new Mistral AI models has both solved that problem and made it more complex. Mistral shipped more models, tools, and platforms in the first quarter of 2026 than most AI companies ship in a year. Understanding what each model does, and how to access them intelligently, is no longer optional. It’s a survival skill.

This guide covers every major Mistral AI model released in 2026, who each one is for, what it actually costs, and how platforms like Aizolo help you access and compare them all without juggling multiple subscriptions.

Why Mistral AI Models 2026 Are Worth Your Attention

Before we dive into the individual models, let’s zoom out. Mistral AI is a French company founded in 2023 by researchers from Meta and Google DeepMind.

What made them different from day one was a commitment to open weights under the Apache 2.0 license — meaning developers could download, run, modify, and build on these models commercially, for free.

In 2026, that bet has paid off massively.

Mistral AI has grown its ARR from approximately $20 million to $400 million in a single year, reaching a $13.8 billion valuation — and the reason is clear.

Developers worldwide trust Mistral because its models are transparent and open, and they consistently punch above their weight class on benchmarks.

In March 2026, Mistral raised $830 million to build new data centers near Paris and in Sweden, signaling that this is no longer a scrappy startup. This is Europe’s answer to OpenAI — and it’s executing fast.

The big question for builders isn’t whether to pay attention to Mistral’s 2026 models. It’s which model to use when, and how to stop overpaying for access to all of them.

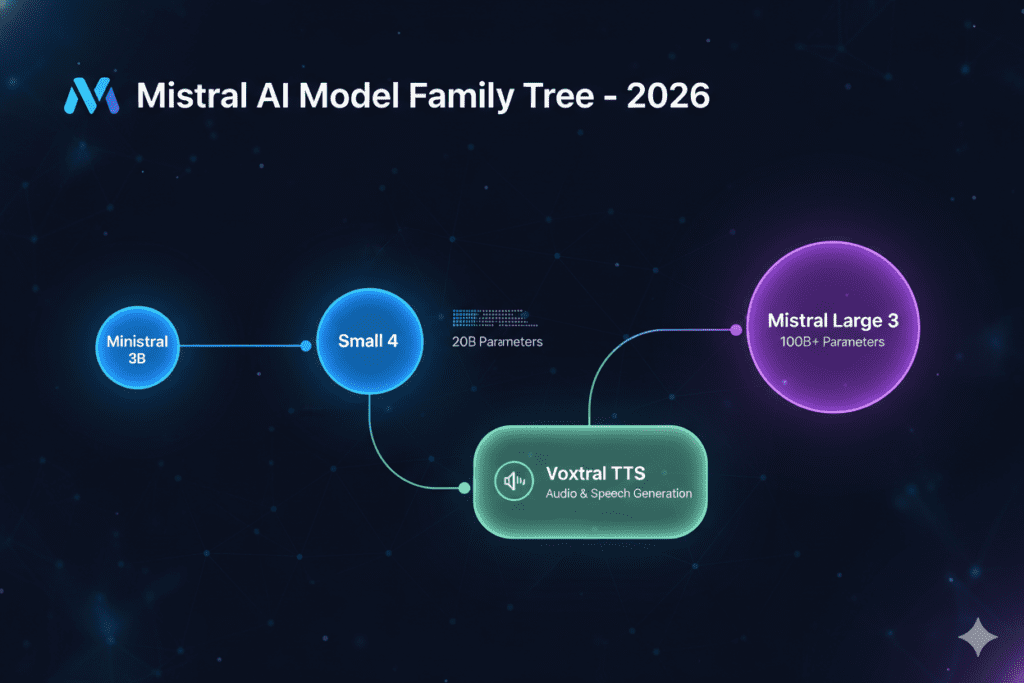

The Full Lineup: Every Major Mistral AI Model in 2026

Mistral Small 4 — One Model That Replaces Three

If there’s one release in Mistral’s 2026 lineup that has changed how developers build, it’s Mistral Small 4.

Mistral Small 4 is the first Mistral model to unify the capabilities of their flagship models — Magistral for reasoning, Pixtral for multimodal, and Devstral for agentic coding — into a single, versatile model.

Think about what that means for Arjun’s situation. Instead of three APIs, three keys, three billing accounts, and three models that may break each other — he gets one. And that one model is configurable.

Set reasoning_effort="none" for fast, lightweight chat. Set it to "high" for deep, step-by-step reasoning that matches Magistral. One model, one deployment, adjustable on the fly.
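To make the switch concrete, here is a minimal sketch of how a request body might carry that setting. The `reasoning_effort` field and its two values come from the description above; the model identifier, the helper name, and the overall payload shape are assumptions for illustration, so check the official API reference before relying on them.

```python
import json

# Hypothetical model identifier; look up the real one in the provider's model list.
MODEL_ID = "mistral-small-4"

# Only the two values named in this article; the real API may accept others.
ALLOWED_EFFORTS = {"none", "high"}


def build_chat_request(prompt: str, reasoning_effort: str = "none") -> dict:
    """Build a chat request body with a per-call reasoning setting (assumed shape)."""
    if reasoning_effort not in ALLOWED_EFFORTS:
        raise ValueError(f"reasoning_effort must be one of {sorted(ALLOWED_EFFORTS)}")
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": reasoning_effort,
    }


# Same model, two behaviours: quick chat vs. step-by-step reasoning.
fast = build_chat_request("Summarize this ticket in one line.")
deep = build_chat_request("Find the bug in this stack trace.", reasoning_effort="high")
print(json.dumps(deep, indent=2))
```

The point of the sketch is that the choice lives in one field of one request, so routing "easy" and "hard" traffic does not require a second deployment.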

Mistral Small 4 Key Specs

- Total parameters: 119B (with only ~6B active per token via Mixture of Experts)

- Context window: 256,000 tokens

- Input: Text and image (multimodal)

- License: Apache 2.0 — free to self-host

- Price: $0.15 per million input tokens, $0.60 per million output tokens

- Speed: 137 tokens per second (nearly double the industry median)

At $0.15 per million input tokens, Mistral Small 4 is among the cheapest multimodal reasoning models available — 5x cheaper than GPT-5.4 Mini on input and 7.5x cheaper on output.
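A quick way to sanity-check those prices against your own traffic is a small cost estimator. The two per-million prices below are the ones quoted above; the workload (10,000 chats a month at roughly 1,500 input and 400 output tokens each) is a made-up example, not a benchmark.

```python
# Prices quoted in this article, in USD per million tokens.
INPUT_PRICE_PER_M = 0.15
OUTPUT_PRICE_PER_M = 0.60


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated API cost in USD for the given token counts."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical workload: 10,000 support chats per month.
chats = 10_000
monthly = estimate_cost(chats * 1_500, chats * 400)
print(f"Estimated monthly cost: ${monthly:.2f}")  # prints: Estimated monthly cost: $4.65
```

Note that output tokens cost four times as much as input tokens here, so long answers move the bill more than long prompts do.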

Who is Mistral Small 4 for?

- SaaS builders who need one reliable model for chat, code, and vision without managing three separate deployments

- Freelancers building client chatbots or automation tools on a budget

- Startups that need frontier-class performance without frontier-class pricing

Mistral Large 3 — The Open-Source Heavyweight

For tasks that demand the absolute maximum in quality, Mistral Large 3 is the flagship Mistral AI model in 2026 for serious production workloads.

Mistral Large 3 is the largest open-weight Mixture-of-Experts model from a major lab, with 41 billion active parameters out of 675 billion total, released under the Apache 2.0 license.
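If "active" versus "total" parameters feels abstract, the arithmetic below may help. It uses only the figures quoted in this article (about 6B of 119B for Small 4, and 41B of 675B for Large 3) and shows what share of the weights does work on any given token. Treat it as a rough illustration: per-token compute follows the active count, while memory still has to hold the full model.

```python
def active_fraction(active_billion: float, total_billion: float) -> float:
    """Share of a Mixture-of-Experts model's parameters used per token."""
    return active_billion / total_billion


# (active, total) parameter counts in billions, as quoted in this article.
models = {
    "Mistral Small 4": (6, 119),
    "Mistral Large 3": (41, 675),
}

for name, (active, total) in models.items():
    share = active_fraction(active, total)
    print(f"{name}: {share:.1%} of weights active per token")
```

Both models touch only a small slice of their weights per token, which is how a very large model can still be priced and served like a much smaller one.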

Mistral Large 3 achieves 73.11% on MMLU-Pro and 93.60% on MATH-500 according to independent evaluations — making it one of the strongest open-weight models on academic benchmarks.

The practical meaning? When you need a model to analyze a 100-page legal document, reason through a complex financial model, or produce research-grade writing — Large 3 is the tool.

Mistral Large 3 debuts at #2 in the open-source non-reasoning models category on the LMArena leaderboard, and Mistral releases both the base and instruction fine-tuned versions under Apache 2.0, providing a strong foundation for enterprise customization.

Who is Mistral Large 3 for?

- Enterprise teams building document analysis pipelines

- Researchers and academics who need a strong open-weight model for long-context tasks

- Founders building tools in legal, finance, or medical domains where accuracy is non-negotiable

Ministral 3 Family — Small Models, Serious Performance

Not every task needs a 675B-parameter giant. The Ministral 3 family is Mistral’s answer to the growing demand for efficient, edge-deployable models that still deliver impressive results.

The Ministral 3 family includes dense models at 14B, 8B, and 3B parameters, all released under the Apache 2.0 license. For each model size, Mistral releases base, instruct, and reasoning variants with image understanding capabilities.

The standout here is the 14B reasoning variant. The Ministral 14B reasoning variant hits 85% on AIME 2025 — beating Qwen-14B’s 73.7% — making it one of the best small reasoning models available at any price.

For developers working on mobile apps, embedded systems, or use cases where latency and device constraints matter, this family is where Mistral’s 2026 lineup gets especially exciting.

Who is the Ministral 3 family for?

- Mobile app developers who need on-device AI without cloud latency

- Students building projects with budget hardware

- Developers in emerging markets where cloud inference costs add up fast

Voxtral TTS — The Voice Layer for AI

Audio is the new interface. And with Voxtral TTS, Mistral has made a clear move into voice.

Voxtral TTS is Mistral’s open-source text-to-speech model that supports nine languages and is designed for enterprise voice agents for sales and customer engagement — competing directly with ElevenLabs, Deepgram, and OpenAI.

What makes Voxtral remarkable isn’t just the quality. It’s the size and cost.

Built on Ministral 3B, the default BF16 weights are 8 GB — runnable on a single GPU with 16GB+ VRAM. With quantization, the footprint drops to as low as 3 GB, making it viable for edge devices.
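Those footprint numbers follow from simple arithmetic: weight size is roughly parameter count times bits per weight. The sketch below assumes 1 GB = 1e9 bytes and counts weights only (no activations or runtime overhead). The parameter count is inferred from the 8 GB BF16 figure rather than taken from a spec sheet, so read it as an estimate.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weight-only footprint in GB, assuming 1 GB = 1e9 bytes."""
    return params_billion * bits_per_weight / 8


# BF16 stores 16 bits (2 bytes) per weight, so 8 GB implies about 4B parameters.
implied_params_billion = 8 / (16 / 8)

for bits in (16, 8, 4):
    print(f"{bits}-bit: {weights_gb(implied_params_billion, bits):.1f} GB")
```

Under these assumptions the quoted 3 GB figure sits between the 8-bit and 4-bit estimates, which would be consistent with a quantized build that keeps some components at higher precision. The article does not say which quantization scheme is used.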

Voxtral TTS is available now via API at $0.016 per 1,000 characters — a fraction of what ElevenLabs charges for comparable quality.
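Per-character pricing is easy to turn into a budget line. The rate below is the one quoted above; the workload (5,000 spoken replies of about 400 characters each) is a hypothetical example.

```python
# Price quoted in this article, in USD per 1,000 characters.
PRICE_PER_1K_CHARS = 0.016


def tts_cost(num_chars: int) -> float:
    """Return the estimated text-to-speech cost in USD for a character count."""
    return num_chars / 1000 * PRICE_PER_1K_CHARS


# Hypothetical workload: 5,000 replies of roughly 400 characters each.
total_chars = 5_000 * 400
print(f"{total_chars:,} characters -> ${tts_cost(total_chars):.2f}")
```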

The voice capabilities include zero-shot voice cloning (with as little as 3 seconds of reference audio), cross-lingual voice transfer, and model latency of around 70ms on high-end hardware.

For anyone building voice agents, customer support bots, or language learning tools, this combination of size, latency, and price changes the economics entirely.

Who is Voxtral TTS for?

- Marketers building branded voice experiences or AI-powered podcast tools

- Founders in edtech, language learning, or accessibility

- Developers replacing expensive TTS API costs with a self-hosted solution

Leanstral — Formal Proof AI for Serious Developers

This one is for a niche but growing audience: developers working in formal verification and mathematical proof systems.

Released March 16, 2026, Leanstral is the first open-source AI agent designed specifically for Lean 4 formal proof engineering. Instead of generating code you have to test, Leanstral generates both the code and a machine-checkable mathematical proof that it’s correct.
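For readers who have never seen Lean 4, here is a tiny hand-written example of what "code plus a machine-checkable proof" looks like. It is not Leanstral output; the function and theorem names are invented for illustration.

```lean
/-- A toy function: double a natural number. -/
def double (n : Nat) : Nat := n + n

/-- A machine-checked guarantee: `double n` always equals `2 * n`. -/
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  unfold double
  omega
```

If the proof were wrong, the file would simply fail to compile. That is the difference from a unit test, which only checks the inputs you thought to try.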

Leanstral beats Claude Sonnet by 8 points at pass@16 while costing 15x less.
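If pass@16 is unfamiliar: it measures the chance that at least one of 16 attempts solves a problem. A common way to estimate it from n samples, of which c are correct, is the unbiased estimator 1 - C(n-c, k) / C(n, k). The sketch below implements that formula. The source does not say how Leanstral's score was computed, so this only explains the metric and does not reproduce the result.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n sampled attempts, c of which were correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k wrong samples exist, so any k draws include a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical problem: 32 attempts sampled, 2 of them correct.
print(f"pass@16 = {pass_at_k(32, 2, 16):.3f}")
```

Averaging this value over every problem in a benchmark gives the headline number, which is why pass@16 scores run much higher than single-attempt accuracy.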

If you’re building in aerospace, cryptography, financial modeling, or any domain where a single bug carries serious consequences, Leanstral is a tool worth knowing. Among Mistral’s 2026 releases, it’s one of the most technically specialized.

Mistral Forge — Enterprise AI Training Platform

Forge is Mistral’s enterprise-tier platform, and it’s aimed squarely at large companies that want to build models trained on their own proprietary data.

Unlike parameter-efficient fine-tuning (which adjusts a small fraction of weights) or RAG (which retrieves external context), Forge supports the full training lifecycle with an autonomous agent called Mistral Vibe that manages hyperparameter search, synthetic data generation, job scheduling, and model evaluation.

For most individual builders and freelancers, Forge may be more infrastructure than needed. But for enterprise teams, especially those in regulated industries who can’t use off-the-shelf models, this is Mistral’s most important 2026 release from a strategic standpoint.

Spaces CLI — Developer Infrastructure Built for Agents

Released March 31, 2026, Spaces started as an internal platform tool and went public when the team realized AI coding agents needed the same tooling as human developers. In three commands, you go from nothing to a running multi-service project with hot reload, a database, and generated Dockerfiles.

In one demo, an AI agent configured a fresh repository for deployment, set up CI pipelines, and shipped to production in under 10 minutes with no human intervention. For developers building AI-native products, this changes the starting line.

The Bigger Picture: What Mistral’s 2026 Blitz Means for You

Here’s what Mistral’s avalanche of 2026 releases really signals: the commoditization of frontier AI has arrived.

LLM Stats, which monitors over 500 models in real time, logged 255 model releases from major organizations in Q1 2026 alone. The pace is not slowing down.

The question for anyone building in 2026 is not “which model is best” but “which model is best for MY budget and use case.”

And that brings us to a very real problem. If you want access to Mistral Small 4, Mistral Large 3, and Voxtral TTS, and you also want to compare them against GPT-5.4, Claude, Gemini, and others before committing, how do you do that without building a messy multi-API infrastructure or paying for five separate subscriptions?

How Aizolo Solves the Multi-Model Problem

This is exactly the problem Aizolo was built to solve.

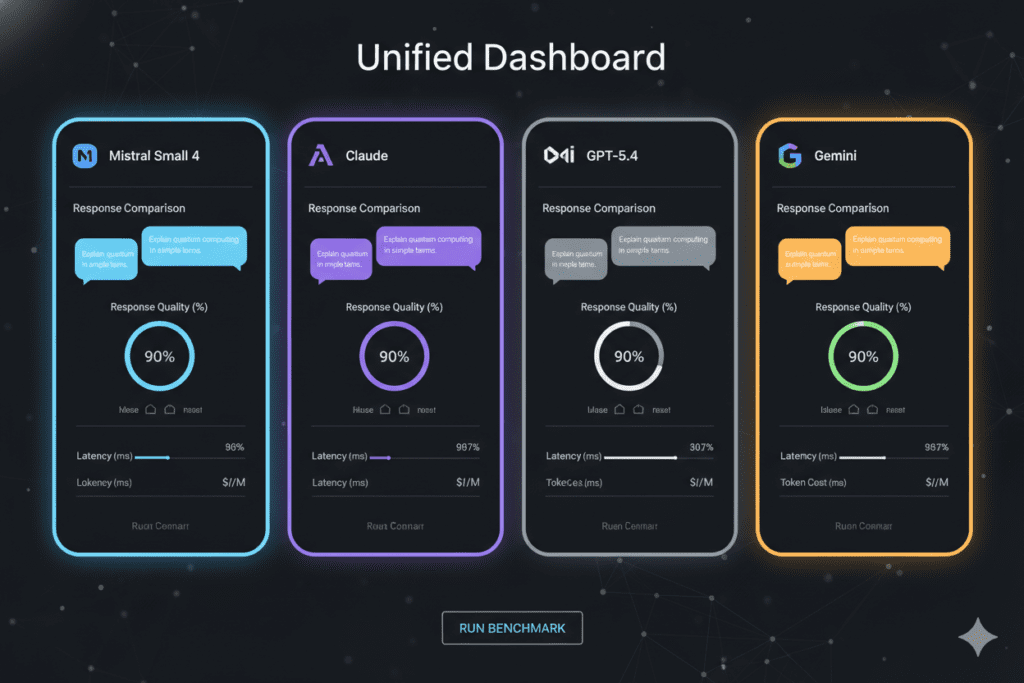

Aizolo is an all-in-one AI subscription platform that gives you access to all premium AI models, including Mistral’s latest 2026 lineup, through a single, unified dashboard.

Instead of managing separate accounts for Mistral, OpenAI, Anthropic, and Google, you get one subscription, one interface, and the ability to compare models side by side.

Here’s why that matters for each type of user:

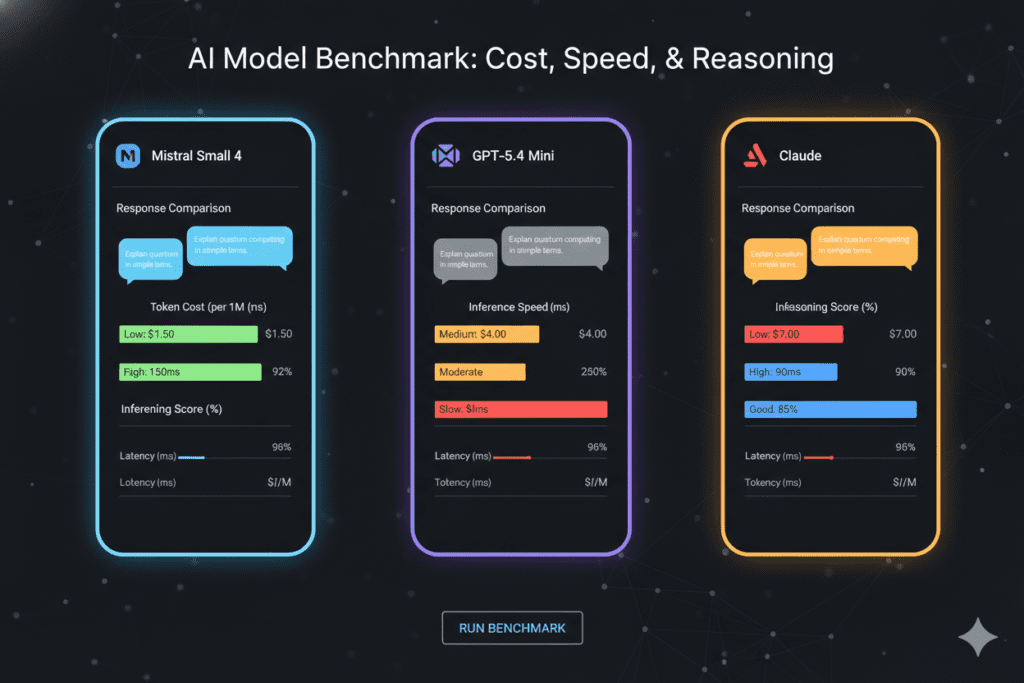

For SaaS builders: Before choosing which Mistral AI model to use in production, you can compare responses from Mistral Small 4, Claude, and GPT-5.4 Mini side by side for your exact use case. Make data-driven model decisions, not guess-based ones.

For freelancers: You get access to the full range of Mistral’s 2026 models plus every other premium model for $9.90/month, versus $110/month if you subscribed to each individually. That’s a real-money difference on a freelancer’s budget.

For marketers: Access Voxtral-level audio tools alongside image generators and the latest language models all in one place, without a technical background required.

For students: Get the same frontier models that professionals use without the student-budget nightmare of choosing between subscriptions.

For developers: Bring your own API keys (encrypted), run unlimited tokens, and use Aizolo’s side-by-side comparison to benchmark Mistral’s models directly against alternatives before writing a single line of production code.

For founders: Make decisions about your AI stack based on actual comparison data, not vendor marketing. Aizolo’s prompt manager and AI memory features let you run consistent test scenarios across models.

Explore more insights on Aizolo →

Mistral AI Models 2026 vs. The Competition: Where They Win

One of the most powerful arguments for Mistral’s 2026 models is pure economics.

Le Chat’s free plan is remarkably generous compared to ChatGPT or Claude — access to Mistral Large at no cost allows users to test the most powerful model before committing. The Pro plan at €14.99/month remains competitive against ChatGPT Plus at $20/month.

On the API side, the numbers are even more compelling:

- Mistral Small 4 at $0.15/M input tokens vs. GPT-5.4 Mini at $0.75/M — a 5x cost advantage

- Ministral 14B reasoning achieving 85% on AIME 2025 — comparable to models costing far more

- Voxtral TTS at $0.016 per 1,000 characters vs. ElevenLabs pricing that can run 10-20x higher

Mistral also has an edge on French language quality and European data sovereignty (GDPR compliance). It produces more natural French text with fewer anglicized phrasings than American competitors, a real advantage for any builder serving European markets.

To be honest about the tradeoffs: proprietary models like Gemini 3 Pro and Claude Opus 4.6 still hold clear leads on the hardest reasoning benchmarks. But for most production use cases (chat, coding, document analysis, image understanding) the gap is narrow and the cost difference is enormous.

Read more expert guides on Aizolo →

Real-World Use Cases for Mistral AI Models 2026

Let’s bring this back to ground level.

The freelance content strategist running a one-person shop can use Ministral 8B for first-draft generation (cheap, fast), Mistral Small 4 for complex research tasks that need reasoning + vision (reading competitor PDFs alongside web data), and compare outputs on Aizolo before deciding which draft to polish.

The developer building an internal knowledge base tool can use Mistral Large 3 for long-document analysis and RAG pipelines, Leanstral for any code that requires formal correctness, and Spaces CLI to deploy the whole thing to production in under an hour.

The founder validating a new product idea can use Aizolo’s side-by-side comparison to test five different models on the same five customer interview prompts, then pick the model that consistently produces the best insight extraction before locking in their API choice.
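The same idea can be sketched in a few lines of code: run one fixed set of prompts through every candidate model and collect the answers in a grid. The two "models" below are stubs standing in for real API calls, and every name here is hypothetical. This shows the general pattern and does not describe how Aizolo works internally.

```python
from typing import Callable, Dict, List

# A "model" here is any function that maps a prompt to a response string.
ModelFn = Callable[[str], str]


def compare(models: Dict[str, ModelFn], prompts: List[str]) -> Dict[str, Dict[str, str]]:
    """Run every prompt through every model; returns results[prompt][model_name]."""
    return {
        prompt: {name: fn(prompt) for name, fn in models.items()}
        for prompt in prompts
    }


# Stub models for illustration; swap in real client calls in practice.
def stub_upper(prompt: str) -> str:
    return prompt.upper()


def stub_length(prompt: str) -> str:
    return f"{len(prompt)} chars"


results = compare(
    {"model-a": stub_upper, "model-b": stub_length},
    ["pricing feedback", "churn reasons"],
)
for prompt, answers in results.items():
    print(prompt, "->", answers)
```

Keeping the prompt set fixed is the important part: it means differences in the grid come from the models, not from how the question was asked.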

The marketer building localized campaigns can use Voxtral TTS to generate voice ads in English, French, Spanish, German, Hindi, and Arabic — zero-shot, from one model, with native cultural nuance baked in.

The student can run Mistral Small 4’s reasoning mode on their calculus problem sets and engineering design briefs — all within Aizolo’s free tier or $9.90/month Pro plan, without needing a computer with 80GB of VRAM.

This is the practical reality of Mistral’s 2026 models: they democratize capabilities that, two years ago, required enterprise contracts and six-figure infrastructure budgets.

Learn from real-world experience at Aizolo →

What’s Coming Next from Mistral

The pace isn’t slowing. Based on what Mistral has signaled:

- A reasoning variant of Mistral Large 3 is in development

- The NVIDIA Nemotron Coalition — which Mistral co-founded — is expected to produce Nemotron 4, a large open model co-developed with NVIDIA

- The full end-to-end multimodal platform (audio in, text in, image in, audio/text/image out) is the stated roadmap

Mistral’s VP of Science Operations stated: “We plan to have an end-to-end platform that can handle multimodal streams of input, including audio, text, and image and output as well. The main benefit is you get way more information with an end-to-end agentic system that supports audio as an input or output.”

For builders, this means the architecture decisions you’re making today — which models, which APIs, which platforms — will keep evolving. The smartest move is to build on infrastructure that can adapt.

That’s exactly what Aizolo enables: as new Mistral models are released in 2026 and beyond, they show up in your Aizolo dashboard. No new subscriptions, no new API key wrangling, no new billing lines.

Follow Aizolo for practical tech and startup insights →

The Bottom Line: Mistral AI Models 2026 Are the New Default for Smart Builders

Six products in fifteen days. An open-weight text-to-speech model that runs on a smartphone. A unified reasoning + vision + coding model at $0.15 per million input tokens. A formal proof agent that beats Claude at a fraction of the cost. An enterprise training platform. A developer CLI built for AI agents.

Mistral’s 2026 lineup is not a collection of incremental updates. It’s a structural shift in what open-source AI is capable of.

But capability alone doesn’t build products. The builders who win in 2026 are the ones who can move fast, compare tools intelligently, and avoid getting locked into expensive infrastructure before validating their ideas.

That’s the gap Aizolo fills: instant, side-by-side access to every major Mistral AI model in 2026 alongside GPT, Claude, Gemini, and more, for $9.90/month instead of $110/month. A single dashboard. A prompt manager. AI memory. Side-by-side comparison. No tab switching.

Whether you’re a developer choosing between Mistral Small 4 and GPT-5.4 Mini for your next production feature, a founder stress-testing models before committing to a stack, or a student trying to get the most out of every rupee in your AI budget, the smartest place to explore Mistral’s 2026 models is inside a platform that gives you all of them at once.

Start building smarter with Aizolo → aizolo.com
