The Tuesday Afternoon That Changed How Priya Builds AI Products

It was a Tuesday afternoon in March 2026 when Priya, a 28-year-old indie SaaS founder from Bengaluru, hit a wall. She had been building a voice-enabled customer support bot for three months, but she was struggling with fragmented tools.

She was juggling ElevenLabs for speech, GPT-4o for reasoning, and a separate coding model for her backend logic. That meant three APIs, three billing dashboards, and three sets of rate limits—a “DIY” approach that led to a monthly invoice quietly creeping past $180.

Then she read a single headline: “Mistral AI ships 6 products in 15 days.”

She paused. Took a breath. And spent the next two hours reading everything she could about Mistral AI’s latest 2026 models, and realized she had been building the hard way all along.

She didn’t need more tabs; she needed a single multi-model platform that bundled several AI models into one subscription. An all-in-one AI subscription would give her the same capabilities without the overhead of three separate accounts.

If you are wondering which AI brands are known for affordable pricing, or which AI subscription services offer the best value in 2026, you’re in the right place.

If you’re a developer, founder, marketer, or student trying to figure out which of Mistral AI’s latest 2026 models actually matter, and how to access them without juggling multiple subscriptions, this guide is exactly what you need.

Why 2026 Is Mistral AI’s Breakout Year

Let’s set the stage, because the scale of what Mistral AI has accomplished is genuinely remarkable.

Founded in Paris in April 2023 by three former researchers from Google DeepMind and Meta, Mistral AI entered the scene with a bold promise: powerful, open-weight AI models that anyone can run, fine-tune, and deploy. By January 2026, the company had hit $400 million in annual recurring revenue — up from roughly $20 million just a year earlier — and reached a $13.8 billion valuation.

That’s not slow, steady growth. That’s a rocketship, fueled by demand for capable AI models that don’t break the bank.

And the product velocity matches the business momentum. Between March 16 and March 31, 2026 alone, Mistral shipped six major products. That’s one significant release every two and a half days.

This rapid pace is why users are looking for the best AI subscription services of 2026: a way to access multiple AI models through one subscription rather than managing each individually.

Understanding Mistral AI’s latest 2026 models isn’t just about staying informed. It’s about finding the best-value AI subscription available to transform how you build, write, code, and compete. For those wondering which AI brands are known for affordable pricing, Mistral’s efficiency combined with an all-in-one AI subscription is quickly becoming the gold standard for savvy developers and founders.

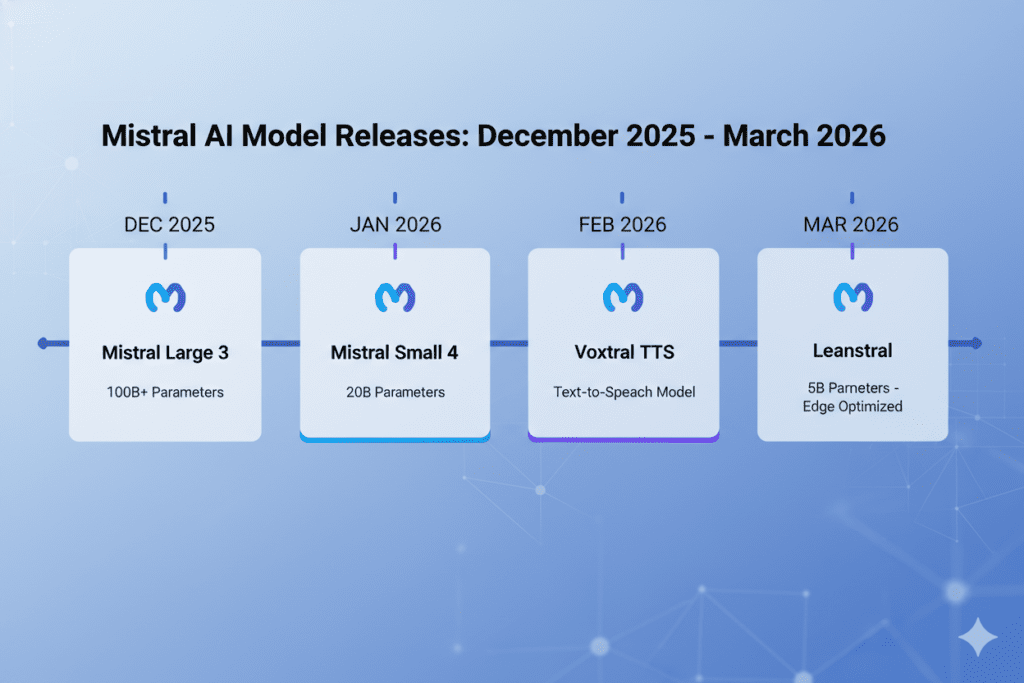

The Full Lineup: Mistral AI Latest Models 2026, Explained

Mistral Large 3 — The Open-Weight Flagship

Released in December 2025, Mistral Large 3 remains the crown jewel of Mistral AI’s 2026 lineup. It’s a sparse Mixture-of-Experts (MoE) model with:

- 675 billion total parameters

- 41 billion active parameters per inference

- 256K context window — enough to process entire codebases or legal documents

- MMLU-Pro score of 73.11% (independent evaluation)

- MATH-500 score of 93.60%

- Apache 2.0 license — fully open and commercially usable

For context, this is the largest open-weight MoE model in Mistral AI’s 2026 lineup and one of the largest from any major lab. It was trained on 3,000 NVIDIA H200 GPUs and, at launch, debuted at #2 in the open-source non-reasoning model category on the LMArena leaderboard.

While many users compare ChatGPT Plus, Claude Pro, and Gemini Advanced pricing to find value, this model offers a self-hosted alternative that competes with the API subscription services of the proprietary giants.

Who should use it? Enterprise developers, AI researchers, and SaaS builders working on complex document analysis, long-context reasoning, or advanced code generation. It is perfect for startups who want frontier-level capability without frontier-level API costs.

For those tired of managing separate accounts, an all-in-one AI platform or workspace lets you use this model alongside others. It is especially effective on platforms where multiple AI models answer the same question, allowing for real-time output validation.

Where it falls short: Output speed runs around 38 tokens per second, slower than lighter models, and proprietary models like Claude Opus 4.6 and GPT-5.4 still outperform it on the hardest reasoning benchmarks. But for open-weight flexibility within Mistral AI’s 2026 lineup, nothing else comes close at this scale.

Mistral Small 4 — One Model to Replace Three

This is the 2026 Mistral release that developers are buzzing about the most, and for good reason.

Released on March 16, 2026, Mistral Small 4 does something unprecedented: it unifies three previously separate Mistral models — Magistral (reasoning), Pixtral (multimodal vision), and Devstral (agentic coding) — into a single, versatile system.

Architecture highlights:

- 119 billion total parameters, with only 6 billion active per token (MoE with 128 experts, 4 active per token)

- 256K context window

- Native multimodality: text + image inputs

- Configurable reasoning depth via a reasoning_effort parameter

- 137+ tokens per second output speed, nearly 2x faster than the class median

- $0.15 per million input tokens — among the cheapest multimodal reasoning models available

That last point deserves emphasis. At 5–7x cheaper than comparable proprietary models, Mistral Small 4 demolishes the cost-performance tradeoff that has historically forced builders to choose between quality and affordability.
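To make the pricing concrete, here is a rough back-of-the-envelope sketch. The $0.15 input price and the 5–7x multiplier come from the figures above; the 50-million-token monthly workload is a made-up example, and output-token pricing (billed separately) is not included.

```python
INPUT_PRICE_PER_MILLION_USD = 0.15  # Small 4 input price quoted above


def input_cost_usd(tokens: int, usd_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Return the input-token cost in USD for a given token count."""
    return tokens / 1_000_000 * usd_per_million


# Hypothetical workload: 50 million input tokens per month.
monthly_tokens = 50_000_000
small4_cost = input_cost_usd(monthly_tokens)

# Implied spend at 5x and 7x the price, using the multiplier quoted above.
comparable_low = small4_cost * 5
comparable_high = small4_cost * 7

print(f"Small 4 input cost: ${small4_cost:.2f}")
print(f"Comparable proprietary range: ${comparable_low:.2f} to ${comparable_high:.2f}")
```

Swap in your own monthly token count to see where the gap starts to matter for your budget.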

What makes Small 4 special is its MoE efficiency. Think of it like a consulting firm with 128 specialist departments — for each task, only the 4 most relevant specialists are called. The result is 95% less compute per token compared to a dense 119B model, but with the knowledge depth of a much larger system.
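The “95% less compute” figure can be sanity-checked from the parameter counts listed above. This is a simplified sketch that assumes per-token compute scales with the number of active parameters, which is an approximation rather than a measured result.

```python
# Figures quoted in the architecture highlights above.
TOTAL_PARAMS_B = 119   # total parameters, in billions
ACTIVE_PARAMS_B = 6    # parameters active per token, in billions
NUM_EXPERTS = 128
ACTIVE_EXPERTS = 4

# Share of the model's weights that participate in each token.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

# Approximate compute saved versus a dense model of the same total size,
# assuming compute is proportional to active parameters.
compute_saving = 1 - active_fraction

# Share of experts the router selects for each token.
expert_fraction = ACTIVE_EXPERTS / NUM_EXPERTS

print(f"Active parameters per token: {active_fraction:.1%}")
print(f"Approximate compute saving:  {compute_saving:.1%}")
print(f"Experts used per token:      {expert_fraction:.1%}")
```

The expert share comes out lower than the parameter share, most likely because non-expert layers such as attention and embeddings run for every token regardless of routing.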

Real-world use cases:

- For developers: Build agentic pipelines that handle reasoning, code generation, and image parsing within a single API call. No more routing logic between three models.

- For founders: Reduce infrastructure complexity dramatically. One model, one endpoint, one cost line.

- For marketers: Use its multimodal capabilities to process visual content alongside text briefs in the same workflow.

- For students: Access a genuinely powerful reasoning model at a fraction of what GPT-4o costs.

Ministral 3 Series — The Efficiency Champions

Alongside Large 3, Mistral also released the Ministral 3 family — three dense models at 14B, 8B, and 3B parameters, all under Apache 2.0.

The 14B reasoning variant is a standout in Mistral AI’s 2026 lineup. It scores 85% on AIME 2025, beating Qwen-14B (which scores 73.7%), making it one of the best small reasoning models available anywhere, open or proprietary.

These models are designed for:

- Edge device deployment — the 3B model can run on smartphones and tablets

- Cost-sensitive production environments — token output is often an order of magnitude shorter than comparable models

- Fine-tuning — their compact size makes domain-specific adaptation fast and affordable

For freelancers and solopreneurs: The Ministral 3B is an especially compelling model. It’s small enough to run locally, powerful enough for real tasks, and free to deploy.

Voxtral TTS — The Voice Revolution

If you thought Mistral AI’s latest 2026 models were only about text, Voxtral TTS will change your perspective entirely.

Released on March 23, 2026, Voxtral TTS is Mistral’s first text-to-speech model — and it’s a direct challenge to ElevenLabs and OpenAI’s voice stack.

Key specs:

- Built on Ministral 3B — only 4 billion parameters

- Runs on a single GPU with 16GB VRAM; with quantization, footprint drops to 3GB

- Supports 9 languages: English, French, German, Spanish, Dutch, Portuguese, Italian, Hindi, and Arabic

- Zero-shot voice cloning with as little as 3 seconds of reference audio

- Real-time streaming with 70ms model latency on H200 hardware

- Priced at $0.016 per 1,000 characters via API

- Open weights available on Hugging Face under CC BY NC 4.0
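Two of these specs lend themselves to quick arithmetic: the memory footprint and the API price. The sketch below estimates weight memory only (parameters times bytes per parameter) and assumes 16-bit weights by default and 4-bit weights when quantized. Both precisions are assumptions, and the quoted 16GB and 3GB figures presumably also cover activations and other runtime overhead. The 10,000-character script is a hypothetical example.

```python
PARAMS_BILLION = 4                    # Voxtral TTS size quoted above
PRICE_PER_1K_CHARS_USD = 0.016        # API price quoted above


def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_param / 8


def tts_cost_usd(num_chars: int) -> float:
    """API cost in USD for synthesizing `num_chars` characters of text."""
    return num_chars / 1000 * PRICE_PER_1K_CHARS_USD


fp16_gb = weight_memory_gb(PARAMS_BILLION, 16)  # assumed 16-bit weights
int4_gb = weight_memory_gb(PARAMS_BILLION, 4)   # assumed 4-bit quantization
script_cost = tts_cost_usd(10_000)              # hypothetical 10,000-character script

print(f"Weights at 16-bit: {fp16_gb:.1f} GB")
print(f"Weights at 4-bit:  {int4_gb:.1f} GB")
print(f"Cost for a 10,000-character script: ${script_cost:.2f}")
```

The weight-only estimates sit below the quoted VRAM figures, which is consistent with those figures including headroom for inference.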

Voxtral doesn’t just recite text — it interprets it. The model captures emotional register, natural pauses, rhythm, intonation, and even subtle vocal fillers. Human evaluators found it comparable to ElevenLabs Flash v2.5 and approaching parity with the larger v3 model.

Who needs Voxtral TTS?

- SaaS builders creating voice-enabled customer support bots (exactly like Priya from our opening story)

- Developers building multilingual voice assistants for global markets

- Content creators who need fast, natural-sounding voiceovers without ElevenLabs pricing

- Startups building voice AI products who want data sovereignty by running the model on their own infrastructure

Leanstral — Formal Proof Engineering

Released March 16, 2026, Leanstral is one of the most niche but fascinating of Mistral AI’s 2026 releases. It stands out as the first open-source AI agent built specifically for Lean 4 formal proof engineering, meaning it generates code together with a machine-checkable mathematical proof that the code is correct. According to Mistral, Leanstral beats Claude Sonnet 4.6 by 8 points at pass@16 while costing 15x less.
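To show what “code plus a machine-checkable proof” looks like in practice, here is a deliberately tiny Lean 4 illustration. It is a hand-written toy, not Leanstral output, and it assumes a recent Lean 4 toolchain in which the `omega` tactic is available.

```lean
/-- Doubles a natural number. -/
def double (n : Nat) : Nat := n + n

/-- Machine-checked guarantee: `double n` always equals `2 * n`. -/
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  unfold double
  omega
```

If the proof were wrong or incomplete, Lean would reject the file at compile time, which is what makes this style of verification attractive for safety-critical code.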

For developers working in safety-critical systems, cryptography, or verified software, this model is a total game-changer. However, managing specialized models like Leanstral alongside others can get expensive. Many builders are now looking for one subscription that covers all their AI models to streamline their workflow. Instead of paying for five different platforms, a platform that combines multiple AI providers lets you access niche tools like Leanstral and flagships like GPT-5 in one place.

Choosing a single subscription for multiple AI models is currently one of the best ways to save on AI model subscriptions, ensuring you have the right tool for the job, whether it’s formal verification or creative writing, without the massive overhead.

Mistral Forge — Enterprise AI Training

Announced at NVIDIA GTC on March 17, 2026, Forge is Mistral’s play for the enterprise training market.

Unlike fine-tuning (which adjusts a fraction of model weights) or RAG (which retrieves context), Forge supports the full training lifecycle on proprietary data. The centerpiece is Mistral Vibe, an autonomous agent that manages hyperparameter search, synthetic data generation, and job scheduling.

Early enterprise adopters include ASML (semiconductor manufacturing optimization) and Ericsson (5G network management). This is infrastructure-level AI for organizations that need custom frontier models without building them from scratch.

Why So Many Builders Struggle to Keep Up With Mistral AI Latest Models 2026

Here’s the honest truth: the pace of AI development in 2026 is brutal.

Mistral shipped six products in 15 days. OpenAI, Google, and Anthropic are all releasing major updates on similarly compressed timelines. For any individual developer, founder, or marketer trying to stay current — and actually use these models in their work — the cognitive overhead is enormous.

The problem isn’t access. Most of Mistral AI’s latest 2026 models are available via API. The problem is fragmentation:

- You need a Mistral API key for Large 3 and Small 4

- A different platform for ElevenLabs if you haven’t switched to Voxtral yet

- ChatGPT Plus for GPT-5.4 access

- Claude Pro for Anthropic’s models

- Gemini Advanced for Google’s stack

And if you want to compare how different models handle the same task? You’re copying and pasting between browser tabs like it’s 2022.

This is exactly the problem that Aizolo was built to solve.

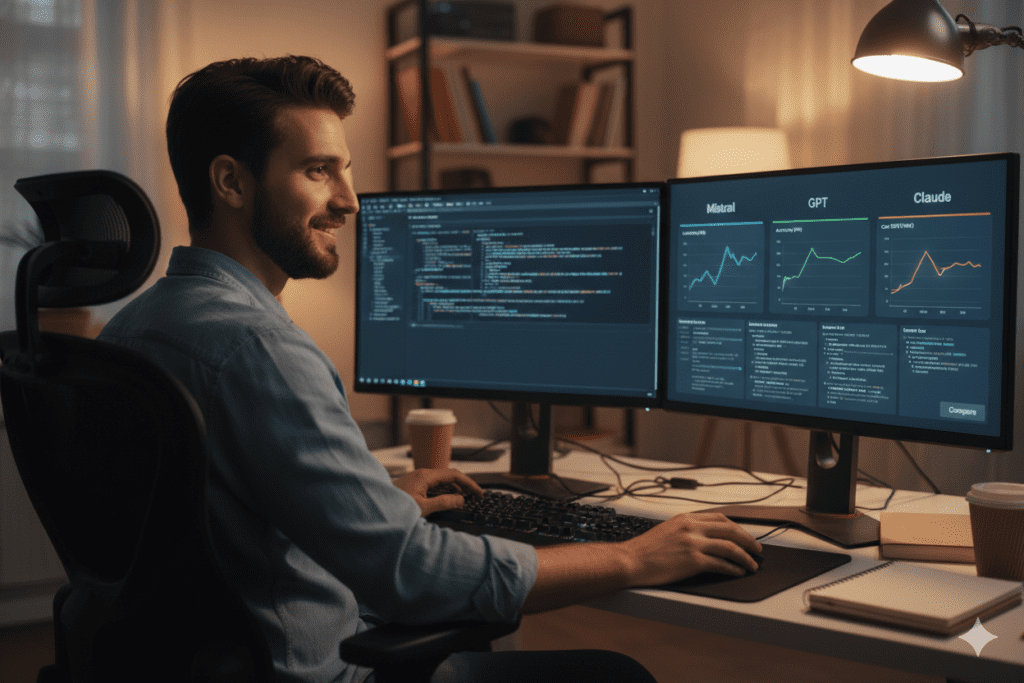

How Aizolo Helps You Work With Mistral AI Latest Models 2026 (and Every Other Model)

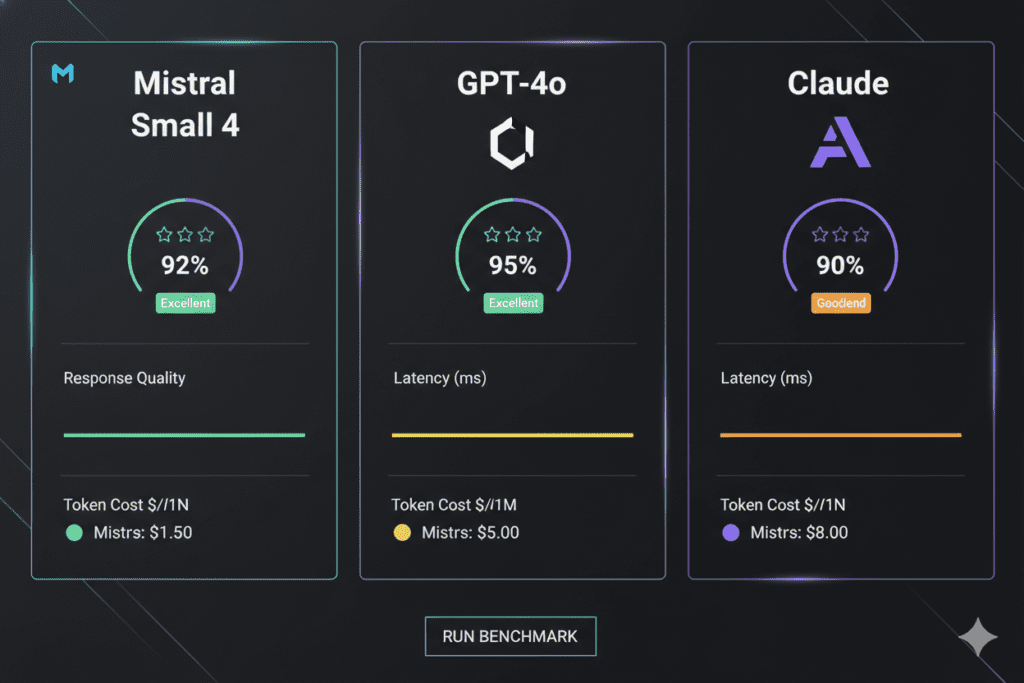

Aizolo is an all-in-one AI subscription platform that gives you access to every major AI model, including Mistral AI’s latest 2026 models, from a single dashboard, for $9.90 per month.

Instead of juggling five different subscriptions that total over $110 per month, Aizolo consolidates everything into one workspace with powerful features built specifically for builders, creators, and curious professionals.

What Aizolo gives you:

Side-by-side model comparison. Ask Mistral Large 3, GPT-4o, and Claude the same question simultaneously and compare responses in real time. This is invaluable when you’re deciding which model to use for a specific task — reasoning, creative writing, coding, data analysis.
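The general idea behind side-by-side comparison, sending one prompt to several models at once and collecting the answers, can be sketched in a few lines. This is not Aizolo’s implementation; the `ask_all` helper and the stub models are hypothetical stand-ins, and real code would wrap each provider’s own client in place of the lambdas.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

ModelFn = Callable[[str], str]


def ask_all(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send one prompt to every model concurrently and collect answers by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        # Submit every call first so the models run in parallel.
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        # Then wait for each result, keeping the original model order.
        return {name: future.result() for name, future in futures.items()}


# Stub models for illustration only; replace with real provider calls.
stub_models: Dict[str, ModelFn] = {
    "model-a": lambda p: f"A says: {p.upper()}",
    "model-b": lambda p: f"B says: {p[::-1]}",
}

answers = ask_all("hello", stub_models)
for name, text in answers.items():
    print(f"{name}: {text}")
```

Threads are a reasonable fit here because each call spends most of its time waiting on the network rather than computing.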

Immediate Access to the Cutting Edge

As Mistral AI’s latest 2026 models roll out (and they are rolling out fast), Aizolo updates its model library instantly. Whether it’s the latest flagship or a niche release like Leanstral, you gain access without the friction of hunting for new API keys every time Mistral ships an update.

In an era where users are constantly evaluating which AI companies offer the best value for money, Aizolo simplifies the decision-making process.

You no longer have to manually research whether Claude is cheaper than ChatGPT for every specific task; instead, you can ask the same question to multiple AI models simultaneously. This helps you get the most cost-effective and accurate results across the entire 2026 model landscape from a single interface.

Stop Reinventing the Wheel

Save your most effective prompts across all models. When you perfect a workflow built on Mistral Small 4 (which unifies Magistral, Pixtral, and Devstral into one efficient engine) and its configurable reasoning mode, you can save it to your library and reuse it instantly in every future session.

Persistent AI Memory

Aizolo remembers your preferences, project context, and past conversations. This eliminates the “cold start” problem, ensuring you aren’t re-explaining your technical requirements every time you open a new chat window. It’s especially useful when you ask the same question to multiple AI models, as it keeps your context consistent across every engine you test.

Bring Your Own Keys (BYOK)

Maintain total control over your billing. Aizolo’s encrypted key storage allows you to plug in your existing Mistral API keys, letting you use Mistral AI’s latest 2026 models within our unified interface without ever compromising your credentials.

Smart Savings & Comparison

In a landscape where users often ask whether Claude is cheaper than ChatGPT, Aizolo provides the answer through transparency. By consolidating access, we offer one of the best ways to save on AI model subscriptions, costing as little as $9.90/month compared to the $90+ you would spend on individual premium plans. When deciding which AI companies offer the best value for money, you no longer have to choose just one; you can access them all through a single, secure dashboard.

Multi-Modal Mastery

Go beyond the text box. Aizolo integrates high-end Image, Video, and Audio generation (including DALL-E and Midjourney-style models) directly into your dashboard. From formal proof engineering to creative asset generation, it is all accessible from a single, streamlined workspace.

Real-World Workflows: Mistral AI Latest Models 2026 + Aizolo

Let’s make this concrete. Here’s how different types of users are combining Mistral AI’s latest 2026 models with Aizolo’s platform:

For SaaS Founders (The “Value” Seekers): Use Mistral Small 4 via Aizolo for your main product pipeline; it handles reasoning, vision, and code in one API call. When deciding which AI companies offer the best value for money, you can directly compare its output against Claude and GPT-4o before committing. With one subscription for all your AI models, you can finally stop paying three separate, expensive platform fees.

For Developers Building Voice Products: Voxtral TTS is now accessible without a dedicated ElevenLabs contract. Aizolo lets you ask the same question to multiple AI models, so you can test Voxtral’s voice output against competing models side by side before deciding on your production stack.

For Marketers Handling Multilingual Content: Ministral 3’s multilingual capabilities (especially its 14B variant) handle content generation across 9+ languages. If you’ve ever wondered whether Claude is cheaper than ChatGPT for translation, use Aizolo’s comparison feature to find the most cost-effective model for your specific language pair.

For Students on Tight Budgets: At $9.90/month, Aizolo is one of the best ways to save on AI model subscriptions. You get access to Mistral’s latest models, such as Mistral Large 3 and Small 4, alongside GPT-4o, Claude, and Gemini, all of which would cost $110+/month individually. This single subscription for multiple AI models gives you strong research tools without the heavy financial burden.

For Freelancers & Agencies: Use Aizolo’s Prompt Manager to save your best prompts for different client types. Because Aizolo brings multiple AI models together, you can compare how Mistral Small 4 handles a brief versus Claude or GPT-4o, ensuring you deliver the highest quality output rather than just the easiest one to access.

For Educators and Researchers: Mistral Large 3’s 256K context window, accessible through Aizolo, lets you process entire research papers or datasets in a single conversation. It’s a practical way to use Mistral AI’s latest 2026 models for deep-dive analysis without the need for complex data chunking.
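Before pasting a long document into a single conversation, it helps to estimate whether it will fit. The sketch below uses a rough rule of thumb of about four characters per token for English text. That ratio, the 256,000-token reading of “256K”, and the output reserve are all assumptions; a real tokenizer will give different counts.

```python
CONTEXT_WINDOW_TOKENS = 256_000  # assumed reading of the "256K" window
CHARS_PER_TOKEN = 4              # rough heuristic for English text, not exact


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, reserve_for_output: int = 4_000) -> bool:
    """Check whether the text plus a reserve for the reply fits the window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_WINDOW_TOKENS


paper = "x" * 400_000           # stand-in for a ~400,000-character paper
large_corpus = "x" * 1_200_000  # stand-in for a much larger dataset dump

print(fits_in_context(paper))
print(fits_in_context(large_corpus))
```

Very large datasets can still exceed the window, so a check like this shows when chunking is unavoidable.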

Explore more expert guides on Aizolo →

The Open-Source Advantage: Why Mistral AI Latest Models 2026 Matter Beyond the Benchmarks

One thing that gets lost in benchmark comparisons is what open-source actually means for practical builders.

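Most of the major Mistral models covered here (Large 3, Small 4, Ministral 3, and Leanstral) are released under the Apache 2.0 license or similarly permissive terms. The notable exception is Voxtral TTS, whose open weights use the non-commercial CC BY NC 4.0 license. For the permissively licensed models, this means: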

- No vendor lock-in. You can self-host, fine-tune, and modify without permission.

- Data sovereignty. Enterprises with GDPR or data privacy requirements can run these models entirely on their own infrastructure.

- Cost control. When you self-host, you pay only for compute — not per-token API markups.

- Community acceleration. Apache 2.0 models attract open-source contributors who create fine-tuned variants, quantized versions, and integrations far faster than any single company’s engineering team.

This is fundamentally different from the proprietary model ecosystem. When Mistral releases a model under Apache 2.0, they’re not just publishing a tool — they’re seeding an entire ecosystem.

For the developer community, Mistral Small 4 is already available on vLLM, llama.cpp, SGLang, Hugging Face Transformers, and NVIDIA NIM. You can run it locally, on cloud infrastructure, or via the Mistral API. The choice is yours.

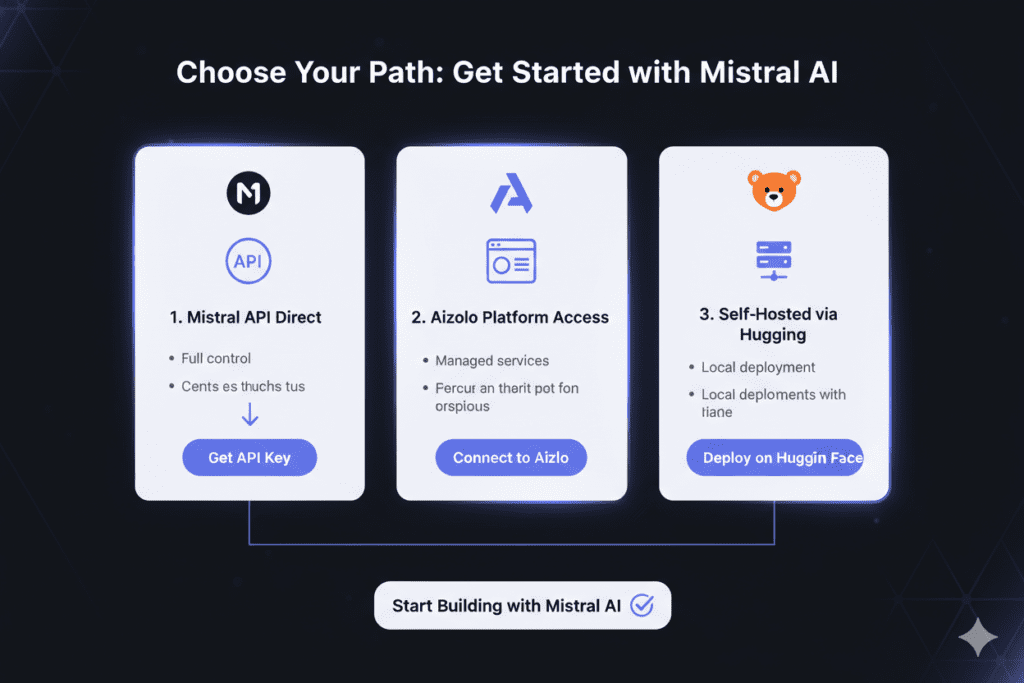

How to Actually Get Started With Mistral AI Latest Models 2026

Getting hands-on with Mistral AI’s latest 2026 models is simpler than you might think:

Path 1: Direct API Access. Head to Mistral AI’s platform and sign up for an API key. Models are available on Mistral Studio, and pricing for Small 4 starts at $0.15 per million input tokens.
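As a starting point for direct access, here is a minimal sketch that builds (but does not send) a chat completion request using only the Python standard library. The endpoint is Mistral’s public chat completions URL. The `mistral-small-latest` model name, the `MISTRAL_API_KEY` environment variable, and the `"high"` value for `reasoning_effort` are assumptions for illustration, so check the official API documentation for the exact model ID and accepted values.

```python
import json
import os
import urllib.request
from typing import Optional

API_URL = "https://api.mistral.ai/v1/chat/completions"


def build_chat_request(
    prompt: str,
    api_key: str,
    model: str = "mistral-small-latest",     # assumed alias; verify in the docs
    reasoning_effort: Optional[str] = None,  # parameter named above; values assumed
) -> urllib.request.Request:
    """Build a POST request for a single-turn chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if reasoning_effort is not None:
        payload["reasoning_effort"] = reasoning_effort
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Falls back to a placeholder key so the sketch runs without credentials.
api_key = os.environ.get("MISTRAL_API_KEY", "demo-key")
req = build_chat_request("Summarize MoE routing in one sentence.", api_key, reasoning_effort="high")

print(req.full_url)
print(json.loads(req.data))
# To send it for real (needs a valid key and network access):
#   with urllib.request.urlopen(req) as resp: print(json.load(resp))
```

Separating request construction from sending makes the payload easy to inspect and test before spending any tokens.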

Path 2: All-in-One via Aizolo. If you want to compare Mistral models against GPT, Claude, and Gemini without managing multiple API keys or subscriptions, start free on Aizolo. The Pro plan at $9.90/month gives you access to all premium models, the comparison dashboard, Prompt Manager, and AI Memory in one place.

Path 3: Self-Hosted Open Source. All major Mistral 2026 models are available on Hugging Face. If you have the hardware (or budget for cloud compute), you can deploy models like Small 4 or Ministral 14B on your own infrastructure with full control.

Path 4: Cloud Marketplaces. Mistral models are available on Amazon Bedrock, Azure AI Foundry, IBM WatsonX, Together AI, Fireworks, and NVIDIA NIM. If you’re already building in a cloud-native environment, this is often the lowest-friction path.

Read more expert guides on Aizolo →

What to Watch Next: The Mistral Roadmap

Based on what Mistral has shipped so far in 2026 and their stated direction, here’s what smart builders should watch for:

Mistral Large 3 Reasoning Variant: The official blog post explicitly mentioned a reasoning version “coming soon.” Given the 14B Ministral reasoning model’s impressive AIME scores, a reasoning-optimized Large 3 could be a serious competitor to OpenAI’s o-series.

End-to-End Voice Platform: Mistral’s VP of Science explicitly mentioned building a platform that handles multimodal streams of audio, text, and image input/output. Voxtral TTS is the first piece — the full suite is coming.

Forge Enterprise Expansion: With ASML and Ericsson as anchor customers, Forge’s full training platform is positioned to capture significant enterprise AI budget. Expect more integrations and potentially a SaaS pricing model.

NVIDIA Nemotron Coalition: Mistral is a founding member of NVIDIA’s new Nemotron Coalition, which should drive continued optimization of Mistral models on NVIDIA hardware — better performance, lower inference costs, wider deployment options.

For anyone building with AI in 2026, staying current with Mistral AI’s latest models isn’t optional; it’s a competitive advantage. The models that seemed cutting-edge six months ago are rapidly becoming the baseline.

Follow Aizolo for practical tech & startup insights →

Conclusion: Mistral AI Latest Models 2026 Are Rewriting the Rules — Don’t Get Left Behind

Remember Priya from the beginning of this article? She eventually made the switch. She replaced her three-model, three-subscription pipeline with Mistral Small 4 — one model, one API, one cost line.

Her monthly AI spend dropped by more than 70%. Her pipeline simplified dramatically. And she gained capabilities (multimodal, reasoning, and code) she hadn’t even been planning for.

That’s the real story of Mistral AI’s latest 2026 models. This isn’t just about new model releases or benchmark improvements. It’s about a fundamental shift in what’s possible, and what’s affordable, for builders at every level.

The Mistral AI latest models in 2026 include:

- Mistral Large 3 — the most capable open-weight MoE model at 675B parameters

- Mistral Small 4 — a unified reasoning + vision + coding model at a budget price

- Ministral 3 series — compact, efficient models for edge and cost-sensitive deployment

- Voxtral TTS — open-weight voice AI with zero-shot voice cloning

- Leanstral — formal proof engineering for verified software

- Mistral Forge — enterprise-grade custom model training

And with Aizolo, you don’t have to choose between these models and the rest of the AI ecosystem. You can access, compare, and build with all of them, including every one of Mistral AI’s latest 2026 models, from a single platform for less than $10 a month.

The race is moving fast. Start building smarter with Aizolo — before the next 15 days bring six more releases you haven’t had time to read about yet.
