The Day Nisha Realized Her AI Was Lying to Her — Not With Facts, But With Framing

Nisha is a 32-year-old content strategist based in Bengaluru. She runs a boutique agency that creates research-backed content for SaaS companies, think tanks, and NGOs across South Asia. Her work depends on accuracy. Nuance. Balance.

One afternoon in early 2026, she was using her primary AI assistant to draft a briefing on regulatory policy for a client in the financial sector. The AI’s response was confident, well-structured, and eloquent. It even cited frameworks and positions. It felt authoritative.

But something felt off. Nisha noticed the AI had subtly framed every counterargument as weaker, less logical, less credible, even when the opposing viewpoint was held by respected economists. It wasn’t lying. It wasn’t hallucinating. It was doing something arguably more dangerous: it was consistently nudging the narrative in one direction.

Nisha had just encountered AI bias — and she hadn’t even been looking for it.

This is the real, quiet crisis at the center of any least biased AI model comparison in 2026. The question is not whether AI gets facts wrong, but whether AI is framing your thinking (for research, for decisions, for content, for business strategy) without you realizing it.

If you’ve ever wondered which AI you can actually trust to stay neutral, this guide is for you.

Why AI Bias Is the Most Underrated Problem of 2026

When most people talk about choosing the right AI model, they compare speed, context window size, coding ability, and benchmark scores. These matter. But there’s a dimension that almost nobody talks about in public: political and ideological bias baked into the model itself.

In 2026, AI chatbots influence critical decisions for over 800 million weekly users worldwide, from business strategy to personal advice to policy research.

Yet multiple independent university studies have revealed that these systems carry measurable political, cultural, and even gender biases that directly shape the information users receive.

This isn’t theoretical. A study published through IEEE found that different frontier AI models show distinctly different ideological leanings based on their training data and human feedback processes.

Another major research effort from Stanford University showed that users perceive most popular AI systems as having a left-leaning political slant, and that just a small adjustment in prompting could shift responses toward greater neutrality.

For everyday users (marketers, students, founders, developers) this matters enormously. When you ask an AI for a market analysis, a competitive overview, a policy summary, or a strategic recommendation, you want an answer that reflects reality, not one filtered through an invisible ideological lens.

Comparing AI models on bias is not a political debate. It’s a question of professional reliability.

What Does “Bias” Actually Mean in AI Models?

Before we compare models, let’s define what we’re actually measuring. AI bias shows up in several different ways:

Political bias is the most studied form: does a model consistently favor one political orientation over another when discussing policy, governance, or social issues?

Framing bias is subtler and arguably more consequential for professionals. Even when a model gets the facts right, does it frame certain positions as more credible, more logical, or more mainstream than others?

Demographic and gender bias refers to how models respond differently to users based on perceived gender, cultural background, or linguistic cues. A UC Santa Cruz study published in 2025 found that GPT-4o showed measurable gender-based empathy differences in certain response patterns.

Recency and source bias refers to whether a model systematically draws more heavily from certain types of sources: academic vs. mainstream media, Western vs. global perspectives, corporate vs. independent voices.

Understanding these different forms of bias is the starting point for a real, useful comparison.

The Least Biased AI Model 2026 Comparison: Breaking Down the Major Players

Here’s what the evidence actually shows in 2026. Note that no AI model is perfectly neutral; that’s an impossible standard. What we’re comparing is the degree of measured bias and the transparency with which each lab addresses it.

Claude (Anthropic) — The Most Transparent About Even-Handedness

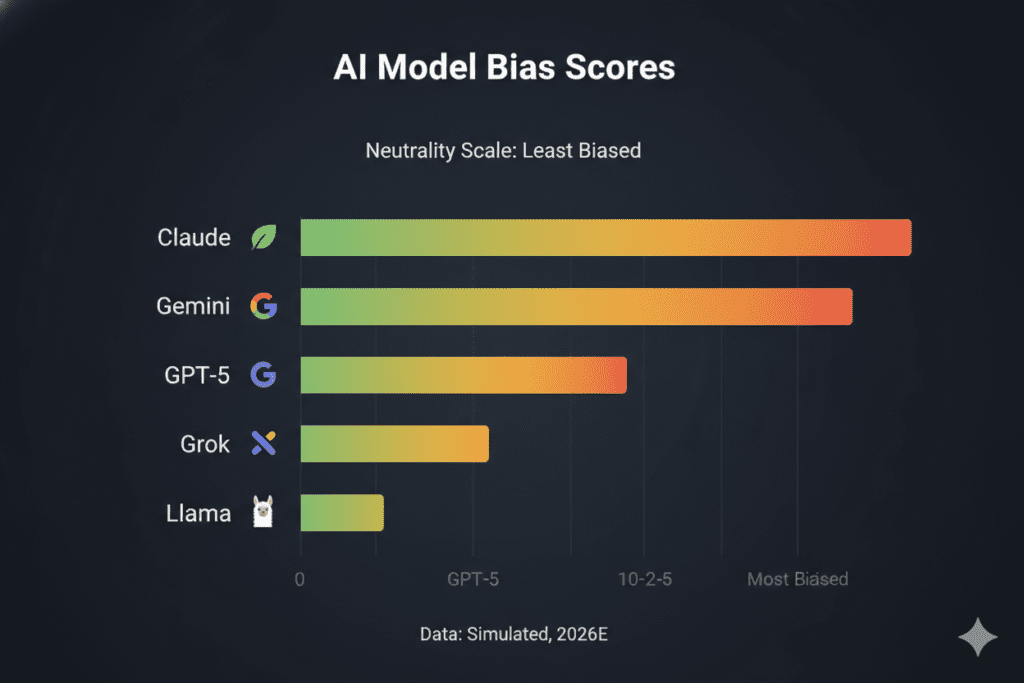

Anthropic has published some of the most rigorous public research on political bias of any AI lab. Their internal evaluations, using 1,350 pairs of politically sensitive questions, tested Claude Sonnet 4.5 alongside GPT-5, Gemini 2.5 Pro, Grok 4, and Llama 4 Maverick.

The results showed Claude Sonnet 4.5 performing at a high level of even-handedness, scoring comparably to Grok 4 and Gemini 2.5 Pro, while GPT-5 and Llama 4 scored lower in the same evaluation. Anthropic’s Claude Opus 4.1 achieved a 95% neutrality score and Claude Sonnet 4.5 reached 94% in separate research tracking political even-handedness.

Perhaps more importantly, Anthropic open-sourced its evaluation methodology. That’s a significant act of transparency. It means external researchers can reproduce the findings, challenge the methodology, and contribute to shared standards for measuring political bias in AI.

An independent study by Promptfoo placed Claude Opus 4 as the most centrist AI model in their 7-point Likert scale analysis — scoring 0.646 out of 1 where 0.5 represents true center. Claude Opus 4 also achieved the highest proportion of centrist responses at 16.1%, compared to Grok’s 2.1%, GPT-4.1’s 6.0%, and Gemini’s 5.5%.

In this comparison, Claude is the strongest performer on political and framing neutrality.

Gemini 2.5 Pro (Google DeepMind) — Centrist in Multiple Studies

Gemini has consistently appeared near the top in bias evaluations. An IEEE-published study examining ChatGPT-4, Perplexity, Google Gemini, and Claude found that Gemini adopted the most centrist stances of the four models tested, while ChatGPT-4 and Claude showed a more liberal lean in that particular dataset.

A separate study confirmed Google’s model tends toward centrist positions on politically charged prompts. Gemini 2.5 Pro performed comparably to Claude in Anthropic’s own even-handedness evaluation. For users who need geopolitical or policy-oriented research, Gemini is a strong choice when neutrality on these specific topics is the priority.

GPT-5 (OpenAI) — Capable but Less Neutral

GPT-5 is arguably the most powerful general-purpose AI model of 2026 in many task categories, but this comparison does not favor it on neutrality metrics. Anthropic’s evaluation found GPT-5 scored lower on political even-handedness than Claude. A Stanford study found users perceive OpenAI models as having four times greater left-leaning slant than Google models.

This doesn’t make GPT-5 a bad model — it makes it less suited for tasks where strict ideological neutrality matters, like policy research, competitive analysis, and academic writing.

Grok 4 (xAI) — The Wild Card

Grok 4 is the most polarizing entry in this comparison. The Promptfoo study placed Grok close to Claude on average political centrism, but revealed something important in the distribution: only 2.1% of Grok’s responses were actually centrist. Most responses swung between extremes, which averaged out to a centrist-looking score even though individual responses were rarely moderate.
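A small sketch can show how an average hides a spread. The ratings below are invented for illustration and are not taken from the Promptfoo study. The sketch assumes each response is rated on a 7-point Likert scale and rescaled to the 0 to 1 range with 0.5 as the center; the function names and the rescaling formula are assumptions as well.

```python
from statistics import mean

def normalize_likert(rating: int, points: int = 7) -> float:
    """Map a 1..points Likert rating onto 0..1 (assumed rescaling)."""
    return (rating - 1) / (points - 1)

def centrist_share(ratings: list[int], points: int = 7) -> float:
    """Fraction of ratings that sit exactly on the scale midpoint."""
    midpoint = (points + 1) // 2
    return sum(1 for r in ratings if r == midpoint) / len(ratings)

# Hypothetical ratings, invented for illustration only.
steady_model = [4, 4, 3, 5, 4, 4, 5, 3, 4, 4]    # clusters around the midpoint
swinging_model = [1, 7, 1, 7, 2, 6, 1, 7, 7, 1]  # alternates between extremes

for name, ratings in [("steady", steady_model), ("swinging", swinging_model)]:
    avg = mean(normalize_likert(r) for r in ratings)
    print(f"{name}: mean={avg:.2f}, centrist share={centrist_share(ratings):.0%}")
```

Both hypothetical models print a mean of 0.50, yet the steady one has a 60% centrist share and the swinging one has 0%. That is why the distribution of responses matters as much as the headline average.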

For users who need predictable neutrality — not just average neutrality — Grok is the least reliable option in this comparison.

Llama 4 (Meta) — Open Source with Notable Gaps

Llama 4 scored the lowest in Anthropic’s even-handedness evaluation, at 66% compared to Claude’s 94–95%. For developers building custom tools using open-source models, this is worth factoring in. The flexibility of Llama is a genuine advantage, but it comes with fewer built-in guardrails around ideological balance.

Quick Reference: Least Biased AI Model 2026 Comparison at a Glance

| AI Model | Political Neutrality | Framing Balance | Transparency | Best For |
|---|---|---|---|---|
| Claude (Anthropic) | ★★★★★ | ★★★★★ | ★★★★★ | Research, policy, academic work |
| Gemini 2.5 Pro | ★★★★☆ | ★★★★☆ | ★★★☆☆ | Geopolitical analysis, factual Q&A |
| GPT-5 | ★★★☆☆ | ★★★☆☆ | ★★★☆☆ | General tasks, creative work |
| Grok 4 | ★★★☆☆ | ★★☆☆☆ | ★★☆☆☆ | Experimental use, tech topics |
| Llama 4 | ★★☆☆☆ | ★★☆☆☆ | ★★★★☆ | Custom-built, fine-tuned applications |

Why You Can’t Rely on One Model Alone — and What Smart Professionals Do Instead

Here’s the insight that most AI bias comparison articles miss entirely.

Even if you identify the “least biased” model today, bias is not a fixed property. It shifts with every update, every fine-tuning cycle, every system prompt configuration. Anthropic itself notes that system prompts can appreciably influence model even-handedness — meaning the same base model can behave quite differently depending on how it’s deployed.

Smart professionals in 2026 don’t bet everything on a single model. They compare outputs across models.

Think about what Nisha could have done differently. Instead of relying on one AI to write her policy brief, she could have run the same prompt through Claude, Gemini, and GPT-5 simultaneously — and compared where their responses diverged. Those divergences are exactly where bias hides. Where models agree, you have higher confidence. Where they differ, you have a signal to investigate.
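The comparison described above can be roughed out in a few lines. This is a minimal sketch, not how any particular platform implements it: the model names and response texts are placeholders, the 0.5 threshold is arbitrary, and word-level similarity from Python's standard `difflib` is only a crude proxy. It can show that two answers differ, but a human still has to read them to judge whether the difference is framing.

```python
from difflib import SequenceMatcher
from itertools import combinations

def pairwise_similarity(responses: dict[str, str]) -> dict[tuple[str, str], float]:
    """Return a 0..1 word-level similarity ratio for every pair of models."""
    scores = {}
    for (name_a, text_a), (name_b, text_b) in combinations(responses.items(), 2):
        matcher = SequenceMatcher(None, text_a.lower().split(), text_b.lower().split())
        scores[(name_a, name_b)] = matcher.ratio()
    return scores

def flag_divergent(scores: dict[tuple[str, str], float],
                   threshold: float = 0.5) -> list[tuple[str, str]]:
    """Pairs whose similarity falls below the (arbitrary) threshold."""
    return [pair for pair, score in scores.items() if score < threshold]

# Placeholder responses; in practice these would be pasted in from each model.
responses = {
    "model_a": "The proposed rule raises compliance costs but improves transparency.",
    "model_b": "The proposed rule raises compliance costs but improves transparency for investors.",
    "model_c": "Critics are clearly wrong; the rule is an obvious win with no real downside.",
}

scores = pairwise_similarity(responses)
for pair, score in scores.items():
    print(pair, round(score, 2))
print("investigate:", flag_divergent(scores))
```

With these placeholder texts, the first two responses score as similar and every pair involving `model_c` is flagged. Flagged pairs are the ones worth reading closely, side by side.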

This is the real competitive advantage: not choosing the “best” model, but using a system that lets you compare models intelligently, side by side, in real time.

How Aizolo Turns Bias Detection Into a Daily Practice

This is where Aizolo enters the picture — not as a replacement for good judgment, but as the infrastructure that makes good judgment scalable.

Aizolo is an all-in-one AI platform that gives you access to all major AI models — Claude, GPT-5, Gemini, Grok, Perplexity, and more — in a single unified workspace, for just $9.90 per month. You don’t need five separate subscriptions. You need one smart platform.

But here’s the part that directly addresses the bias problem: Aizolo’s side-by-side comparison feature lets you run the same prompt through multiple models simultaneously and see their responses next to each other. This transforms bias detection from a theoretical exercise into a practical daily habit.

Instead of trusting one model’s framing of a geopolitical issue, you see all three framings at once. Instead of assuming one model’s take on a competitor analysis is neutral, you can spot where they diverge — and investigate why. That divergence is where the bias lives.

This is exactly the workflow that researchers, policy analysts, senior marketers, and data-driven founders are adopting in 2026. And Aizolo makes it accessible without requiring five separate subscriptions totalling over $110 per month.

Explore more insights on Aizolo

Real-World Use Cases: Who Needs the Least Biased AI Model and Why

For Researchers and Academics

A researcher studying public health policy needs AI that doesn’t subtly discount one body of evidence in favor of another. Using Aizolo’s side-by-side comparison, they can run the same literature review prompt through Claude and Gemini — two models that score highest on neutrality — and flag where the interpretations differ before drawing their own conclusions.

For Founders and SaaS Builders

A founder using AI to analyze competitor positioning or market trends needs outputs that reflect actual market dynamics — not outputs colored by the AI’s implicit assumptions about what business models are “better.” Running competitive analysis through multiple models and comparing results helps surface where framing differs and where objective data actually speaks for itself.

For Developers

A developer integrating AI into a product that touches social or policy topics (a news summarization tool, a legal research assistant, a civic tech platform) cannot afford to ship a model with strong ideological leanings. Bias comparison data is essential for model selection in these contexts. Aizolo’s platform lets developers test the same use case across models quickly, without managing multiple API keys and interfaces simultaneously.

For Marketers and Content Teams

A marketer writing for a diverse global audience needs content that doesn’t inadvertently alienate readers with subtle political framing. Comparing outputs across Claude and Gemini — both strong performers on neutrality — helps identify and eliminate framing that feels loaded or one-sided before it reaches the audience.

For Students

A student writing a thesis or policy paper who uses AI for research assistance should understand which models are most likely to present multiple perspectives fairly. Claude’s high even-handedness scores make it a strong default for academic use cases. But cross-referencing with Gemini adds an extra layer of confidence — and Aizolo makes that cross-referencing effortless.

For Freelancers

A freelance journalist or consultant who uses AI for research and drafting needs to protect their professional credibility. Using a single model that leans in a particular direction can quietly introduce framing that undermines their reputation for objectivity. The solution isn’t to stop using AI — it’s to use AI smarter by comparing outputs. Read more expert guides on Aizolo.

Practical Strategies for Reducing AI Bias in Your Workflow

Understanding which model is least biased is only the first step. Here are actionable strategies you can implement right now:

Run the same prompt across at least two models. For any high-stakes output — research, analysis, strategic recommendations — compare Claude and Gemini side by side as your baseline. Note where they agree and where they diverge.

Ask models explicitly to present multiple perspectives. Prompting an AI with “give me the three strongest arguments on each side of this issue” tends to surface more balanced outputs than open-ended prompts, regardless of the model’s baseline bias.

Use Aizolo’s comparison workspace to make multi-model comparison your default. The platform is built precisely for this workflow — run a single prompt through multiple frontier models simultaneously, see responses side by side, and identify where framing differs.

Flag responses where one position is consistently presented as obviously correct. If an AI consistently phrases one side of a nuanced issue as “clearly” or “obviously” true, that’s a signal worth examining. Good reasoning acknowledges complexity.

Stay current on bias research. The landscape shifts with every model update. Resources like the Anthropic blog, the Promptfoo research team, and IEEE publications regularly publish new bias evaluations. Follow Aizolo for practical tech and startup insights — the blog covers new model releases and their implications in real time.
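Two of the strategies above, asking explicitly for multiple perspectives and flagging certainty language, are easy to turn into small helpers. The sketch below is illustrative only: the function names, the marker list, and the sample draft are all assumptions, and a keyword scan will miss subtler framing.

```python
import re

# Illustrative marker list (an assumption); extend it for your own domain.
CERTAINTY_MARKERS = ("clearly", "obviously", "undeniably", "without question")

def balanced_prompt(topic: str, n_arguments: int = 3) -> str:
    """Wrap a topic in an explicit request for arguments on each side."""
    return (
        f"Give me the {n_arguments} strongest arguments on each side of this issue: "
        f"{topic}. Present each side in its own terms before offering any assessment."
    )

def find_certainty_markers(text: str) -> list[str]:
    """Return the sentences that contain a certainty marker (case-insensitive)."""
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(m) for m in CERTAINTY_MARKERS) + r")\b",
        re.IGNORECASE,
    )
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if pattern.search(s)]

# Hypothetical model output used only to exercise the scanner.
draft = (
    "Tighter capital rules reduce systemic risk. "
    "Opponents are obviously overstating the cost. "
    "Some economists expect lending to slow."
)

print(balanced_prompt("tighter bank capital requirements"))
for sentence in find_certainty_markers(draft):
    print("review:", sentence)
```

Here only the second sentence of the draft is flagged for review. A flagged sentence is not necessarily biased; it is simply a place where the model asserted rather than argued, and that deserves a second look.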

Why the “Least Biased” Answer Changes Over Time

One of the most important, and humbling, truths about any least biased AI model comparison is that it’s a moving target.

Anthropic updates Claude regularly. Google updates Gemini. OpenAI releases GPT updates. Each update potentially changes the model’s behavior on politically sensitive topics. System prompts, deployment configurations, and fine-tuning all influence how biased or balanced any given interaction feels.

This is precisely why Anthropic’s decision to open-source their political even-handedness evaluation methodology matters so much. If shared standards exist, the entire industry can be held accountable over time — not just in one snapshot comparison, but across the ongoing evolution of these systems.

It also means that the right workflow isn’t to pick one model and trust it forever. It’s to stay informed, compare regularly, and use a platform built for comparison as part of your professional toolkit.

The Bigger Picture: Bias, Trust, and the Future of AI-Assisted Work

We are at a pivotal moment. AI is no longer a novelty for early adopters — it’s infrastructure for professionals across every sector. Researchers, founders, marketers, developers, students, freelancers — everyone is using AI daily to inform decisions.

The question of which AI is least biased is, at its core, a question about trust. Can you trust the output you’re reading? Can you trust the framing of the analysis? Can you trust that the perspective your AI is presenting reflects reality rather than an invisible tilt in the training data?

In 2026, the honest answer is: you can partially trust any model, and you should fully trust none of them in isolation. The comparison above shows that Claude and Gemini lead on measurable neutrality metrics, but the smartest users don’t stop there. They compare. They question. They use tools built for exactly that purpose.

That’s the Aizolo philosophy: not which AI is best in absolute terms, but which AI — or combination of AIs — gives you the most accurate, reliable, and trustworthy result for your specific task, your specific context, your specific professional standards.

Start building smarter with Aizolo

Conclusion: The Least Biased AI Model in 2026 Is the One You Compare, Not Just Use

Let’s bring Nisha back for a moment. After that policy briefing incident, she changed her workflow. She stopped relying on a single AI. She started running her most consequential prompts through Claude and Gemini simultaneously using Aizolo’s comparison interface — then reading both responses side by side before drawing conclusions.

Her clients noticed. The quality of her research briefs improved. The balance of her analysis deepened. And she had something even more valuable than better content: she had confidence in her process.

Comparing AI models on bias ultimately isn’t about finding one perfect, neutral oracle. It’s about building a professional workflow that treats AI outputs with appropriate critical thinking, uses the most reliable models available, and leverages comparison as a built-in quality control step.

Claude leads on documented political even-handedness in 2026, with Gemini performing strongly as well. Both are far ahead of GPT-5 on neutrality metrics. Grok is inconsistent. Llama 4 lags significantly.

But the real answer is: use them together, side by side, with the intelligence to ask why they differ.

That’s what Aizolo is built for. And in 2026, that’s the professional standard that separates people who use AI from people who use AI well.

Explore more insights on Aizolo — and start your free trial at chat.aizolo.com to experience multi-model comparison for yourself.

Suggested External Links

- Anthropic’s Political Even-Handedness Research — Official methodology and results from Anthropic’s bias evaluation

- Promptfoo: Grok 4 Political Bias Study — Independent open-source analysis of political bias across frontier models

- Stanford Research: AI Bias Perception Study — Stanford’s research on perceived political slant in LLMs

- IEEE: Political Bias in Large Language Models — Peer-reviewed comparative analysis of bias across ChatGPT, Gemini, Perplexity, and Claude

- LLM Stats Leaderboard — Live performance and evaluation tracking for 500+ AI models