
The $110 Monthly Bill That Started Everything

It was a Tuesday morning in Hyderabad when Rohan, a 31-year-old SaaS founder, opened his credit card statement and felt his stomach drop.

He was paying for four separate AI subscriptions (ChatGPT Plus, Gemini Advanced, Claude Pro, and a Perplexity plan) because his product needed the best multimodal model he could find. His marketing team needed AI-generated video scripts. His developer needed a model that could parse code alongside images.

His researcher was drowning in PDFs and audio files. And he needed one model to process all of it (text, image, video, audio) in one intelligent loop.

“I just need the best multimodal AI model 2026 has to offer,” he told his co-founder. “One model that does it all.”

The problem? He had four subscriptions, zero clear answer, and a credit card bill that looked like a small monthly car payment.

If you’re asking the same question — what is the best multimodal AI model 2026 has released — you’re not alone. And you’re not overthinking it. In 2026, the answer genuinely matters. Multimodal AI is no longer a premium feature. It’s the baseline for anyone building serious products, running research, or trying to stay competitive.

This guide breaks down the 2026 multimodal AI landscape (Gemini vs GPT-5.4 vs Claude vs Grok vs others) with real benchmarks, real use cases, and a smarter way to compare them without spending $110 a month.

Why the Best Multimodal AI Model 2026 Question Is Harder Than It Looks

A couple of years ago, asking “what’s the best multimodal AI model?” had a simpler answer. GPT-4V handled images. Claude was text-first. Gemini was still finding its footing.

That era is over.

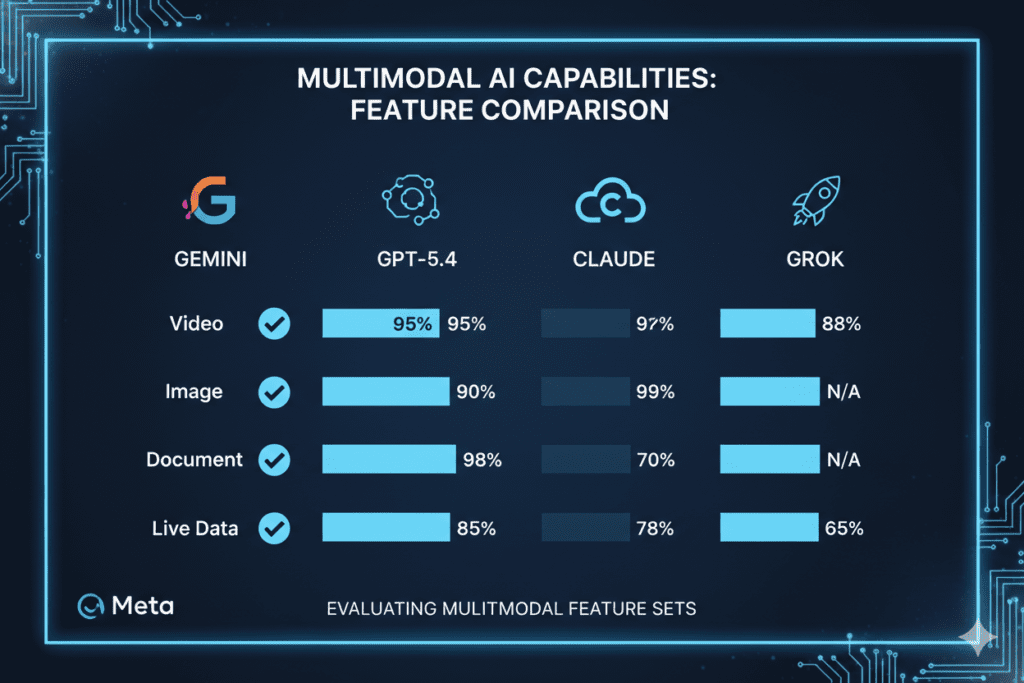

In 2026, nearly every frontier model claims multimodal capability. But here’s what most comparison articles miss: multimodal doesn’t mean the same thing across models.

Some models handle text + images only. Others process video natively. Some understand audio. A few can reason across all of them simultaneously, and that gap is massive in practice.

When you’re building a product that needs to:

- Parse a customer’s uploaded PDF and a screenshot together

- Generate a video from a text brief

- Transcribe a meeting and summarize it with visual context

- Analyze a codebase alongside architectural diagrams

…you’re not looking for a model that “supports images.” You’re looking for the best multimodal AI model 2026 offers: one that treats multiple input types as first-class citizens of its reasoning process.

Here’s why most people struggle with this question:

- Benchmark confusion. Labs report different scores using different tests. Gemini leads GPQA. Claude leads SWE-bench. GPT leads on some composite scores. Comparing them apples-to-apples requires context.

- Marketing vs. reality. “Multimodal” appears in every model’s description. The depth of capability varies enormously.

- Cost fragmentation. Testing the best multimodal AI model 2026 has to offer across Gemini, Claude, GPT, and Grok means paying $90–$110/month in separate subscriptions just to run your own comparison.

- Use-case mismatch. The best multimodal AI model 2026 for a researcher is not the same as the best one for a product marketer or a SaaS developer.

Let’s cut through all of it.

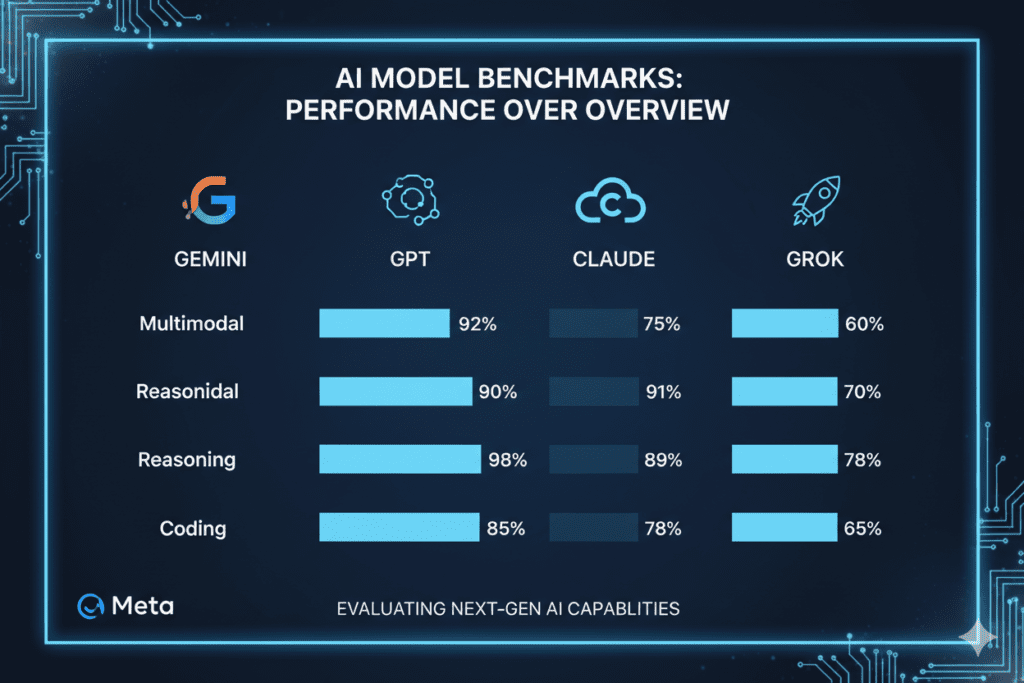

The Leading Multimodal AI Models in 2026: A Benchmark-First Overview

Gemini 3.1 Pro — The Multimodal Benchmark Leader

When you’re evaluating the best multimodal AI model of 2026, Gemini 3.1 Pro is the model that earns that title on paper, and often in practice.

Google’s Gemini 3.1 Pro entered 2026 as the clear leader in multimodal reasoning benchmarks. It scored 94.3% on GPQA Diamond (expert-level physics, chemistry, and biology questions), 77.1% on ARC-AGI-2 (more than double its predecessor), and leads on MMMU-Pro, a benchmark specifically designed for mixed-media understanding.

What makes Gemini 3.1 Pro exceptional for multimodal workflows:

- Native support for text, image, audio, video, PDFs, code repositories, and function calling — all within a single 1-million-token context window. This is not a bolted-on feature. Google built multimodality into Gemini’s architecture from the ground up.

- Video understanding at scale. Gemini 3.1 Pro can process multi-hour video files and extract structured insights — something no competing model matches at this context length.

- Tiered thinking levels (Low / Medium / High) let developers tune cost vs. quality per task — a practical advantage for production deployments.

- Deep Google ecosystem integration. Gmail, Docs, Drive, Meet, NotebookLM, Chrome, and developer APIs are all native touchpoints, meaning multimodal inputs flow naturally in and out of existing workflows.

- Best price-to-performance ratio among frontier models. At roughly $2 input / $12 output per million tokens, Gemini 3.1 Pro is significantly more affordable than Claude Opus 4.6 ($15/$75) and competitive with GPT-5.4 ($2.50/$15).

For research-heavy workflows, scientific analysis, enterprise document processing, and any task that mixes media types at scale, Gemini 3.1 Pro is the strongest single answer to the best multimodal AI model 2026 question.

Weakness to know: Tool calling reliability has shown some inconsistency in production environments, so it is important to test before deploying at scale.

GPT-5.4 — The All-Rounder With Strong Multimodal Chops

GPT-5.4 is the model most professionals reach for first — and for good reason. It’s the best all-around frontier model in 2026, combining strong multimodal capabilities with the deepest ecosystem, the broadest plugin support, and a reputation for reliability.

On multimodal benchmarks, GPT-5.4 trails Gemini 3.1 Pro on blended scores (53.9 vs. 90.4 on the OfficeQA Pro benchmark), but it holds its own on image understanding and document analysis.

More importantly, it excels at combining multimodal inputs with complex reasoning and tool use — making it the best multimodal AI model 2026 has for general-purpose agent systems.

Key multimodal strengths of GPT-5.4:

- Vision + audio processing in a unified interface

- Computer use capability (interacting with real desktop environments)

- Strong reasoning benchmark results (92.8% GPQA Diamond, just behind Gemini 3.1 Pro’s 94.3%)

- DALL-E integration for native image generation alongside analysis

- Canvas editor — the most polished editing environment for document and content work

For founders and product teams that need a reliable, do-everything model and don’t want to context-switch between tools, GPT-5.4 remains the safest default. It’s not the multimodal leader by benchmark, but it’s the most battle-tested in production.

Claude Opus 4.6 — The Writing and Coding Powerhouse With Vision

Claude Opus 4.6 is not the first name you’d list when evaluating multimodal models in the traditional sense. But underestimating it is a mistake.

Claude handles text + image inputs with exceptional reasoning depth. It doesn’t yet match Gemini’s native video processing, but for tasks that combine document analysis, code understanding, and vision — like reviewing a UI screenshot alongside a codebase, or parsing a technical diagram within a research paper — Claude Opus 4.6 produces the most thoughtful, nuanced responses of any model.

With a 128K output capacity and 1M token context window (beta), Claude Opus 4.6 is also the best multimodal AI model 2026 offers for long-form tasks: processing a 200-page PDF alongside supporting documents, reasoning across an entire codebase, or generating structured long-form analysis from mixed inputs.

For SaaS developers, technical writers, and anyone building document-heavy or code-heavy workflows, Claude deserves a serious place in your multimodal model evaluation.

Grok 4 — Real-Time Multimodal With Live Data

Grok 4 brings something genuinely unique to the best multimodal AI model 2026 conversation: real-time data access.

It supports vision inputs and processes text + images with solid performance. But its defining advantage is live access to data from X (formerly Twitter) and the web.

For marketers, social strategists, and anyone building products that need to combine multimodal inputs with current events, Grok 4 is the only model in this class that delivers freshness alongside image and text understanding.

For content teams and real-time research workflows, Grok 4 earns its place in any multimodal model shortlist.

DeepSeek V4 and Open-Source Challengers

The open-source picture in 2026 has changed dramatically. DeepSeek V4 now supports native multimodal inputs across text, images, and code with 1 trillion total parameters and a 40% memory reduction versus V3. For teams prioritizing data sovereignty, fine-tuning control, and cost at scale, DeepSeek V4 and Llama 4 are legitimate contenders in any best multimodal AI model 2026 evaluation.

The gap between open-source and closed-source models is narrowing in 2026, but closed models like Gemini still lead on video understanding, enterprise SLAs, and multimodal maturity.

Head-to-Head: Best Multimodal AI Model 2026 — Gemini vs Others

| Capability | Gemini 3.1 Pro | GPT-5.4 | Claude Opus 4.6 | Grok 4 |
|---|---|---|---|---|
| Image Understanding | ✅ Leader (MMMU-Pro 95) | ✅ Strong | ✅ Strong | ✅ Good |
| Video Processing | ✅ Leader (native, 1M ctx) | ⚠️ Limited | ❌ Not native | ❌ Not native |
| Audio Processing | ✅ Native | ✅ Native | ⚠️ Limited | ⚠️ Limited |
| Document + PDF | ✅ Excellent | ✅ Strong | ✅ Leader (128K output) | ✅ Good |
| Code + Vision | ✅ Strong | ✅ Strong | ✅ Leader | ✅ Good |
| Live/Real-Time Data | ⚠️ Search grounding | ⚠️ Plugin | ❌ No | ✅ Leader |
| Context Window | 1M tokens | 1M tokens | 1M tokens (beta) | Competitive |
| API Price (input/output, USD per 1M tokens) | $2/$12 | $2.50/$15 | $15/$75 (Opus) | $2/$15 |
| Reasoning Benchmark | 94.3% GPQA | 92.8% GPQA | 91.3% GPQA | Competitive |
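
To make the API price row concrete, here is a rough Python sketch that turns the per-million-token prices from the table into a monthly estimate. The workload (50M input tokens and 5M output tokens a month) is a made-up assumption for illustration, and the function name is hypothetical; plug in your own numbers.

```python
# Prices in USD per million tokens (input, output), copied from the table above.
PRICES = {
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "Grok 4": (2.00, 15.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly API spend in USD for a given token volume."""
    input_price, output_price = PRICES[model]
    return (input_tokens / 1_000_000) * input_price + (
        output_tokens / 1_000_000
    ) * output_price


if __name__ == "__main__":
    # Hypothetical workload: 50M input tokens and 5M output tokens per month.
    for model in PRICES:
        cost = monthly_cost(model, 50_000_000, 5_000_000)
        print(f"{model}: ${cost:,.2f}/month")
```

Because output tokens cost several times more than input tokens on every model listed, output-heavy workloads (long reports, generated code) shift the comparison more than input-heavy ones.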

The honest summary: For pure multimodal breadth — especially video and audio at scale — Gemini 3.1 Pro is the best multimodal AI model 2026 has produced.

For general-purpose reliability with solid multimodal support, GPT-5.4 is the safest bet. For deep document and code-plus-vision tasks, Claude Opus 4.6 outperforms. For real-time multimodal research, Grok 4 is unmatched.

Real-World Use Cases: Who Should Use Which Multimodal AI Model in 2026?

For Founders and SaaS Builders

If you’re building a product that processes user-uploaded files — PDFs, images, voice memos, video clips — Gemini 3.1 Pro’s native multimodal architecture is the most complete foundation. Its 1M context window means you can process entire client folders in a single API call. Its pricing makes it viable at production scale.
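
Before sending a whole client folder in one call, it helps to check whether it plausibly fits. The sketch below is a back-of-the-envelope check for text only. It assumes roughly four characters per token, which is a common rule of thumb for English text and not a figure from any model's documentation; images, audio, and video are counted differently and are not covered here. The function names and the output reserve are hypothetical.

```python
import math

CONTEXT_WINDOW_TOKENS = 1_000_000  # the 1M window cited above
CHARS_PER_TOKEN = 4  # rough assumption for English text, not an official figure


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_output: int = 8_000):
    """Return (fits, estimated_input_tokens) for a batch of text documents.

    reserve_for_output is a hypothetical allowance for the model's reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS, total


if __name__ == "__main__":
    # Stand-in documents; in practice these would be extracted file contents.
    folder = ["a" * 400_000, "b" * 2_000_000]
    fits, tokens = fits_in_context(folder)
    print(f"Estimated input tokens: {tokens:,} (fits: {fits})")
```

For anything close to the limit, use the provider's own token counter instead of this estimate.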

For the product layer (UX copy, marketing assets, pitch decks), layering in GPT-5.4 or Claude via a unified platform gives you the best of both worlds.

For Developers

The best multimodal AI model 2026 for developers depends on the task. For vision + code workflows (reviewing UI screenshots alongside codebases, parsing architectural diagrams, analyzing error screenshots), Claude Opus 4.6 produces the most accurate and contextually rich analysis.

For multimodal API integrations at scale, Gemini 3.1 Pro’s structured output + function calling support is the most production-ready.

For Marketers

Video content is now an AI-native workflow. Gemini’s native video understanding lets you extract scripts, analyze competitor videos, and process raw footage with a text prompt. Grok 4’s real-time data access makes it the best multimodal AI model 2026 offers for social-native marketing teams that need to tie visual inputs to current trends.

For Researchers and Students

Gemini 3.1 Pro’s GPQA leadership (94.3%) reflects real-world scientific reasoning superiority. For researchers working with mixed-media academic sources — papers with charts, datasets, audio interviews, lab video — Gemini’s 1M context and native multimodal processing is transformative. Claude Opus 4.6 adds depth for long-form synthesis.

For Freelancers

The best multimodal AI model 2026 offers for freelancers is whichever one matches your client’s deliverable, and you can’t afford to subscribe to all of them at $20+ each. A unified platform is the practical answer (more on that below).

For Content Creators

GPT-5.4 with its Canvas editor and DALL-E integration gives creators the most polished text-to-image pipeline. Gemini’s video understanding makes it the best multimodal AI model 2026 has for video-first creators processing raw footage. Combine both without paying for both separately.

The Real Problem Nobody Talks About: The Comparison Tax

Here’s the uncomfortable truth about finding the best multimodal AI model 2026 for your workflow:

You can’t know which model is best for your specific use case without testing all of them.

But testing all of them means paying for all of them. That’s $20 for ChatGPT, $20 for Gemini Advanced, $20 for Claude Pro, $30 for Grok — $90–$110 per month just to run your own best multimodal AI model 2026 comparison.

Most people solve this by picking one and hoping. They read a benchmark article, subscribe to Gemini because it topped the multimodal charts, and never find out that Claude’s vision + code reasoning might have been noticeably more accurate for their specific workflow.

This is what Rohan from Hyderabad was doing — paying for four subscriptions, still not sure if he had the right answer, still switching tabs every time his team’s needs shifted.

Then he found a smarter way.

How Aizolo Solves the Best Multimodal AI Model 2026 Problem

This is where Aizolo comes in — and it directly solves the most frustrating part of the best multimodal AI model 2026 search.

Aizolo is an all-in-one AI platform that gives you access to GPT-5.4, Claude, Gemini, Grok, Perplexity, and 2,000+ AI tools — all from a single dashboard — for $9.90 per month.

Think about what that means for your best multimodal AI model 2026 evaluation:

Instead of subscribing to Gemini Advanced ($20), Claude Pro ($20), ChatGPT Plus ($20), and Grok ($30) separately — spending $90–$110 per month — you run all of them side-by-side in Aizolo for $9.90.
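
The arithmetic is easy to check. This short Python sketch uses only the monthly prices quoted in this article; the variable names are illustrative.

```python
# Monthly list prices in USD, as quoted in this article.
separate_subscriptions = {
    "Gemini Advanced": 20,
    "Claude Pro": 20,
    "ChatGPT Plus": 20,
    "Grok": 30,
}
AIZOLO_MONTHLY = 9.90

listed_total = sum(separate_subscriptions.values())  # low end of the $90-$110 range
monthly_savings = listed_total - AIZOLO_MONTHLY
yearly_savings = monthly_savings * 12

print(f"Separate subscriptions: ${listed_total}/month")
print(f"Savings: ${monthly_savings:.2f}/month, ${yearly_savings:.2f}/year")
print(f"Aizolo as a share of ${listed_total}: {AIZOLO_MONTHLY / listed_total:.0%}")
print(f"Aizolo as a share of $110: {AIZOLO_MONTHLY / 110:.0%}")
```

The four listed prices add up to the low end of the range; the high end applies if you also carry a fifth plan such as Perplexity.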

You upload your PDF. You run it through Gemini and Claude simultaneously. You see which one extracts the structured data more accurately for your specific document type. You stop guessing. You know.

That’s not a minor convenience. That’s the difference between making an informed decision and making an expensive assumption.

What Aizolo gives you for your best multimodal AI model 2026 comparison:

- Side-by-side comparison across all major models — run the same multimodal prompt through Gemini, GPT, and Claude at once and see the difference

- AI Image Generator — multiple image models in one interface

- AI Video Generator — text-to-video with state-of-the-art models

- AI Audio Generator — voice synthesis, music generation, TTS

- Smart Prompt Manager — save and reuse your best multimodal prompts across all models

- AI Memory — your preferences and context persist across sessions

- Custom API Keys — bring your own keys (encrypted) for unlimited usage

- Import from ChatGPT or Claude — migrate your existing conversations instantly

- 2,000+ AI tools — new tools added weekly

For founders, developers, marketers, students, freelancers, and content creators — anyone trying to identify the best multimodal AI model 2026 has produced without breaking their budget — Aizolo is the practical solution.

Explore more insights on Aizolo → aizolo.com/blog

What the Benchmarks Don’t Tell You About the Best Multimodal AI Model 2026

Benchmarks are essential. But they’re also imperfect maps of real-world territory.

Here’s what the best multimodal AI model 2026 comparison research reveals that most articles miss:

1. Video is the biggest multimodal gap. Gemini 3.1 Pro’s native video processing isn’t just a feature — it’s a category. No other frontier model matches it at 1M context for video-native workflows. If your use case touches video, this comparison is effectively over.

2. Multimodal + reasoning depth is Gemini’s compound advantage. Winning GPQA at 94.3% means Gemini doesn’t just process multiple input types — it reasons about them at an expert level. For scientific research and complex analytical tasks involving charts, data, and text, this gap matters.

3. Claude’s document + code vision is underrated. Ask Claude Opus 4.6 to analyze a GitHub repository alongside a UI mockup and suggest improvements. The depth of that analysis will surprise you — and it’s one of the clearest examples of multimodal + language understanding operating at the frontier.

4. Benchmarks age fast. The AI landscape in 2026 is moving so quickly that Q1 rankings may not reflect Q2 reality. The best multimodal AI model 2026 answer you get from a March article may already be outdated by May. This is exactly why testing multiple models live — rather than relying on static rankings — is the smart strategy.

5. The right answer depends on your prompt, not just your use case. Two developers doing “code review with screenshots” may get dramatically different results from Gemini vs. Claude depending on the programming language, the complexity of the codebase, and the nature of the screenshots. The only way to know is to test both.
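
If you prefer to script the comparison yourself, the idea in point 5 can be sketched in a few lines of Python. Everything here is hypothetical: the `fake_*` functions are stand-ins, not real SDK calls, and you would replace each with a thin wrapper around whichever client library you actually use.

```python
from typing import Callable, Dict

# A "model" here is just any function that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def compare_models(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run the same prompt through every model and collect outputs by name."""
    results: Dict[str, str] = {}
    for name, call in models.items():
        try:
            results[name] = call(prompt)
        except Exception as exc:  # one failing model should not stop the run
            results[name] = f"ERROR: {exc}"
    return results


# Hypothetical stand-ins; swap in real API wrappers of your own.
def fake_gemini(prompt: str) -> str:
    return f"[gemini stub] reviewed: {prompt[:40]}"


def fake_claude(prompt: str) -> str:
    return f"[claude stub] reviewed: {prompt[:40]}"


if __name__ == "__main__":
    prompt = "Review this Go handler against the attached error screenshot."
    outputs = compare_models(prompt, {"gemini": fake_gemini, "claude": fake_claude})
    for name, text in outputs.items():
        print(f"--- {name} ---\n{text}\n")
```

Keeping the prompt identical across models is the point: any difference in the outputs then comes from the model, not from how the question was asked.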

Learn from real-world experience at Aizolo → aizolo.com/blog

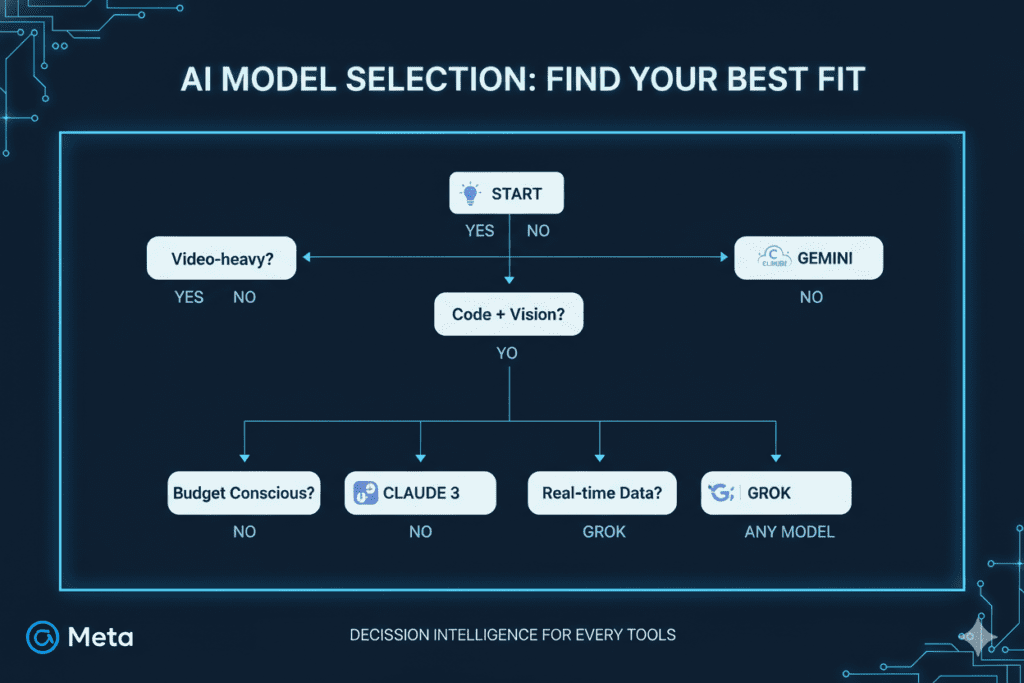

Actionable Framework: How to Choose the Best Multimodal AI Model 2026 for Your Workflow

Follow this framework before committing to any single model:

Step 1: Map your primary input types. Do you primarily work with: (a) text + images, (b) video, (c) audio, (d) documents/PDFs, (e) code + screenshots, or (f) all of the above? Your answer narrows the best multimodal AI model 2026 field immediately.

Step 2: Define your output requirement. Are you generating text, images, code, video, or structured data? The best multimodal AI model 2026 for input processing may not be the best for output generation.

Step 3: Set your budget. API users should note that Gemini is the most cost-efficient frontier option. For consumer plan users, a unified platform like Aizolo is the only rational choice for multi-model evaluation.

Step 4: Test with your actual prompts. Not benchmark prompts. Not general examples. Your specific workflow inputs. Use Aizolo’s side-by-side comparison to run Gemini vs. GPT vs. Claude on your real tasks.

Step 5: Reassess quarterly. The best multimodal AI model 2026 landscape is shifting monthly. Build flexibility into your stack — which is another reason a unified platform beats single-model lock-in.
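
Steps 1 and 2 can be turned into a first-pass shortlist. The Python sketch below encodes this article's own comparison table as a lookup; the category names and the simple count-based ranking are assumptions made for illustration, and the result is a starting point for Step 4, not a verdict.

```python
# Which models this article rates as a leader or strong option per input type.
# The mapping is a simplification of the head-to-head table, not benchmark data.
STRENGTHS = {
    "video": ["Gemini 3.1 Pro"],
    "audio": ["Gemini 3.1 Pro", "GPT-5.4"],
    "documents": ["Claude Opus 4.6", "Gemini 3.1 Pro", "GPT-5.4"],
    "code_and_screenshots": ["Claude Opus 4.6", "Gemini 3.1 Pro", "GPT-5.4"],
    "live_data": ["Grok 4"],
    "text_and_images": ["Claude Opus 4.6", "Gemini 3.1 Pro", "GPT-5.4", "Grok 4"],
}


def shortlist(input_types: list[str]) -> list[str]:
    """Rank models by how many of the requested input types they cover."""
    scores: dict[str, int] = {}
    for input_type in input_types:
        for model in STRENGTHS[input_type]:
            scores[model] = scores.get(model, 0) + 1
    # Highest coverage first; ties are broken alphabetically for stable output.
    return sorted(scores, key=lambda model: (-scores[model], model))


if __name__ == "__main__":
    print(shortlist(["video", "documents"]))
    print(shortlist(["live_data", "text_and_images"]))
```

Update the lookup when you reassess each quarter, since the ratings behind it will change.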

Start building smarter with Aizolo → chat.aizolo.com

The Verdict: Best Multimodal AI Model 2026 — Gemini vs Others

There is no single best multimodal AI model 2026 for every person and every use case. That’s not a cop-out — it’s the honest, benchmark-supported truth.

But here’s the clearest summary you’ll find:

- Gemini 3.1 Pro is the best multimodal AI model 2026 has produced for breadth of input types, video processing, reasoning benchmarks, and cost-at-scale. It’s the default recommendation for research-heavy, enterprise, and mixed-media workflows.

- GPT-5.4 is the best all-around model for general-purpose multimodal use with the strongest ecosystem reliability.

- Claude Opus 4.6 is the best multimodal AI model 2026 offers for document + code + vision depth — and the best choice for long-form analytical outputs.

- Grok 4 is the best for real-time multimodal research where freshness matters alongside visual inputs.

The smartest move in 2026 isn’t to pick one and commit. It’s to access all of them through a single platform, compare them on your real workflow, and make a decision based on data — not marketing.

That’s exactly what Aizolo was built for.

Conclusion: Stop Guessing. Start Comparing.

Rohan, our Hyderabad founder from the opening of this guide, eventually found his answer. Not by reading more benchmark articles.

By running Gemini and Claude side-by-side on his actual document processing workflow — and seeing, in real time, which model handled his specific PDF + screenshot combination more accurately.

He canceled three of his four subscriptions. Switched to Aizolo. And now runs all four frontier models (Gemini, GPT, Claude, and Grok) from a single dashboard, for less than a tenth of what he was spending.

The best multimodal AI model 2026 question doesn’t have to be expensive or confusing. It has to be practical.

Follow Aizolo for practical tech and startup insights → aizolo.com/blog

Read more expert guides on Aizolo — including deep dives into AI model comparisons, SaaS builder strategies, and multimodal workflow optimization.

The tools exist. The knowledge is available. The only question is whether you’ll test intelligently or guess expensively.

Start your free trial at Aizolo →
