The $110-a-Month Problem Nobody Talks About Honestly

It was a Tuesday evening in Pune when Rohan, a 26-year-old SaaS developer, sat down at his desk with four browser tabs open — ChatGPT, Claude, Gemini, and Grok — and a sinking feeling in his stomach.

He’d just checked his bank statement. Four separate AI subscriptions. $110 a month, gone, before he’d even launched a single feature. And the worst part? He still wasn’t sure which model was actually worth it. He’d been switching between them based on Reddit threads and YouTube thumbnails, not data.

Sound familiar?

This is the story of millions of builders, founders, freelancers, and marketers in 2026. Weighing AI model cost against performance is more complicated than ever, and most people are making expensive decisions based on incomplete information, outdated benchmarks, or marketing spin from the very companies they’re comparing.

This guide is different. We’re going to break down the real AI model cost vs performance comparison for 2026: what each model actually costs, what it actually delivers, where it genuinely excels, and how platforms like Aizolo are changing the game by giving you access to every premium AI model for a fraction of the price.

Let’s get into it.

Why the AI Model Cost vs Performance Comparison 2026 Is So Hard to Get Right

Before we compare numbers, let’s understand the problem clearly.

Most AI model cost vs performance comparisons do one of three things: they focus purely on API pricing (which doesn’t help individual users or small teams), cherry-pick benchmarks that favor a particular model, or go out of date within weeks because the AI landscape moves that fast.

Here’s what makes a fair comparison genuinely difficult in 2026:

- Models have different tiers. Claude Opus 4.6 and Claude Haiku 4.5 are wildly different in capability and price. Comparing “Claude” to “Gemini” without specifying the tier is meaningless.

- Benchmarks don’t always reflect real-world use. A model might score brilliantly on GPQA reasoning benchmarks but feel clunky for your specific workflow.

- Pricing is a moving target. In 2026, most major models have dropped API costs significantly compared to 2024, but consumer subscription pricing has stayed flat or gone up.

- The “right” model depends on what you’re building. A solo founder writing marketing copy has entirely different needs from a developer building an autonomous coding agent.

This is exactly why comparing AI models on cost and performance in 2026 requires nuance, and why tools like Aizolo exist. More on that in a moment.

The 2026 AI Landscape: Who’s Playing and What They Cost

Let’s establish the playing field. These are the major frontier models you’re most likely choosing between:

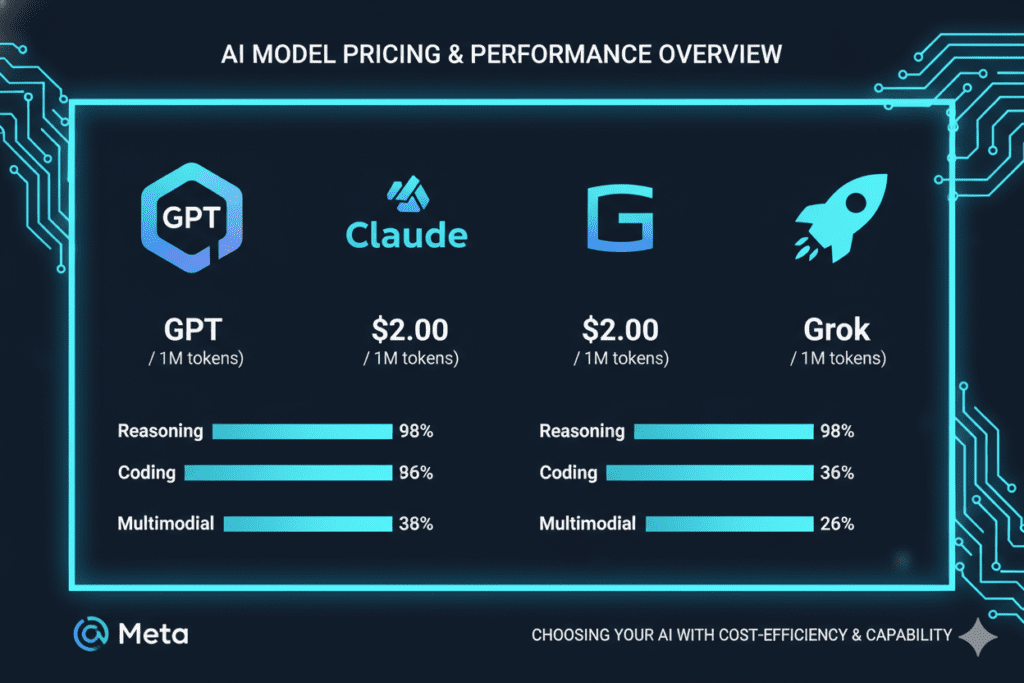

GPT-5.4 (OpenAI)

OpenAI’s flagship in 2026 is GPT-5.4 — a strong all-rounder that scores well across coding, reasoning, and creative writing. On SWE-bench (the real-world software engineering benchmark that matters most to developers), it hits around 74.9%. For consumer users, ChatGPT Plus is $20/month. For API access, you’re looking at roughly $2.50 per million input tokens and $15 per million output tokens.

GPT-5.4 is the safe, ecosystem-rich choice. It has the largest library of integrations, plugins, and community resources. But it’s not the cheapest, and it doesn’t dominate any single category.

Claude Opus 4.6 (Anthropic)

Anthropic’s Claude Opus 4.6 is the current leader in natural language output quality. It produces the most human-sounding, nuanced prose of any frontier model — a critical advantage for content creators, marketers, and anyone building AI-powered writing tools. It also hits 74%+ on SWE-bench and powers popular developer tools like Cursor and Windsurf.

The cost tradeoff is real: Claude Opus 4.6 sits at roughly $5 input / $25 output per million tokens on the API — making it the most expensive flagship tier. Consumer plans are $20/month for Pro. For heavy users, Claude Max plans go up to $200/month.

The lighter Claude Haiku 4.5 offers a budget-friendly alternative at around $1 input / $5 output per million tokens — excellent for high-volume tasks where you don’t need the full Opus-level reasoning.
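To make that price gap concrete, here is a minimal Python sketch of what happens when part of a workload is routed to Haiku instead of Opus. It uses the approximate per-million-token prices quoted above; the model keys are hypothetical labels (not official API identifiers) and the monthly token volumes are an invented example.

```python
# Approximate USD prices per million tokens, as quoted in this article.
# The dictionary keys are illustrative labels, not official model IDs.
PRICES = {
    "claude-opus-4.6": {"input": 5.00, "output": 25.00},
    "claude-haiku-4.5": {"input": 1.00, "output": 5.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of running a workload entirely on one model."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


def blended_cost(haiku_share: float, input_tokens: int, output_tokens: int) -> float:
    """Cost when `haiku_share` of the traffic goes to Haiku and the rest to Opus."""
    if not 0.0 <= haiku_share <= 1.0:
        raise ValueError("haiku_share must be between 0 and 1")
    cheap = request_cost("claude-haiku-4.5", input_tokens, output_tokens)
    premium = request_cost("claude-opus-4.6", input_tokens, output_tokens)
    return haiku_share * cheap + (1.0 - haiku_share) * premium


if __name__ == "__main__":
    # Hypothetical monthly workload: 10M input tokens and 2M output tokens.
    for share in (0.0, 0.5, 0.8, 1.0):
        cost = blended_cost(share, 10_000_000, 2_000_000)
        print(f"{share:.0%} of traffic on Haiku: ${cost:.2f}")
```

Under these assumptions the all-Opus bill is five times the all-Haiku bill, so even a partial shift of simple tasks to the lighter tier changes the total noticeably.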

Gemini 3.1 Pro (Google)

Google’s Gemini 3.1 Pro leads on two fronts: pure reasoning benchmarks (94.3% GPQA) and context window size (1 million tokens — unmatched in the industry). At $2 input / $12 output per million tokens, it’s also the most affordable flagship tier.

For developers working with massive codebases, or researchers who need to analyze entire document archives in a single pass, Gemini’s context window is genuinely a superpower. The budget-friendly Gemini Flash tiers make it the go-to choice for high-volume API usage where cost matters.
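As a rough illustration of the single-pass idea, the Python sketch below estimates whether a set of documents would fit in a 1 million token window and what the input side might cost at the $2 per million price quoted above. The four-characters-per-token ratio and the output reserve are assumptions for illustration only; a real tokenizer will give different counts.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # window size quoted in this article
INPUT_PRICE_PER_MILLION = 2.00     # USD, input price quoted in this article
CHARS_PER_TOKEN = 4                # ASSUMPTION: rough rule of thumb for English text


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_one_pass(
    documents: list[str], reserve_for_output: int = 8_000
) -> tuple[bool, int, float]:
    """Return (fits, estimated_input_tokens, estimated_input_cost_usd).

    `reserve_for_output` is a hypothetical allowance kept free for the reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    fits = total + reserve_for_output <= CONTEXT_WINDOW_TOKENS
    cost = total * INPUT_PRICE_PER_MILLION / 1_000_000
    return fits, total, cost


if __name__ == "__main__":
    # Two synthetic documents standing in for a real archive.
    docs = ["a" * 400_000, "b" * 1_200_000]
    fits, tokens, cost = fits_in_one_pass(docs)
    print(f"fits={fits}, about {tokens:,} tokens, about ${cost:.2f} of input")
```

A check like this is only a first filter: anything close to the limit should be measured with the provider's own token counter before relying on a single pass.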

Grok 4 (xAI)

Grok 4 is the dark horse of this comparison. It leads SWE-bench at 75%, a 0.1-point edge over GPT-5.4 that makes it technically the top-ranked model for coding. It also has real-time access to X/Twitter data, a unique advantage for marketers and researchers tracking live trends. SuperGrok costs $30/month standalone, with enterprise tiers going much higher.

DeepSeek V3 (Open Source)

No 2026 comparison is complete without mentioning DeepSeek V3. It’s open-source, can be self-hosted, and scores competitively on benchmarks. Through a hosted API it runs at roughly $0.27 input / $1.10 output per million tokens, a fraction of flagship pricing. For startups comfortable with infrastructure, it offers remarkable price-to-performance, but self-hosting adds operational complexity and comes with no commercial support.

The Real AI Model Cost vs Performance Comparison 2026: A Breakdown by Use Case

Here’s where the comparison gets practical. Instead of abstract benchmarks, let’s match models to real people.

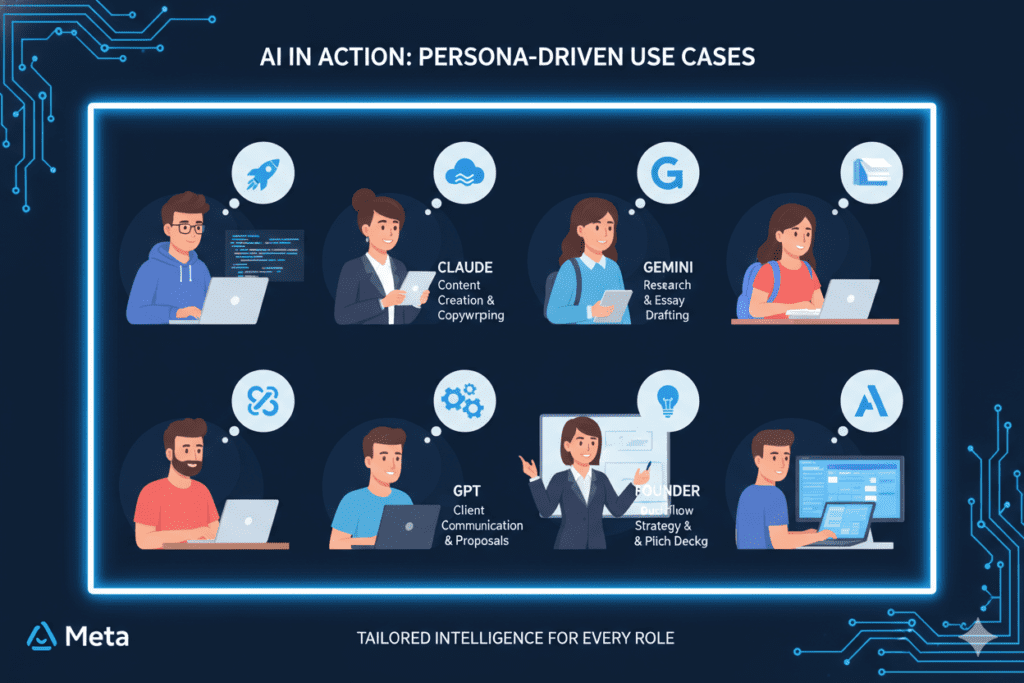

For Developers and SaaS Builders

If you’re writing code every day and need the highest benchmark accuracy, the numbers point to Grok 4 and Claude Opus 4.6 for complex tasks. Grok leads raw SWE-bench numbers; Claude dominates the developer tooling ecosystem (Cursor, Windsurf, Claude Code).

For cost-sensitive, high-volume tasks (automated testing, CI/CD pipelines, documentation generation), Gemini Flash tiers are hard to beat. Gemini 3.1 Flash delivers strong performance at roughly $0.50 input / $3 output per million tokens.

Real-world scenario: Arjun, a 28-year-old SaaS founder from Hyderabad, was spending $110/month juggling four separate tabs to test which model handled his Python debugging and API documentation best. After switching to Aizolo, he runs the same prompt across Claude, GPT, and Gemini simultaneously — side by side — and picks the best output in seconds. His monthly AI spend? $9.9.

Explore more insights on Aizolo about how developers are building smarter: aizolo.com/blog

For Marketers and Content Creators

For marketing work, the comparison tips heavily toward Claude. It produces the most natural, flowing prose, and its 128,000-token output window means it can generate full-length content assets in a single pass, something GPT and Gemini still struggle with for very long outputs.

That said, GPT-5.4’s Canvas editor is the best environment for collaborative content editing. If your workflow involves a lot of revision cycles and real-time editing rather than pure generation, GPT wins on UX.

Real-world scenario: Meera, a freelance content strategist in Mumbai, was spending three hours per article switching between Claude for drafting and ChatGPT for rewrites. On Aizolo’s side-by-side comparison view, she now runs her brief through both simultaneously, compares outputs, and cuts her drafting time in half.

For Founders and Product Teams

If you’re a founder weighing AI model cost against performance, you need a model for everything: strategy documents, investor decks, product specs, customer research, and email replies. No single model wins every task.

This is the comparison’s biggest insight: the answer isn’t one model, it’s the right model for each job.

GPT-5.4 for ecosystem and tool integration. Claude Opus 4.6 for nuanced long-form reasoning and writing. Gemini 3.1 Pro for research, analysis, and tasks requiring massive context. Grok 4 for real-time market intelligence and trend monitoring.
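The pairing above can be sketched as a tiny lookup table in Python. The task labels and the fallback choice are hypothetical names introduced here for illustration; the model assignments simply mirror the recommendations in this section.

```python
# Task-to-model routing that mirrors this section's recommendations.
# The task labels are hypothetical; pick whatever categories fit your workflow.
ROUTES = {
    "integrations": "GPT-5.4",
    "long_form_writing": "Claude Opus 4.6",
    "large_context_research": "Gemini 3.1 Pro",
    "realtime_trends": "Grok 4",
}

# ASSUMPTION: unknown tasks fall back to the model this article calls an all-rounder.
DEFAULT_MODEL = "GPT-5.4"


def pick_model(task_type: str) -> str:
    """Return the suggested model for a task label, or the default if unknown."""
    return ROUTES.get(task_type.strip().lower(), DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("long_form_writing", "realtime_trends", "email_reply"):
        print(f"{task}: {pick_model(task)}")
```

Keeping the mapping in one place makes it easy to revise as your own side-by-side tests show a different winner for a given job.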

The problem? Managing four subscriptions costs $90-$110/month and kills your productivity with constant context switching.

Aizolo solves this with a single $9.9/month subscription that gives you access to all of them — in one dashboard, side by side.

For Students and Freelancers

Budget is the primary constraint for most students and freelancers. The good news: there are more high-quality, affordable options than ever.

Claude Haiku 4.5 and Gemini Flash tiers are genuinely excellent for everyday tasks at a fraction of flagship pricing. For consumer subscriptions, the $20/month tiers (ChatGPT Plus, Claude Pro, and Google AI Pro) all offer competitive access to their mid-tier models.

But here’s the real budget play in 2026: if you’re a student or freelancer who needs multiple models for different tasks, Aizolo’s Pro plan at $9.9/month gives you access to all premium AI models, image generation, video generation, audio generation, and a smart prompt manager — for less than the cost of a single ChatGPT Plus subscription.

Learn from real-world experience at Aizolo: aizolo.com/blog

The Hidden Dimension Most AI Model Cost vs Performance Comparisons Miss

Here’s something the average AI model comparison doesn’t discuss: the cost of context switching.

Every time you copy a prompt from one tab, paste it into another, compare outputs manually, and try to synthesize which answer was better — you’re spending cognitive energy that doesn’t show up in any benchmark. But it adds up to hours every week for heavy AI users.

The models that score highest on benchmarks aren’t always the models that save you the most time in practice. Sometimes the biggest performance gain comes from eliminating friction — from having everything in one place.

The comparison starts to look different when you factor in the full workflow:

- Individual subscriptions to ChatGPT + Claude + Gemini + Grok = approximately $90–$110/month + hours of manual switching

- Aizolo’s All-in-One AI platform = $9.9/month with side-by-side comparisons, unified memory, prompt management, and access to 2,000+ AI tools
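The subscription arithmetic behind that comparison can be checked with a short Python sketch. It uses the consumer prices quoted earlier in this article ($20 each for ChatGPT Plus, Claude Pro, and the Gemini plan, $30 for SuperGrok) and works in whole cents to avoid floating-point rounding. Note that these four list prices land at the low end of the $90–$110 range; the article does not itemize the higher figure, so it is not modeled here.

```python
# Monthly consumer prices in cents, taken from the figures quoted in this article.
SUBSCRIPTIONS_CENTS = {
    "ChatGPT Plus": 2000,
    "Claude Pro": 2000,
    "Gemini consumer plan": 2000,
    "SuperGrok": 3000,
}
AIZOLO_PRO_CENTS = 990  # the $9.9/month plan quoted in this article


def monthly_total_cents(plans: dict[str, int]) -> int:
    """Sum of all monthly subscription prices, in cents."""
    return sum(plans.values())


def format_usd(cents: int) -> str:
    """Format a non-negative integer number of cents as a dollar string."""
    return f"${cents // 100}.{cents % 100:02d}"


if __name__ == "__main__":
    separate = monthly_total_cents(SUBSCRIPTIONS_CENTS)
    saving = separate - AIZOLO_PRO_CENTS
    print("Four separate plans:", format_usd(separate))
    print("Single plan:", format_usd(AIZOLO_PRO_CENTS))
    print("Monthly difference:", format_usd(saving))
    print("Yearly difference:", format_usd(saving * 12))
```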

A cost vs performance comparison isn’t just about which model scores better on GPQA. It’s about which setup actually makes you more productive and keeps you within budget.

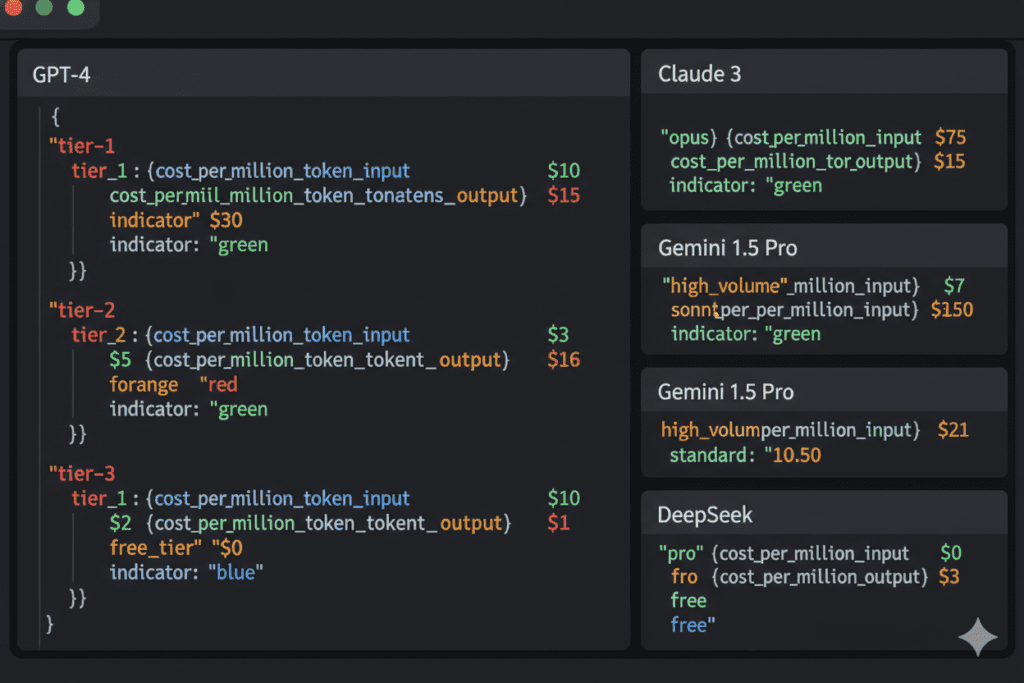

2026 API Pricing: The Full Picture for Developers Building on AI

For developers integrating AI via API, where model choice directly affects the monthly infrastructure bill, here’s the honest breakdown:

Budget tier (high volume, simple tasks):

- Gemini 2.5 Flash-Lite: ~$0.10 input / $0.40 output per million tokens

- GPT-4o Mini: ~$0.15 input / $0.60 output

- DeepSeek V3: ~$0.27 input / $1.10 output

- Gemini 3.1 Flash: ~$0.50 input / $3.00 output

- Claude Haiku 4.5: ~$1 input / $5 output

Mid-tier (balanced performance and cost):

- Gemini 3.1 Pro: ~$2 input / $12 output

- GPT-5.4: ~$2.50 input / $15 output

Premium tier (maximum capability):

- Claude Opus 4.6: ~$5 input / $25 output

- GPT-5.2 Pro (extended thinking): Up to $168 output per million tokens

The key insight from this pricing breakdown: for mission-critical tasks like legal reasoning, complex architecture decisions, or high-stakes content creation, the premium tier is worth it. For bulk processing, documentation, or anything high-volume and not time-sensitive, the budget tiers deliver excellent ROI.
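To turn the price list into a monthly estimate, here is a minimal Python sketch that ranks the listed models by cost for a given token volume. The prices are the approximate figures from the breakdown above; GPT-5.2 Pro is left out because only an upper bound on its output price is quoted. The example workload is hypothetical.

```python
# Approximate (input, output) USD prices per million tokens from the breakdown above.
PRICES_PER_MILLION = {
    "Gemini 2.5 Flash-Lite": (0.10, 0.40),
    "GPT-4o Mini": (0.15, 0.60),
    "DeepSeek V3": (0.27, 1.10),
    "Gemini 3.1 Flash": (0.50, 3.00),
    "Claude Haiku 4.5": (1.00, 5.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost for the given monthly token volumes on one model."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def rank_by_cost(input_tokens: int, output_tokens: int) -> list[tuple[str, float]]:
    """All listed models sorted from cheapest to most expensive for this workload."""
    costs = [
        (model, monthly_cost(model, input_tokens, output_tokens))
        for model in PRICES_PER_MILLION
    ]
    return sorted(costs, key=lambda item: item[1])


if __name__ == "__main__":
    # Hypothetical workload: 50M input tokens and 10M output tokens per month.
    for model, cost in rank_by_cost(50_000_000, 10_000_000):
        print(f"{model:<24} ${cost:>8.2f}")
```

Cost is only half of the comparison, so a ranking like this is best read next to the quality results from your own test prompts.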

Developers can also bring their own API keys to Aizolo — encrypted and stored securely — to get the best of both worlds: Aizolo’s unified interface and your own API pricing.

Start building smarter with Aizolo: aizolo.com

The Benchmark That Actually Matters in 2026: Real-World Performance

Benchmark scores are useful, but they’re not the full story. Here’s what real-world usage tells us in 2026:

For coding: Grok 4 leads SWE-bench at 75%, followed closely by GPT-5.4 at 74.9% and Claude Opus 4.6 at 74%+. In practice, Claude dominates actual developer workflows because of its ecosystem integration (Cursor, Windsurf) and superior reasoning in extended, multi-turn agent tasks.

For reasoning: Gemini 3.1 Pro leads pure benchmark scores at 94.3% GPQA. But Claude Opus 4.6 catches up quickly when real-world tool use is involved.

For writing and content: Claude is the clear winner. Its output reads more naturally than any other frontier model. GPT-5.4’s Canvas environment is excellent for editorial workflows.

For research with live data: Grok 4 (with real-time X access) and Perplexity (search-native) lead when you need current information.

For massive context tasks: Gemini 3.1 Pro’s 1 million token window is unmatched. No other frontier model comes close for analyzing large codebases or entire document libraries.

The conclusion: no single model wins everything. The most productive builders use different models for different tasks, and the most cost-effective way to do that is through a platform that gives you all of them.

Read more expert guides on Aizolo: aizolo.com/blog

How Aizolo Solves the AI Model Cost vs Performance Problem

Aizolo was built for exactly this moment in AI history, when the 2026 landscape is rich with options but the cost and complexity of accessing them all is a real barrier for individuals and small teams.

Here’s what Aizolo gives you for $9.9/month:

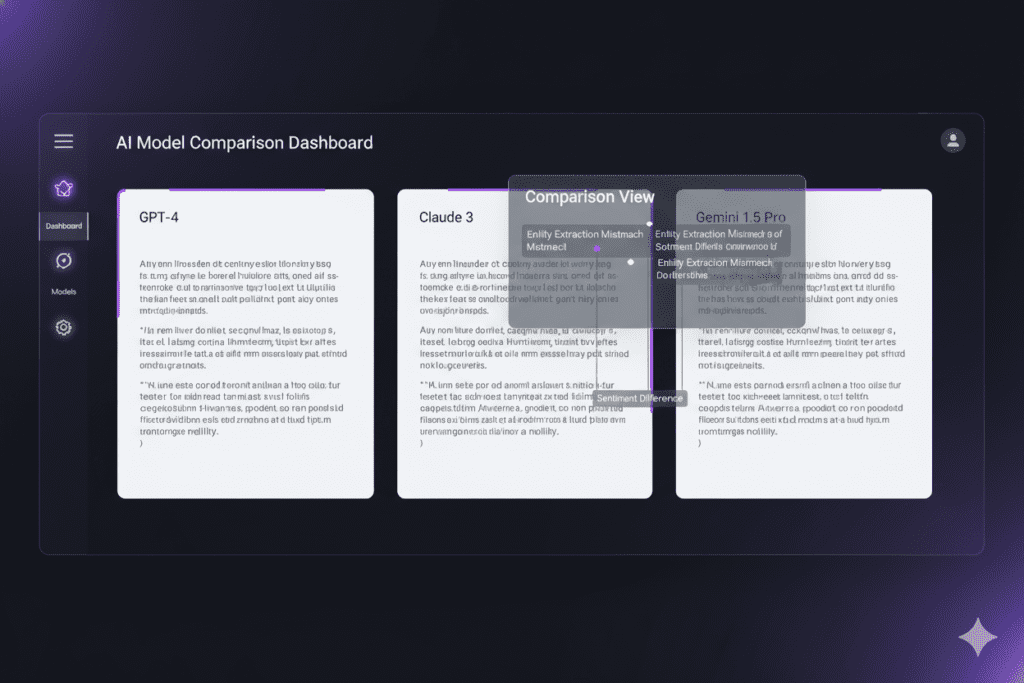

Side-by-side AI comparison. Run the same prompt across GPT, Claude, Gemini, and more simultaneously. See which model gives you the best answer for your specific task, with no tab switching and no manual copying.

Access to all premium models. Instead of paying $110/month across four subscriptions, you get one dashboard with access to every major frontier model.

AI image, video, and audio generation. Creative professionals get access to image generators, video generation, and voice synthesis — all in one subscription.

Smart Prompt Manager. Save and organize your best prompts across all models. Build a personal library of what actually works.

AI Memory. Context that persists. Your AI workspace remembers your preferences, past conversations, and project context — so you don’t start from zero every time.

Custom API keys support. Bring your own API keys (encrypted) for unlimited token usage on your own billing. Aizolo’s interface, your API budget.

Import from ChatGPT or Claude. Migrate your existing conversation history with one click.

For any serious user weighing AI model cost against performance in 2026, the math is simple. One Aizolo subscription replaces four separate ones and adds comparison features that none of those individual subscriptions offer.


What the AI Model Cost vs Performance Comparison 2026 Tells Us About the Future

The AI model cost vs performance comparison 2026 isn’t a static picture. It’s a signal about where things are heading.

Costs are converging. The premium tier gap is narrowing. Models that cost $0.002 per 1,000 tokens in 2023 (about $2 per million) are now at $0.0001 per 1,000 tokens (about $0.10 per million) or less for budget tiers. As inference costs drop, more capability becomes accessible to smaller teams and individual builders.
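Because older prices were usually quoted per 1,000 tokens and current ones per million, the size of that drop is easy to misread. The Python sketch below converts both figures from the paragraph above to the same unit and computes the ratio; the function name is an illustrative choice.

```python
def per_thousand_to_per_million(price_per_1k: float) -> float:
    """Convert a USD price per 1,000 tokens to a USD price per million tokens."""
    return price_per_1k * 1_000


if __name__ == "__main__":
    price_2023 = per_thousand_to_per_million(0.002)    # figure quoted for 2023
    price_2026 = per_thousand_to_per_million(0.0001)   # budget-tier figure quoted for 2026
    reduction = price_2023 / price_2026
    print(f"2023: ${price_2023:.2f} per million tokens")
    print(f"2026: ${price_2026:.2f} per million tokens")
    print(f"Reduction: about {reduction:.0f}x")
```

On the article's own numbers that is roughly a twentyfold drop, which matches the budget-tier input price of about $0.10 per million tokens listed earlier.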

Specialization is winning. The 2026 AI model cost vs performance comparison shows that no model leads across all categories. This trend will continue. Expect more specialized models for coding, reasoning, creative work, and real-time data — and more importance placed on knowing which tool to reach for.

The interface layer matters more. As underlying model performance converges, the platform experience — how you access, compare, organize, and switch between models — becomes the real differentiator. This is exactly the problem Aizolo is built to solve.

Open-source is closing the gap. Models like DeepSeek V3 and Meta’s Llama 4 are delivering competitive performance at zero API cost for those willing to self-host. The 2026 comparison has a new axis, proprietary vs open-weight, and the gap is narrower than it’s ever been.

Conclusion: Stop Guessing, Start Comparing

The 2026 AI model cost vs performance comparison has one clear takeaway: the best model is the one you’ve actually tested against your specific task, at a price that doesn’t break your budget.

Every model in this guide has genuine strengths. GPT-5.4 is the ecosystem king. Claude Opus 4.6 writes the most natural prose and excels in agentic coding. Gemini 3.1 Pro leads reasoning benchmarks and context length. Grok 4 leads SWE-bench and has real-time data access. Budget tiers from Gemini and Claude make high-volume AI integration accessible to everyone.

But the comparison also reveals the trap: if you’re paying full price for each of these separately, you’re spending $90–$110/month and losing hours every week to tab-switching and manual comparison.

Aizolo gives you the full comparison experience in practice: not a spreadsheet you read once, but a live workspace where you run prompts side by side and pick the winner for every task.

For $9.9/month.

That’s the answer to the AI model cost vs performance question in 2026: not a single best model, but a smarter way to access all of them.

Start building smarter with Aizolo → aizolo.com

Read more expert guides on Aizolo → aizolo.com/blog
