The $110 Problem and the Speed Trap Nobody Talks About

It was a Monday morning in Hyderabad. Arjun, a 27-year-old SaaS founder, had a deadline in four hours. His client needed a 40-page legal document summarized, a pitch deck drafted, and three API integration scripts debugged — all before lunch.

He opened ChatGPT. Pasted the document. Waited.

Then opened Claude in another tab. Same document. Waited.

Then Gemini. Grok. Each one in a different browser tab, paying separate subscriptions, getting wildly different response times and quality levels.

By the time he had his answers, the deadline was ninety minutes away.

Arjun’s problem wasn’t that AI was slow. His problem was that he had no idea which AI model was actually fastest for his specific task, and he was paying over ₹9,000 a month to stay confused.

Sound familiar?

If you’re a developer, founder, freelancer, or marketer trying to work out which AI model is fastest in 2026, you’re not alone. We have more AI models than ever, and more confusion than ever about which one to actually use.

This guide cuts through the noise. We’ll break down every major model’s speed, use cases, pricing, and real-world performance — and show you exactly how platforms like Aizolo make the fastest AI model 2026 comparison not just possible, but effortless.

Let’s go.

Why Speed Matters More Than Ever in 2026

When we talk about the fastest AI model 2026, we’re not just talking about who wins a benchmark race. Speed in AI has two very distinct dimensions — and confusing them leads to bad decisions.

1. Time to First Token (TTFT): How long before the model starts responding. For chat apps, customer-facing tools, and interactive products, TTFT under one second feels instant. Over three seconds? Users start refreshing the page.

2. Tokens Per Second (tok/s): How fast the model generates its full output once it starts. A model at 200 tokens per second produces roughly 150 words per second — fast enough for real-time streaming. Models below 50 tokens per second may feel sluggish in interactive applications.
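The two metrics above can be measured from any streaming response. The sketch below is a minimal illustration, not a vendor benchmark: `fake_stream` is a hypothetical stand-in for a real streaming API, and the 0.75 words-per-token ratio is simply the one implied by the 200 tokens to 150 words figure above.

```python
import time
from typing import Iterable, Iterator


def fake_stream(n_tokens: int, first_delay: float, per_token: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response."""
    time.sleep(first_delay)            # wait before the first token arrives
    for i in range(n_tokens):
        if i:
            time.sleep(per_token)      # gap between subsequent tokens
        yield f"tok{i}"


def measure_stream(stream: Iterable[str]) -> dict:
    """Return TTFT in seconds and generation throughput in tokens/second."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in stream:
        if first is None:
            first = time.perf_counter()   # moment the first token arrived
        count += 1
    end = time.perf_counter()
    if first is None:
        raise ValueError("stream produced no tokens")
    gen_time = end - first
    return {
        "ttft_s": first - start,
        "tokens": count,
        # Throughput is counted after the first token, so TTFT is excluded.
        "tok_per_s": (count - 1) / gen_time if gen_time > 0 else float("inf"),
    }


def words_per_second(tok_per_s: float, words_per_token: float = 0.75) -> float:
    """Convert token throughput to approximate words per second."""
    return tok_per_s * words_per_token


if __name__ == "__main__":
    print(measure_stream(fake_stream(20, first_delay=0.3, per_token=0.01)))
    print(words_per_second(200))
```

Keeping the two numbers separate matters: a model can start quickly and then stream slowly, or the reverse, and a single "total time" figure hides which one you are paying for.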

Here’s the twist: reasoning models — the ones that think deeply before answering — are often the “slowest” by raw speed metrics, yet they solve harder problems more accurately. Reasoning models like o3, GPT-5, and Gemini Deep Think use chain-of-thought processing, generating internal “thinking” tokens before producing the final answer. This adds significant TTFT latency — often 10 to 150 seconds — but can dramatically improve accuracy on complex tasks.
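To see why a long TTFT dominates the experience, total response time can be approximated as TTFT plus output length divided by throughput. The sketch below assumes that simple model; the function name and the example numbers are illustrative only and are not measurements of any specific model.

```python
def response_time_s(ttft_s: float, output_tokens: int, tok_per_s: float) -> float:
    """Approximate end-to-end time: wait for the first token, then stream the rest."""
    if tok_per_s <= 0:
        raise ValueError("tok_per_s must be positive")
    return ttft_s + output_tokens / tok_per_s


# Hypothetical fast chat model: 0.5 s TTFT, 200 tok/s, 600-token answer.
fast = response_time_s(0.5, 600, 200)        # 0.5 + 3.0 = 3.5 seconds

# Hypothetical reasoning model: 30 s of thinking before the first token, 100 tok/s.
reasoning = response_time_s(30.0, 600, 100)  # 30.0 + 6.0 = 36.0 seconds

print(fast, reasoning)
```

Under these assumed numbers the reasoning model spends most of its time before the first visible token, which is why it suits batch or high-stakes work better than interactive chat.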

So when someone asks you, “Which is the fastest AI model in 2026?”, the honest answer is: it depends entirely on what you’re trying to do.

That’s exactly the insight most speed comparison guides miss, and exactly what we’re going to fix right now.

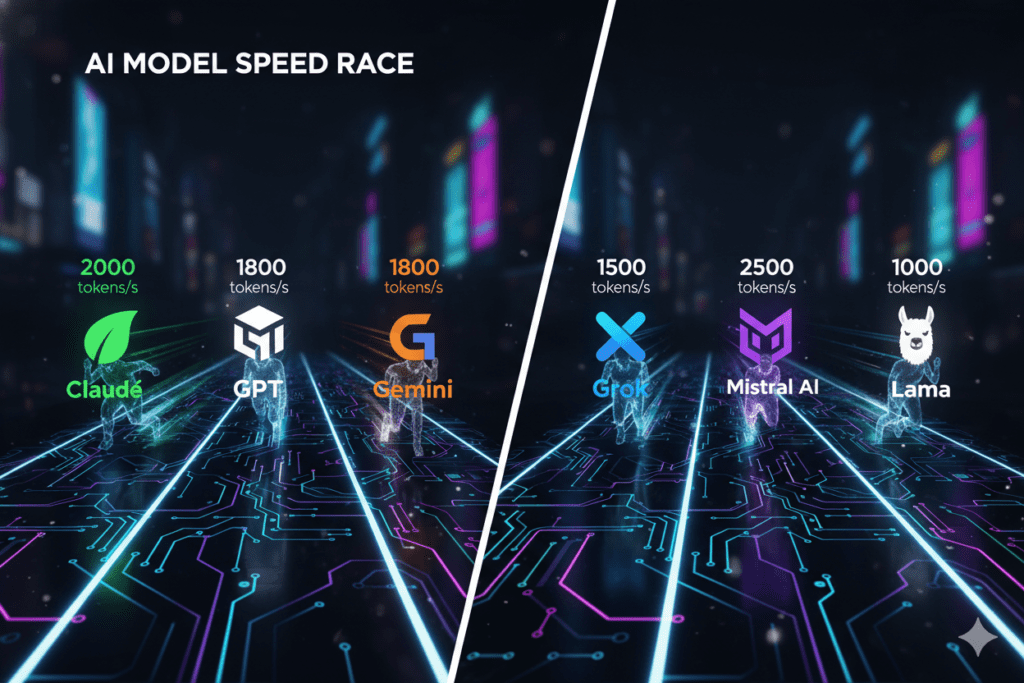

The 2026 AI Speed Landscape: Who’s Actually Winning?

The AI landscape in 2026 is more crowded, capable, and nuanced than ever. Here’s the honest breakdown of the fastest AI model 2026 comparison, segmented by what actually matters.

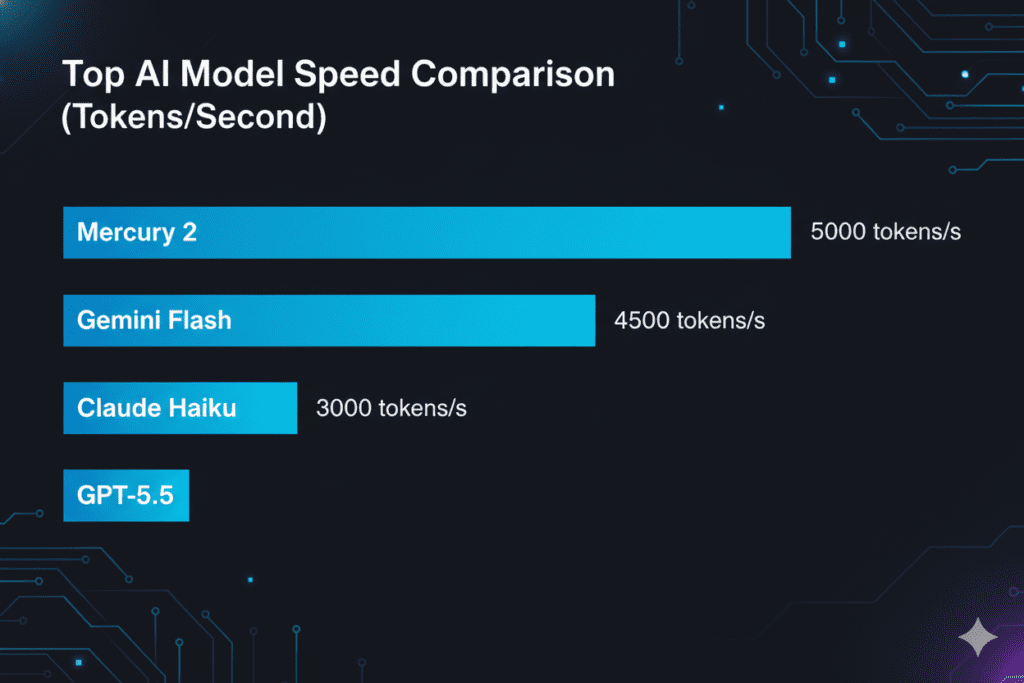

Raw Speed Champions: The Throughput Kings

If you need sheer output velocity — think high-volume content pipelines, automated workflows, or real-time data processing — raw tokens-per-second is your metric.

Mercury 2 at 782 tokens per second and Granite 4.0 H Small at 363 tokens per second are the fastest models in 2026, followed by Granite 3.3 8B and Gemini 3.1 Flash-Lite Preview.

These aren’t household names, but for engineering teams running high-volume inference, they represent a different class of speed entirely.

ServiceNow’s Apriel-v1.5-15B-Thinker achieves the lowest latency at just 0.18 seconds to first token, followed by NVIDIA’s Llama Nemotron Super 49B v1.5 at 0.23 seconds — with sub-0.25-second TTFT representing a meaningful user experience threshold.

For most teams, though, the real fastest AI model 2026 comparison happens at the frontier — between GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro.

Frontier Model Speed: The Three-Way Race

OpenAI’s GPT-5.5, Anthropic’s Claude Opus 4.7, and Google’s Gemini 3.1 Pro all launched within weeks of each other in April 2026, and the benchmark wars are finally settling. No single model dominates every category; each flagship leads in a different lane.

Here’s how the fastest AI model 2026 comparison shakes out at the frontier:

GPT-5.5 — The Agentic Speed King

GPT-5.5 pulls ahead on agentic workflows, research tasks, and terminal automation. Terminal-Bench 2.0 at 82.7% is GPT-5.5’s most decisive win, testing real command-line workflows including planning, iteration, and tool coordination. GPT-5.4’s previous score was 75.1%, while Claude Opus 4.7 sits at 69.4%.

GPT-5.5 has an edge in raw speed and tool call efficiency. If you’re running high-volume agentic pipelines where latency and token cost matter, GPT-5.5 tends to be cheaper to operate.

Claude Opus 4.7 — The Coding Precision Champion

Claude Opus 4.7 dominates software engineering benchmarks and tool orchestration, with moderate inference speed. The premium pricing reflects its coding depth and tool orchestration capabilities.

Claude Opus 4.7 produces more careful output with better handling of edge cases and uncertainty. For high-stakes code where correctness and reviewability matter more than speed, Opus 4.7 is typically the better choice.

Gemini 3.1 Pro — The Fast and Affordable Powerhouse

Gemini 3.1 Pro is the budget leader: at $2 per million input tokens and $12 per million output tokens, it is less than half the cost of GPT-5.5 on output. It is also very fast, which matters if you are running high-volume analytics or need real-time responses. The two-million-plus-token context window is the largest of the three, useful for long documents or multi-file codebases.

In the fastest AI model 2026 comparison, Gemini 3.1 Pro wins the speed-per-dollar race at the frontier tier.

Claude Haiku 4.5 — The Hidden Speed Weapon

Most 2026 speed comparison guides ignore this one. Big mistake.

Claude Haiku 4.5 offers the fastest response times in the Claude family, ideal for simple tasks and high-volume processing, at just one dollar per million input tokens and five dollars per million output tokens.

For startups and SaaS builders who need fast, reliable, affordable AI inference at scale — Haiku 4.5 is often the smartest pick.

The Real Problem: No One Is Picking the Right Model

Here’s the truth about AI model speed in 2026 that nobody wants to hear:

Most people — developers, founders, marketers, students — are using the wrong model for their task. Not because they’re uninformed, but because:

- They subscribed to one AI tool and stuck with it

- They don’t have a way to test models side by side in real time

- They’re paying $110+ a month across multiple subscriptions and still guessing

There is no single best model — there is the best model for your specific combination of intelligence requirements, latency tolerance, volume, and budget.

This is where most 2026 speed comparison guides end. And it’s exactly where Aizolo begins.

How Aizolo Solves the Fastest AI Model Problem — For Real

Aizolo is an all-in-one AI platform built for exactly this moment — the moment when you realize that picking the fastest AI model isn’t a one-time decision. It’s a daily, task-by-task judgment call.

Instead of maintaining five separate subscriptions and switching tabs all day (like Arjun was doing), Aizolo puts every major AI model — GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, Grok, Perplexity Sonar Pro, and more — in a single dashboard for just $9.90/month.

More importantly, it gives you the tool that actually makes the fastest AI model 2026 comparison actionable: side-by-side comparison mode.

You type your prompt once. All your models respond simultaneously. You see which one is fastest for your use case, which gives the most accurate answer, and which you should trust for that specific task.

That’s not a feature. That’s a workflow transformation.

What Aizolo Includes:

- Unlimited AI comparisons across all premium models

- Access to 3,000,000 tokens per month

- AI image, video, and audio generation

- Smart Prompt Manager to save and reuse your best prompts

- AI Memory that retains your preferences and context

- Custom API key support (encrypted) for unlimited personal usage

- Import your existing ChatGPT or Claude chat history

And yes — 2,000+ additional AI tools, with new ones added weekly.

Start building smarter with Aizolo →

Real-World Use Cases: Who Needs the Fastest AI Model in 2026?

Let’s get specific. The fastest AI model 2026 comparison looks different depending on who you are.

For Founders and SaaS Builders

You’re running lean. Every minute of latency in your AI-powered product costs you user experience points. You need the fastest AI model that won’t break your budget at scale.

Best for most use cases: Gemini 3.1 Flash or Claude Haiku 4.5 for high-volume inference. GPT-5.5 for agentic workflows. Claude Opus 4.7 for complex reasoning tasks that require precision.

With Aizolo, founders can test all of these against their exact prompts before committing to any model in their product stack. The most productive developers in 2026 aren’t choosing one model; they’re using the right model for each task.

For Developers

You’re debugging at 11 PM and need a model that responds quickly and gets the code right. Speed matters, but a wrong answer that compiles is worse than a slow answer that’s correct.

As of early 2026, Gemini 3.1 Pro Preview leads SWE-bench at 78.80%, with Claude Opus 4.6 Thinking and GPT-5.4 both at 78.20%. The differences are real but narrow — which means the fastest AI model 2026 comparison for developers often comes down to workflow fit, not raw benchmark position.

Claude Sonnet 4.6 is the sweet spot: fast, smart, and priced for professional daily use.

For Marketers and Content Creators

You don’t need graduate-level reasoning. You need volume, creativity, and speed. For drafting ad copy, blog outlines, email sequences, and social posts at scale — the fastest AI model is the one that gets you from brief to output fastest.

For this use case, Gemini 3.1 Flash and GPT-5 Nano are the winners: low cost, high throughput, strong creative output.

For Students and Researchers

You’re working with long documents — papers, textbooks, lecture transcripts. You need a model with a large context window that can synthesize quickly.

Gemini 3.1 Pro is the only model with native multimodal input supporting text, image, audio, and video in a single model. For academic research, that versatility combined with its massive context window makes it a top contender in the fastest AI model 2026 comparison for this audience.

For Freelancers

You’re billing by the hour. Your clients don’t care which AI you use; they care how fast you deliver. A freelancer who uses the wrong AI for the wrong task wastes time. A freelancer who knows which model is fastest for each kind of task wins more projects.

Aizolo’s side-by-side comparison lets you test prompts before client calls, so you always show up with the most accurate, fastest answer. That’s a competitive edge most freelancers haven’t discovered yet.

Explore more insights on Aizolo →

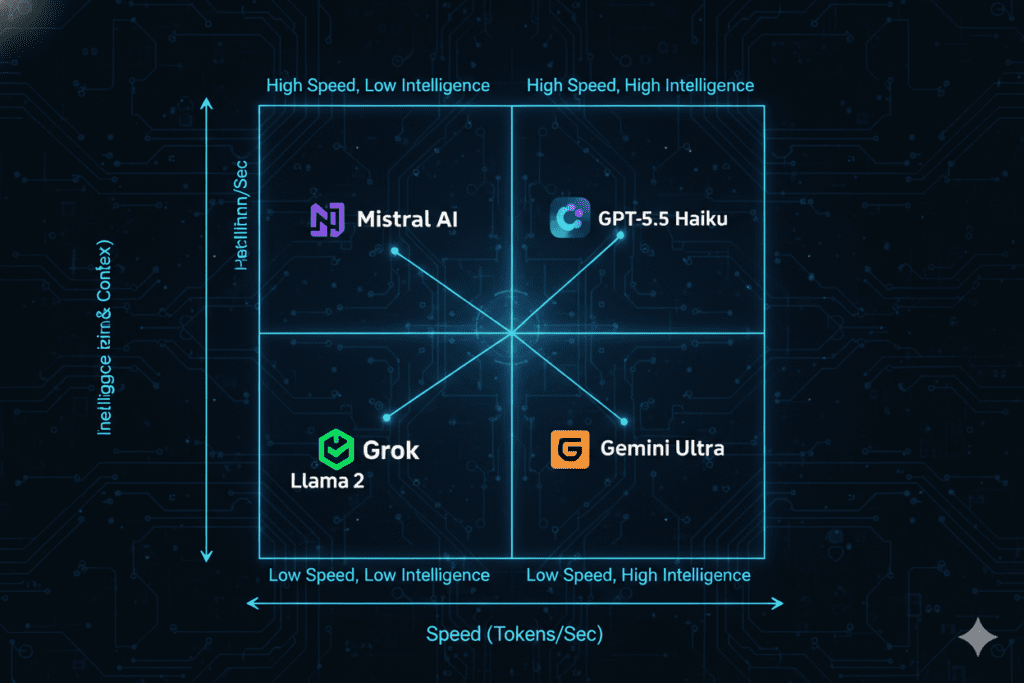

The Speed vs. Intelligence Trade-Off: What No One Explains Clearly

One of the most important insights in any honest fastest AI model 2026 comparison is this: speed and intelligence are on a spectrum, not a binary.

Here’s how to think about it:

High Speed, Lighter Intelligence: Gemini 3.1 Flash, Claude Haiku 4.5, GPT-5 Nano. These models respond in fractions of a second and handle most everyday tasks well. Perfect for real-time apps, customer support bots, content pipelines.

Balanced Speed and Intelligence: Claude Sonnet 4.6, Gemini 3.1 Pro. The workhorses. Fast enough for professional daily use, smart enough for complex tasks. The right pick for most developers and founders.

High Intelligence, Slower Processing: Claude Opus 4.7, GPT-5.5 (extended thinking), Gemini 3.1 Pro Deep Think. These models take longer — sometimes much longer — but they solve harder problems more reliably. The era of one-size-fits-all models is over. The benchmarks show tight competition, with leads measured in single-digit percentage points.

The fastest AI model 2026 comparison, done right, means matching your task’s complexity to the right tier — not defaulting to the most famous name.
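One way to make the tier matching concrete is a small routing rule. This is a sketch under stated assumptions: the tier membership is copied from the three tiers above, while the 1 to 5 complexity scale, the `pick_tier` function, and the 10-second cutoff (taken from the lower end of the reasoning-model TTFT range mentioned earlier) are illustrative choices, not a published recommendation.

```python
# Tier membership as listed in this article; the routing rule is an assumption.
TIERS = {
    "fast": ["Gemini 3.1 Flash", "Claude Haiku 4.5", "GPT-5 Nano"],
    "balanced": ["Claude Sonnet 4.6", "Gemini 3.1 Pro"],
    "deep": ["Claude Opus 4.7", "GPT-5.5 (extended thinking)", "Gemini 3.1 Pro Deep Think"],
}


def pick_tier(complexity: int, latency_budget_s: float) -> str:
    """Pick a tier from task complexity (1 = routine, 5 = hard) and a latency budget."""
    if not 1 <= complexity <= 5:
        raise ValueError("complexity must be between 1 and 5")
    if complexity <= 2:
        return "fast"
    # Deep reasoning only pays off if the caller can wait for it.
    if complexity >= 4 and latency_budget_s >= 10:
        return "deep"
    return "balanced"


if __name__ == "__main__":
    tier = pick_tier(complexity=5, latency_budget_s=120)
    print(tier, TIERS[tier])
```

The useful part is less the exact thresholds than the habit of deciding the tier before reaching for a model name.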

Pricing in the Fastest AI Model 2026 Comparison

Speed means nothing if the cost is unsustainable. Here’s the honest fastest AI model 2026 comparison across pricing tiers:

Premium Tier (Deep Reasoning, Slower Speed):

GPT-5.5 sits at $5 per million input tokens and $30 per million output tokens at current pricing. Claude Opus 4.7 is priced at $5 per million input tokens and $25 per million output tokens.

Mid Tier (Balanced Speed and Intelligence):

Claude Sonnet 4.5 is priced at $3 per million input tokens and $15 per million output tokens, representing the best balance of intelligence, speed, and cost.

Speed Tier (Maximum Throughput, Lowest Cost):

Gemini 3.1 Pro is available at $2 per million input tokens and $12 per million output tokens. Claude Haiku 4.5 is priced at just $1 per million input tokens and $5 per million output tokens, ideal for simple tasks and high-volume processing.
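Per-million-token prices are easier to compare once converted to a cost per request. The sketch below uses only the prices quoted in this section; the `request_cost` helper and the 10,000-input, 2,000-output example request are assumptions for illustration, and real bills may differ with caching, batching, or pricing changes.

```python
# (input USD, output USD) per million tokens, as quoted in this article.
PRICES = {
    "GPT-5.5": (5, 30),
    "Claude Opus 4.7": (5, 25),
    "Claude Sonnet 4.5": (3, 15),
    "Gemini 3.1 Pro": (2, 12),
    "Claude Haiku 4.5": (1, 5),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the quoted per-million-token prices."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical request: 10,000 input tokens and 2,000 output tokens.
    for name in PRICES:
        print(f"{name}: ${request_cost(name, 10_000, 2_000):.3f}")
```

Multiplying the per-request figure by expected daily volume gives a quick sanity check on whether a premium model is affordable for a given workload.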

For individuals and small teams, paying API rates across all these models adds up fast. That’s exactly why Aizolo’s $9.90/month flat rate — with access to all these models and 3,000,000 tokens — represents extraordinary value in the fastest AI model 2026 comparison landscape.

How to Do Your Own Fastest AI Model 2026 Comparison (The Right Way)

Most people test AI models wrong. They paste the same generic prompt into each tool and judge by gut feel. Here’s a smarter process — and how Aizolo makes it effortless:

Step 1: Define your primary task type. Are you doing creative writing, coding, data analysis, or research? Each favors a different model.

Step 2: Set your speed baseline. For your use case, is TTFT more important (interactive chat) or throughput (batch processing)?

Step 3: Run the same prompt across multiple models simultaneously. This is where Aizolo’s side-by-side mode shines — you get all responses in one view, eliminating the tab-switching madness that wastes hours every week.

Step 4: Evaluate on accuracy, not just speed. The fastest AI model 2026 comparison only matters if the fast model is also correct for your use case.

Step 5: Track your results and build model preferences. Aizolo’s Smart Prompt Manager lets you save your best prompts with notes on which model performs best — so over time, you build institutional knowledge about your fastest AI model stack.
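For readers who want to script the steps above, here is a minimal sketch of a comparison harness. It is not how Aizolo works internally: `make_fake_model` is a hypothetical placeholder for real API clients, and the `is_correct` check is whatever accuracy test fits your task. It sends one prompt to every model concurrently, times each response, and ranks correct answers ahead of fast ones.

```python
import asyncio
import time
from dataclasses import dataclass
from typing import Awaitable, Callable, Dict, List

ModelFn = Callable[[str], Awaitable[str]]


@dataclass
class Result:
    model: str
    seconds: float
    correct: bool
    output: str


async def timed_call(name: str, fn: ModelFn, prompt: str,
                     is_correct: Callable[[str], bool]) -> Result:
    """Call one model and record how long the full response took."""
    start = time.perf_counter()
    output = await fn(prompt)
    elapsed = time.perf_counter() - start
    return Result(name, elapsed, is_correct(output), output)


async def compare(models: Dict[str, ModelFn], prompt: str,
                  is_correct: Callable[[str], bool]) -> List[Result]:
    """Run the same prompt on all models at once (Step 3) and rank them (Step 4)."""
    results = await asyncio.gather(
        *(timed_call(name, fn, prompt, is_correct) for name, fn in models.items())
    )
    # Correct answers first, then fastest: accuracy outranks speed.
    return sorted(results, key=lambda r: (not r.correct, r.seconds))


def make_fake_model(delay_s: float, answer: str) -> ModelFn:
    """Hypothetical model stub; swap in a real API client here."""
    async def call(prompt: str) -> str:
        await asyncio.sleep(delay_s)
        return answer
    return call


if __name__ == "__main__":
    models = {
        "fast-but-wrong": make_fake_model(0.01, "5"),
        "slow-but-right": make_fake_model(0.05, "4"),
    }
    ranked = asyncio.run(compare(models, "What is 2 + 2?", lambda out: out.strip() == "4"))
    for r in ranked:
        print(f"{r.model}: {r.seconds:.3f}s correct={r.correct}")
```

Sorting on correctness before speed encodes Step 4 directly: a fast wrong answer never outranks a slower correct one.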

Learn from real-world experience at Aizolo →

What the Benchmarks Don’t Tell You

No fastest AI model 2026 comparison is complete without this warning: benchmarks measure what labs want to measure, not necessarily what you need.

The core takeaway is straightforward: there is no single best model — there is the best model for your specific combination of intelligence requirements, latency tolerance, volume, and budget.

A model that scores highest on GPQA Diamond (PhD-level science) may be terrible at writing B2B email copy. A model that leads SWE-bench (real GitHub issue resolution) may struggle with nuanced customer sentiment analysis.

The best speed comparison is always personal. It’s the one you run against your actual prompts, your actual tasks, and your actual workflows.

That’s a principle Aizolo is built on. Every feature — side-by-side comparison, AI memory, prompt manager — exists to help you discover your fastest AI model, not rely on someone else’s benchmark opinion.

Read more expert guides on Aizolo →

The Fastest AI Model 2026 Comparison: Quick Reference Guide

Here’s a practical summary for quick reference:

Mercury 2 — Raw speed champion at 782 tokens/second. Best for engineering teams running high-volume inference pipelines.

Gemini 3.1 Flash — Frontier-level fast model. Best for content creators, marketers, real-time apps, and cost-sensitive founders.

Claude Haiku 4.5 — Fastest Claude tier. Best for SaaS builders needing speed + reliability + Anthropic’s safety standards.

Claude Sonnet 4.6 — The daily driver for developers and professionals. Best balance of speed, intelligence, and price.

GPT-5.5 — Fastest at agentic workflows. Best for DevOps automation, multi-tool pipelines, and research tasks.

Claude Opus 4.7 — Fastest for getting code right the first time. Best for senior developers and complex enterprise tasks.

Gemini 3.1 Pro — Fastest frontier model for multimodal tasks. Best for document analysis, research, and high-volume workloads.

Why Aizolo Is the Smartest Way to Navigate the Fastest AI Model 2026 Comparison

Let’s come back to Arjun for a moment.

After that chaotic Monday morning, he started using Aizolo. Now, instead of juggling five tabs and five subscriptions, he opens one dashboard. He types his prompt once. He sees which model responds fastest and most accurately for that specific task.

He uses Gemini 3.1 Flash for the legal document summaries — large context window, fast, cheap. Claude Opus 4.7 for the complex API debugging — precision matters more than speed there. GPT-5.5 for the agentic pitch deck workflow — it orchestrates multiple steps without hand-holding.

He went from $110/month and four hours of stress to $9.90/month and a 45-minute workflow.

That’s what a real fastest AI model 2026 comparison looks like in practice.

The AI models are getting faster every month. New benchmarks are published weekly. The landscape shifts constantly — and it will keep shifting. What won’t change is the value of having one place to run your fastest AI model 2026 comparison honestly, practically, and affordably.

That’s what Aizolo is built for.

Trusted by 5,000+ AI enthusiasts. 10+ premium AI models. One $9.90/month subscription.

Follow Aizolo for practical tech and startup insights →

Conclusion: Stop Guessing. Start Comparing.

The fastest AI model 2026 comparison isn’t a one-time exercise. It’s an ongoing practice — because the models keep improving, your tasks keep evolving, and the right answer today might be different in sixty days.

What matters right now:

- Fastest raw throughput: Mercury 2 and Gemini 3.1 Flash

- Fastest for coding precision: Claude Opus 4.7

- Fastest for agentic pipelines: GPT-5.5

- Fastest for balanced daily use: Claude Sonnet 4.6 and Gemini 3.1 Pro

- Fastest for budget-conscious volume: Claude Haiku 4.5

And the fastest way to find your own answer? Run the comparison yourself, on your prompts, your tasks, your workflows.

Aizolo makes that possible for less than the cost of a single premium AI subscription.

Start building smarter with Aizolo — Try for free →
