
It was a Tuesday evening in Hyderabad. Nisha, a 29-year-old SaaS product manager, had three browser tabs open: ChatGPT, Claude, and Gemini. She was copy-pasting the same product brief into each one — for the third time that week — trying to figure out which AI actually gave her the better output. She was paying nearly ₹9,000 a month across three subscriptions. And she still wasn’t sure which AI model to trust for which task.

Sound familiar?

You’re not alone. In 2026, with over 300 active AI models evaluated by platforms like Artificial Analysis and Vellum’s LLM Leaderboard, the real problem isn’t access to AI. It’s clarity. Choosing the right model for the right task without a reliable AI model comparison table is like navigating a city without a map.

This guide is that map.

At Aizolo, we’ve helped thousands of founders, developers, marketers, freelancers, and students cut through the noise. This is the most practical, human-first AI model comparison table you’ll find. It is built not just on benchmark scores, but on real-world use cases, pricing context, and the kind of nuanced insight that actually saves you time and money.

Why an AI Model Comparison Table Is Non-Negotiable in 2026

Here’s the uncomfortable truth: there is no single “best” AI model anymore.

In 2024, you could say “just use ChatGPT” and be mostly right. In 2026, that answer is lazy and expensive. The frontier has fractured into specialization. GPT-5.4 leads for structured, long-form documents. Claude Opus 4.6 dominates for coding and nuanced reasoning. Gemini 3.1 Pro rules scientific research and multimodal tasks. Grok 4 is the only frontier model with real-time access to web and social data.

Picking wrong costs you hours. Picking right, guided by a solid AI model comparison table, builds a competitive edge.

Without an AI model comparison table, you’re:

- Paying for subscriptions you underuse

- Getting mediocre outputs because you’re using the wrong tool for the job

- Wasting hours re-prompting instead of building

- Making gut-feel decisions in a world that now requires data

The AI model comparison table is the foundation of what Aizolo calls intelligent AI workflow design: the practice of routing the right task to the right model, every time, with confidence.

The 2026 AI Landscape at a Glance

Before we get into the full AI model comparison table, here’s the honest state of play heading into May 2026.

In Q1 2026 alone, major AI organizations shipped 255 model releases. Five frontier models are now clustered within a few benchmark points of each other. The gap between open-source and proprietary AI has nearly closed. And multi-model routing, the practice of directing different task types to different models, has become standard production architecture.

This is why the AI model comparison table matters more now than ever. Choosing one model and hoping for the best is no longer a strategy. It’s a liability.

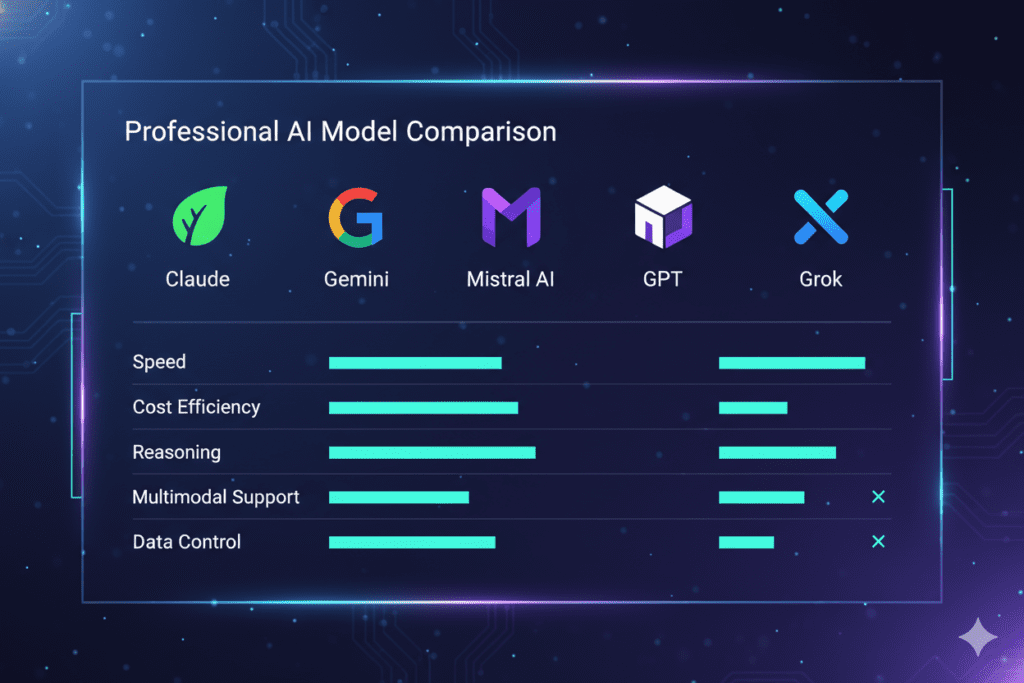

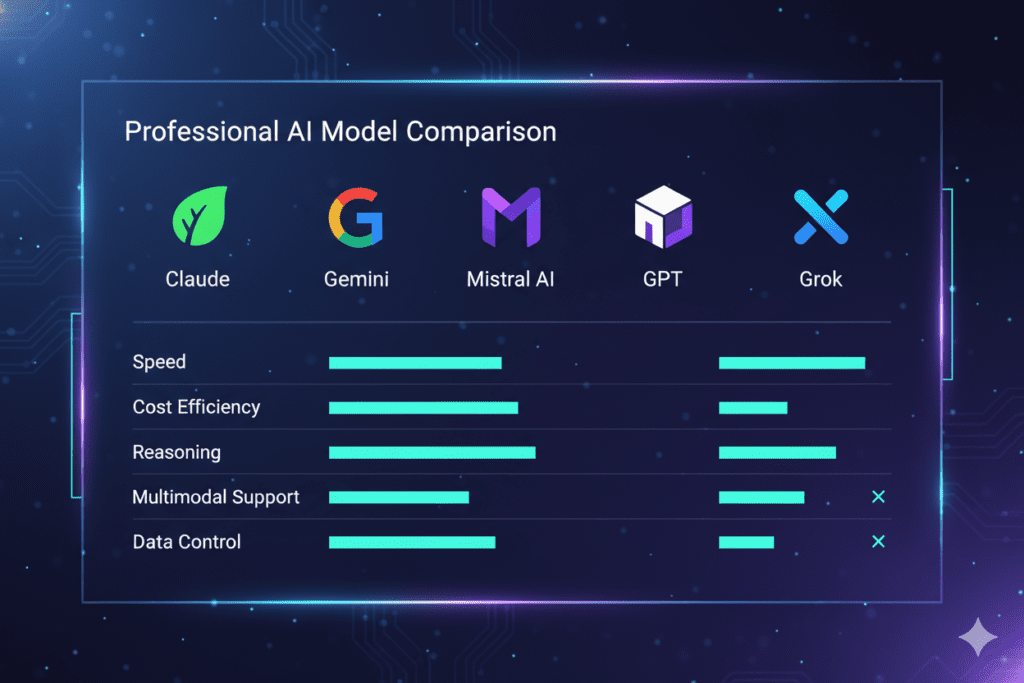

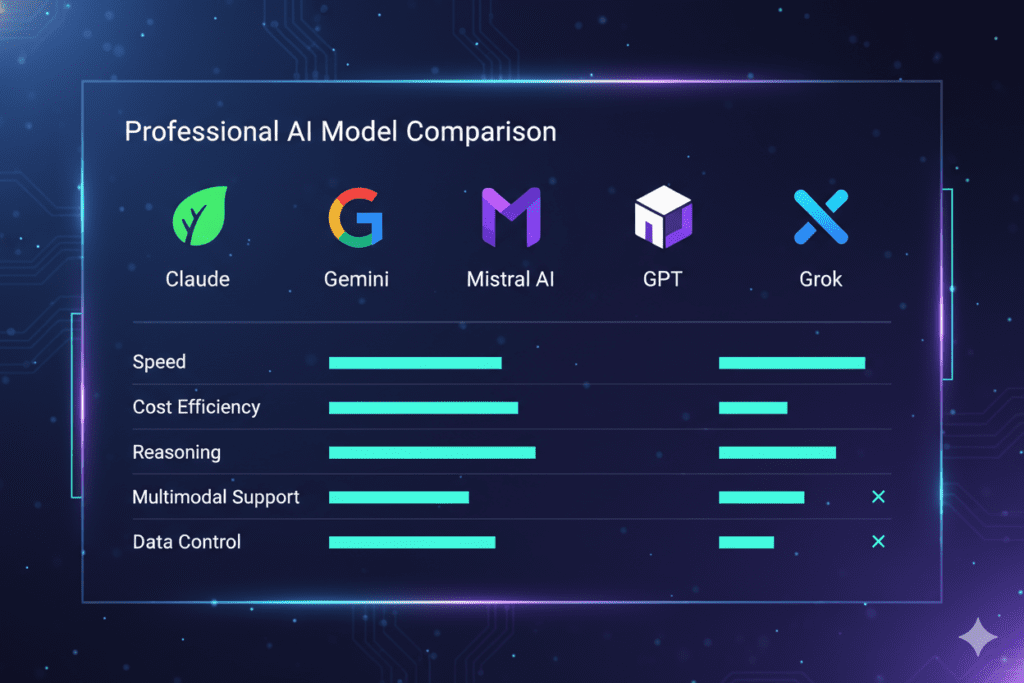

The Full AI Model Comparison Table: GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro vs Grok 4

| Feature | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro | Grok 4 |
|---|---|---|---|---|
| Best For | Structured long-form docs, analysis | Coding, reasoning, agentic tasks | Research, multimodal, science | Real-time data, social content |
| Context Window | 1M tokens | 1M tokens (beta) | 1M tokens (beta) | 2M tokens |
| Max Output | 128K tokens | 128K tokens | 128K tokens | — |
| SWE-bench Score | 74.9% | 74%+ | 78.8% | 75% |
| ARC-AGI-2 | — | — | 77.1% | — |
| Coding Ecosystems | — | Cursor, Windsurf, Claude Code | — | — |
| Real-Time Data | No | No | Limited | Yes (X/web) |
| Pricing (per 1M tokens) | Mid-range | Premium | $2 input / $12 output (best value) | Mid-range |
| Multimodal | Yes | Yes | Yes (text, image, audio, video) | Yes |
| Open Weights | No | No | No | No |

This AI model comparison table gives you a snapshot. But real model selection requires more than benchmark numbers. It requires matching model strengths to your specific workflow.

Let’s do that properly.

Breaking Down the AI Model Comparison Table by Use Case

Founders and Startup Builders

If you’re a founder, which row of the AI model comparison table matters most depends on the stage of your build.

At the ideation stage, you want broad, fluent reasoning. GPT-5.4 is the most versatile for generating pitch decks, investor memos, and product briefs in clean, structured prose. Its Canvas editing environment remains the best tool for iterating on long documents.

At the build stage, the AI model comparison table shifts. Claude Opus 4.6 powers both Cursor and Windsurf, the two most popular AI coding editors in 2026. If you’re writing, reviewing, or refactoring code, you’re almost certainly better served by Claude.

At the growth stage? Grok 4’s real-time access to X and web data makes it unbeatable for social listening, trend identification, and positioning content against what’s happening right now.

A founder who understands the AI model comparison table doesn’t pick one AI. They build a workflow that routes each task to the model that wins at that task.

The challenge? Managing three to five separate subscriptions to do this costs $110+ per month. Aizolo solves this by giving you every frontier model under one $9.90/month subscription, so you can run your own real-world AI model comparison table on your actual content, not just on benchmark abstractions.

Developers and Engineers

For developers, the AI model comparison table is a technical decision, not just a matter of convenience.

Here’s what the data says as of April–May 2026:

- Claude Opus 4.6 scores 74%+ on SWE-bench Verified and powers the most widely used AI coding editors

- Gemini 3.1 Pro leads at 78.8% on SWE-bench, making it the highest raw benchmark scorer for code tasks

- GLM-5.1, an open-source alternative, scored 94.6% of Claude Opus performance at a fraction of the cost, making it a serious budget option for high-volume teams

The AI model comparison table for developers also includes a dimension most guides skip: production routing architecture. In 2026, serious engineering teams don’t send every request to a single frontier model. They route 70% of traffic to a fast, cheap model like DeepSeek V4-Flash, 25% to a mid-tier model like Claude Sonnet 4.6, and 5% to a frontier model like Claude Opus 4.6 for complex reasoning tasks.

This multi-model strategy delivers frontier-level output at roughly 15% of all-frontier cost. The AI model comparison table is the foundation for building that architecture intelligently.
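The “roughly 15%” figure is a traffic-weighted average of per-tier prices, so you can check it against your own price sheet. Below is a minimal sketch, assuming the 70/25/5 split described above and made-up relative prices (frontier = 1.0 per token, mid-tier = 0.2, fast tier = 0.05). The tier labels and price ratios are hypothetical placeholders, not published rates. The sketch also includes a toy complexity-based router that maps each request to a tier.

```python
# Minimal sketch: blended cost of tiered routing vs. sending everything to the
# frontier tier. Prices are HYPOTHETICAL relative units, not real published rates.
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str              # placeholder label, e.g. "fast", "mid", "frontier"
    traffic_share: float   # fraction of requests routed to this tier
    relative_price: float  # cost per token relative to the frontier tier


# Split taken from the text (70/25/5); the prices are illustrative assumptions.
TIERS = [
    Tier("fast", 0.70, 0.05),
    Tier("mid", 0.25, 0.20),
    Tier("frontier", 0.05, 1.00),
]


def blended_cost_ratio(tiers: list[Tier]) -> float:
    """Cost of the mixed setup as a fraction of an all-frontier setup.

    Assumes every request consumes roughly the same number of tokens.
    """
    total_share = sum(t.traffic_share for t in tiers)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(t.traffic_share * t.relative_price for t in tiers)


def pick_tier(complexity: float) -> str:
    """Toy router: map a 0..1 complexity score (however you estimate it) to a tier."""
    if complexity < 0.7:
        return "fast"
    if complexity < 0.95:
        return "mid"
    return "frontier"


if __name__ == "__main__":
    ratio = blended_cost_ratio(TIERS)
    print(f"blended cost: {ratio:.1%} of all-frontier")  # 13.5% with these made-up prices
```

Swap in your real per-token prices to see whether your own mix lands near the ~15% figure. The thresholds in `pick_tier` are arbitrary. If complexity scores were spread evenly from 0 to 1, they would reproduce the 70/25/5 split. In practice, you would calibrate them on real traffic.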

Explore more expert technical guides on Aizolo’s blog.

Marketers and Content Creators

The AI model comparison table for marketing tells a nuanced story.

For long-form content, campaign copy, and natural prose, Claude Opus 4.6 produces the most fluent, human-feeling writing of any frontier model. Its 128K-token output capacity means you can generate a full content strategy document, brand voice guide, or product launch brief in a single pass.

For real-time content (trend-driven social posts, reactive campaigns, live event coverage), Grok 4 is the only model in the AI model comparison table with live access to what’s happening on the web and X right now.

For multilingual campaigns and scientific product claims, Gemini 3.1 Pro’s multimodal capabilities and 24-language voice support give it a specific advantage for global brands.

For SEO-structured content with clear summaries, headers, and document organization, GPT-5.4’s Canvas environment and long-form structure tools remain the most practical choice.

A savvy content team uses all four. The AI model comparison table tells you when to switch.

Students and Researchers

The AI model comparison table for academic users has a clear leader: Gemini 3.1 Pro.

Its 77.1% score on ARC-AGI-2, more than double the previous version’s result, reflects genuine architectural improvement in novel reasoning. For scientific research, academic writing, and multimodal analysis (text, image, audio), Gemini 3.1 Pro is the strongest model in the 2026 AI model comparison table.

Claude Opus 4.6 produces the clearest plain-language explanations of complex topics — valuable for turning dense research papers into digestible summaries or structured study notes.

GPT-5.4 is still the strongest pick for structured study guides, formatted summaries, and standardized test preparation.

The problem is cost. Most students can’t sustain $110/month across four subscriptions. This is where the AI model comparison table becomes more than academic: it becomes a budget decision. Aizolo’s Pro plan at $9.90/month gives students access to every model in this table under one roof.

Freelancers

For freelancers, the AI model comparison table is a time-to-delivery question.

If you’re a copywriter, Claude wins for long-form, natural prose. If you’re a graphic designer using AI-assisted image generation, DALL-E models and Midjourney-style tools handle different visual aesthetics. If you’re a translator or multilingual content producer, Gemini 3.1 Pro’s language coverage wins.

The insight most freelancer guides miss: you don’t need to commit to one row of the AI model comparison table permanently. You need to know the table well enough to switch models mid-project based on the task. That fluency is what separates freelancers who produce average AI-assisted work from those who produce genuinely client-ready outputs.

Read more expert guides on Aizolo to build that fluency.

SaaS Builders

The AI model comparison table for SaaS builders is the most complex use case in this guide, and also the most consequential.

SaaS products in 2026 need AI for customer support, onboarding, search, content generation, code review, and data analysis. No single model in the AI model comparison table wins all six categories. That means SaaS builders who rely on a single model are leaving quality and efficiency on the table.

The strategic approach: use the AI model comparison table to define your routing architecture before you build. Decide upfront which model handles which category. Bake that routing logic into your infrastructure. Then test and iterate using a platform that makes side-by-side comparisons easy.
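Deciding upfront which model handles which category can be as simple as a lookup table with a fallback. The six category names in the sketch below come from the paragraph above. The model identifier strings and the assignments are hypothetical placeholders for illustration only. They are not recommendations and not real API model IDs.

```python
# Minimal sketch of a category -> model routing table for a SaaS product.
# Model names are HYPOTHETICAL placeholder strings, not real API identifiers;
# fill them in from your own side-by-side tests.
from enum import Enum


class Category(Enum):
    SUPPORT = "customer_support"
    ONBOARDING = "onboarding"
    SEARCH = "search"
    CONTENT = "content_generation"
    CODE_REVIEW = "code_review"
    ANALYSIS = "data_analysis"


ROUTES: dict[Category, str] = {
    Category.SUPPORT: "model-a",
    Category.ONBOARDING: "model-a",
    Category.SEARCH: "model-b",
    Category.CONTENT: "model-c",
    Category.CODE_REVIEW: "model-d",
    # Category.ANALYSIS is intentionally left unset to demonstrate the fallback.
}

DEFAULT_MODEL = "model-b"


def route(category: Category) -> str:
    """Return the model configured for a category, or the default if none is set."""
    return ROUTES.get(category, DEFAULT_MODEL)


def unrouted() -> list[Category]:
    """List categories that still fall back to the default (a simple config check)."""
    return [c for c in Category if c not in ROUTES]


if __name__ == "__main__":
    for c in Category:
        print(f"{c.value:>20} -> {route(c)}")
```

Keeping the mapping in one data structure means that when a comparison test changes your mind, you edit one line of config instead of hunting through the product.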

This is exactly what Aizolo is designed for. Its side-by-side comparison interface lets SaaS builders test real prompts, not theoretical benchmarks, across multiple models simultaneously. When you’re deciding whether Claude or Gemini better handles your specific customer support use case, you don’t want a static AI model comparison table. You want live results with your actual prompts.

Start building smarter with Aizolo.

What the AI Model Comparison Table Doesn’t Tell You (And What Aizolo Does)

Static benchmark tables — even comprehensive ones — have a fundamental limitation. They tell you how models perform on standardized test sets. They don’t tell you how models perform on your specific prompt, your specific use case, your specific context.

That gap matters enormously in practice.

Claude Sonnet 4.6, for example, delivers 98% of Claude Opus quality for many real-world tasks — at significantly lower cost. But you’d only discover that by comparing them directly on your actual work, not by reading a benchmark table.

GPT-5.4 might outperform Claude on one type of product description and underperform on another, depending on the industry, tone, and structure of your brief. A static AI model comparison table can’t capture that.

This is why Aizolo was built the way it was: not as a replacement for the AI model comparison table, but as a live testing environment that lets you build your own table from real data.
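At its simplest, building your own comparison table from real data means scoring each model’s output on your actual prompts and averaging the scores per task. The sketch below assumes you have already collected (task, model, score) ratings, for example 1–5 scores from a reviewer. The sample records are invented placeholders, not measured results.

```python
# Minimal sketch: turn your own ratings into a per-task comparison table.
# The sample records are INVENTED placeholders, not real benchmark results.
from collections import defaultdict
from statistics import mean

Rating = tuple[str, str, float]  # (task, model, score)


def build_table(ratings: list[Rating]) -> dict[str, dict[str, float]]:
    """Average score for every (task, model) pair."""
    buckets: dict[str, dict[str, list[float]]] = defaultdict(lambda: defaultdict(list))
    for task, model, score in ratings:
        buckets[task][model].append(score)
    return {
        task: {model: mean(scores) for model, scores in models.items()}
        for task, models in buckets.items()
    }


def best_per_task(table: dict[str, dict[str, float]]) -> dict[str, str]:
    """Pick the highest-average model for each task (ties go to the first seen)."""
    return {task: max(models, key=models.get) for task, models in table.items()}


if __name__ == "__main__":
    sample = [
        ("product_description", "model-x", 4.0),
        ("product_description", "model-x", 3.0),
        ("product_description", "model-y", 4.5),
        ("support_reply", "model-x", 5.0),
        ("support_reply", "model-y", 3.5),
    ]
    table = build_table(sample)
    print(table)
    print(best_per_task(table))
```

The averaging is deliberately naive. With only a handful of ratings per cell, small differences may just be noise, so rerun the comparison as you collect more prompts and as new models ship.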

Aizolo’s features that go beyond the static AI model comparison table include:

- Side-by-side comparison: Send the same prompt to GPT-5.4, Claude, and Gemini simultaneously and compare outputs in real time

- Custom API keys: Bring your own keys (encrypted and secure) for unlimited usage at your own cost

- Smart Prompt Manager: Save your best-performing prompts and reuse them across models

- AI Memory: The platform remembers your preferences across sessions, so comparisons get more relevant over time

- One dashboard for all models: No more tab-switching, copy-pasting, or managing five separate subscriptions
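The side-by-side pattern in the first bullet comes down to sending one prompt to several models concurrently and collecting the replies together. The sketch below is not Aizolo’s API. The `call_model` function is a hypothetical stub standing in for whichever provider SDK or HTTP call you actually use, and the model labels are placeholders.

```python
# Minimal sketch of a side-by-side fan-out: same prompt, several models, in parallel.
# call_model is a HYPOTHETICAL stub; replace it with a real provider call.
import time
from concurrent.futures import ThreadPoolExecutor


def call_model(model: str, prompt: str) -> str:
    """Stand-in for a network call to a model provider."""
    time.sleep(0.1)  # simulate I/O latency
    return f"[{model}] reply to: {prompt}"


def compare(prompt: str, models: list[str], timeout: float = 30.0) -> dict[str, str]:
    """Send the same prompt to every model concurrently; return {model: output}.

    timeout is the maximum number of seconds to wait for each individual result.
    A model that raises (or times out) is recorded with an error string instead
    of failing the whole comparison.
    """
    results: dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {m: pool.submit(call_model, m, prompt) for m in models}
        for model, fut in futures.items():
            try:
                results[model] = fut.result(timeout=timeout)
            except Exception as exc:  # keep the other models' results if one fails
                results[model] = f"ERROR: {exc}"
    return results


if __name__ == "__main__":
    out = compare("Write a 2-line product tagline.", ["model-a", "model-b", "model-c"])
    for model, text in out.items():
        print(f"{model}: {text}")
```

Threads fit here because the work is I/O-bound: the program mostly waits on remote APIs. An `asyncio` version with an async HTTP client would be an equally reasonable design.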

For $9.90/month, you get every model in this AI model comparison table in one place. That’s not just a subscription; it’s a workflow transformation.

How to Use This AI Model Comparison Table Practically

Here’s a simple decision framework for choosing the right model based on the AI model comparison table above:

Are you writing long-form content? Start with Claude Opus 4.6. Compare against GPT-5.4 if you want a more structured, document-like output.

Are you writing code? Start with Claude (Cursor/Windsurf ecosystem) or Gemini 3.1 Pro (highest SWE-bench score). Compare both directly on your codebase.

Are you doing research or academic work? Gemini 3.1 Pro leads the table here. Claude is best for explaining and summarizing complex findings.

Do you need real-time data? Grok 4 is the only model in the AI model comparison table with live access to X and the web.

Are you budget-constrained? Use Aizolo’s all-in-one platform to access every model for $9.90/month, then use the platform’s comparison tools to route your work to the strongest model per task.

Are you building a SaaS product? Don’t rely on a single row in the AI model comparison table. Build a multi-model routing architecture from day one, using the comparison tools to validate each routing decision.
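The model-choice questions in this framework can be encoded as a small rule-based helper, for example as a first pass in a routing layer. This sketch mirrors the framework’s own suggestions. The task labels and the order of the checks are assumptions made for illustration. The returned strings are the model names used in this guide, not API identifiers.

```python
# Minimal sketch: the decision framework above as ordered rules.
# Task labels ("long_form", "code", "research") are HYPOTHETICAL; the suggestions
# mirror this guide's framework and should be validated on your own prompts.
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    start_with: str
    compare_against: Optional[str] = None


def suggest(task: str, needs_realtime: bool = False) -> Suggestion:
    """Return a starting model (and an optional comparison model) for a task."""
    if needs_realtime:
        # Per the table above, Grok 4 is the only model listed with live X/web access.
        return Suggestion("Grok 4")
    rules = {
        "long_form": Suggestion("Claude Opus 4.6", "GPT-5.4"),
        "code": Suggestion("Claude Opus 4.6", "Gemini 3.1 Pro"),
        "research": Suggestion("Gemini 3.1 Pro", "Claude Opus 4.6"),
    }
    if task not in rules:
        raise ValueError(f"unknown task label: {task!r}")
    return rules[task]
```

For example, `suggest("code")` suggests starting with Claude Opus 4.6 and comparing against Gemini 3.1 Pro. The budget and SaaS questions are about how you access and route models, not which model to start with, so they are left out of the rules.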

The Open-Source Dimension of the AI Model Comparison Table

No complete AI model comparison table in 2026 ignores open-source models.

GLM-5 from Zhipu AI is the most significant open-source development of the year. With 744 billion parameters and an SWE-bench score of 77.8%, the highest among open-weight models, it competes directly with frontier proprietary models. GLM-5.1, its coding-focused refinement, scores 94.6% of Claude Opus performance at a small fraction of the cost.

For developers and SaaS teams doing high-volume inference on a budget, GLM-5.1 is the most disruptive entry in the 2026 AI model comparison table. Platforms like Hugging Face provide access to these models for teams that want to experiment with open-source options.

DeepSeek V4, with 1 trillion parameters and a 40% memory reduction over V3, is another open-source entry reshaping the AI model comparison table for production infrastructure teams.

The competitive dynamic between open-source and proprietary models is the defining story of the 2026 AI model comparison table, and it’s still evolving fast.

Beyond the Table: Aizolo’s Philosophy of AI Model Selection

Most AI model comparison table guides end with a ranking. Aizolo’s approach goes further.

The Aizolo philosophy isn’t about picking a winner from the AI model comparison table and committing to it. It’s about building the habit of comparing before committing, using real prompts, real tasks, and real outputs to inform every AI decision you make.

This habit is what separates users who get 10x productivity gains from AI from those who get marginally better Google searches. The AI model comparison table is the starting point. Real-world comparison is the practice. And Aizolo is the environment where that practice becomes a workflow.

Trusted by more than 5,000 AI enthusiasts worldwide, Aizolo has built a community of founders, developers, marketers, students, and freelancers who’ve stopped guessing which AI is best and started knowing.

Follow Aizolo for practical tech and startup insights, and stay ahead of every shift in the AI model comparison table as new models are released.

Related Reading on Aizolo

If this AI model comparison table guide was useful, here are more expert resources to deepen your AI decision-making:

- Best AI Coding Models 2026 Comparison — for developers choosing between Claude, Gemini, and GPT for code tasks

- AI Model Benchmarks Comparison 2026 — for a deeper dive into the benchmark data behind the AI model comparison table

- Platforms to Compare Multiple AI Models Side by Side — for teams building live comparison workflows

- Benefits of Comparing AI Models — for anyone still on the fence about building a multi-model workflow

Conclusion: The AI Model Comparison Table Is Just the Beginning

The AI model comparison table gives you the map. Aizolo gives you the vehicle.

In 2026, no single AI model dominates every row of the AI model comparison table. GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, and Grok 4 each win decisively at specific tasks and lose at others. Open-source models like GLM-5 are closing the gap faster than most proprietary vendors want to admit.

The professionals, founders, developers, and creators who thrive in this environment won’t be the ones who picked the “best” AI. They’ll be the ones who built the smartest multi-model workflow around the AI model comparison table: routing each task to the strongest model, comparing outputs in real time, and adapting as the landscape shifts.

That’s exactly what Aizolo is built for.

For $9.90/month, you get access to every model in this AI model comparison table, a live side-by-side comparison interface, a smart prompt manager, AI memory, and a community of 5,000+ AI enthusiasts building smarter every day.

Stop juggling subscriptions. Stop guessing which model to use. Start working from a real, live AI model comparison table built on your actual work.

Start building smarter with Aizolo — free to try, no setup required.

Suggested Internal Links

- Best AI Coding Models 2026 Comparison

- AI Model Benchmarks Comparison 2026

- Platforms to Compare Multiple AI Models Side by Side

- Benefits of Comparing AI Models

- Best AI Models by Category 2026

Suggested External Links

- Artificial Analysis AI Model Rankings — multi-dimensional benchmark data including speed, cost, and intelligence index

- Vellum LLM Leaderboard — updated rankings for reasoning, coding, math, and multilingual tasks

- LogRocket AI Dev Tool Power Rankings March 2026 — developer-focused comparison with real-world coding benchmarks

- Hugging Face Model Hub — access to open-source models including GLM-5 and DeepSeek V4

- Build Fast With AI — Best AI Models May 2026 — full benchmark analysis with production routing architecture guidance