The Night Vikram Lost a Client Because He Picked the Wrong AI

It was a Wednesday evening in Bangalore. Vikram, a 29-year-old freelance content strategist, had a deadline in three hours.

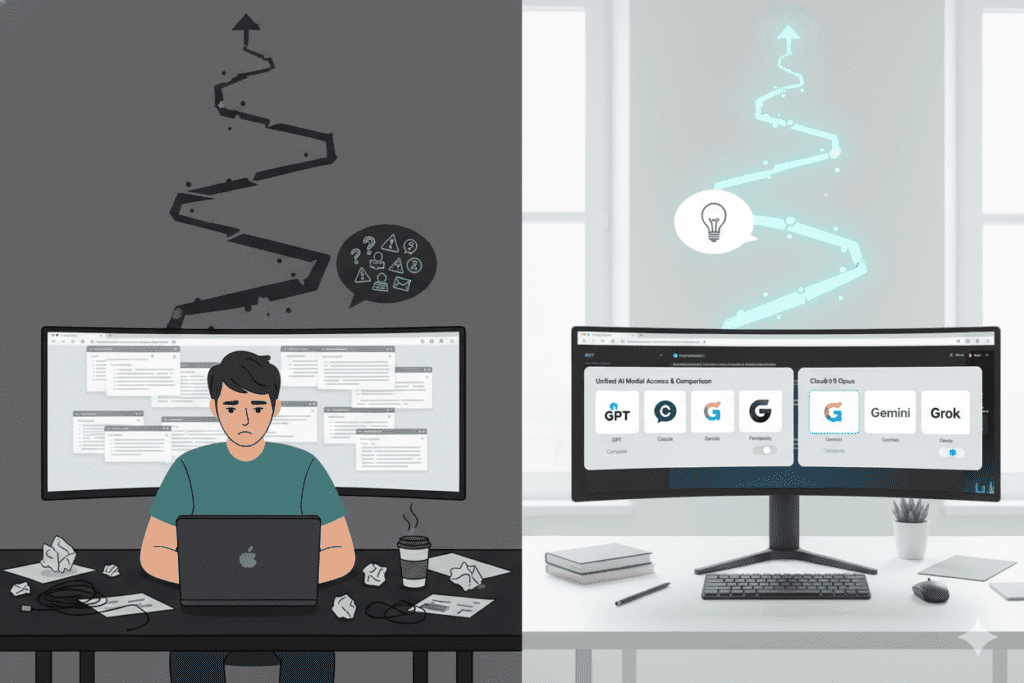

His client — a fast-growing SaaS startup — needed a landing page rewrite. He opened ChatGPT, typed his prompt, got an output. It felt generic. He closed the tab and opened Claude. Better tone, but the structure felt off. He switched to Gemini. By the time he’d manually cycled through three platforms to compare multiple AI models side by side, copy-pasting prompts each time, he was 90 minutes in. He hadn’t written a single word.

He missed the deadline. The client moved on.

Here’s the uncomfortable truth Vikram learned that night: the problem wasn’t which AI he chose. The problem was that he had no system for comparing them efficiently. He was comparing multiple AI models side by side in the slowest, most expensive, most frustrating way imaginable: manually.

In 2026, that approach costs you real money. And it costs you something even more precious: time.

This guide exists to fix that. We’ll walk through exactly why the need for platforms to compare multiple AI models side by side has exploded, what to look for in a comparison platform, and how tools like Aizolo have turned this painful manual process into a one-click superpower.

Why You Suddenly Need Platforms to Compare Multiple AI Models Side by Side

Three years ago, there was one dominant AI assistant. You used it. That was it.

Today, the landscape looks completely different. GPT-5, Claude Opus 4.6, Gemini 3.1 Pro, Grok, Perplexity, Mistral, DeepSeek — every few months, a new frontier model drops with a new capability claim. And here’s the real problem: no single model wins everything.

This isn’t marketing spin. It’s benchmark reality.

- Claude dominates long-form reasoning and nuanced writing

- GPT-5 excels at structured outputs and creative ideation

- Gemini leads on multimodal tasks involving images and documents

- Grok performs well on real-time web-aware tasks

- Perplexity shines for research with citations

For any professional who works with AI daily — developer, founder, marketer, freelancer, student, SaaS builder — the difference between using the right model and the wrong model is the difference between a great output and an average one. Between a saved hour and a wasted one.

That’s why platforms to compare multiple AI models side by side aren’t a “nice to have” in 2026. They are the foundational layer of an efficient AI workflow.

And yet most people are still doing this comparison manually. They’re paying $20 to $30 per subscription across four or five platforms, switching tabs constantly, re-entering context every single time, and making guesses about which model did better — instead of actually seeing the outputs next to each other.

Let’s fix that.

What Makes a Great Platform to Compare Multiple AI Models Side by Side

Not all platforms to compare multiple AI models side by side are built equal. Before we dive into the best options, here’s the framework you need to evaluate them.

Real-Time Simultaneous Output

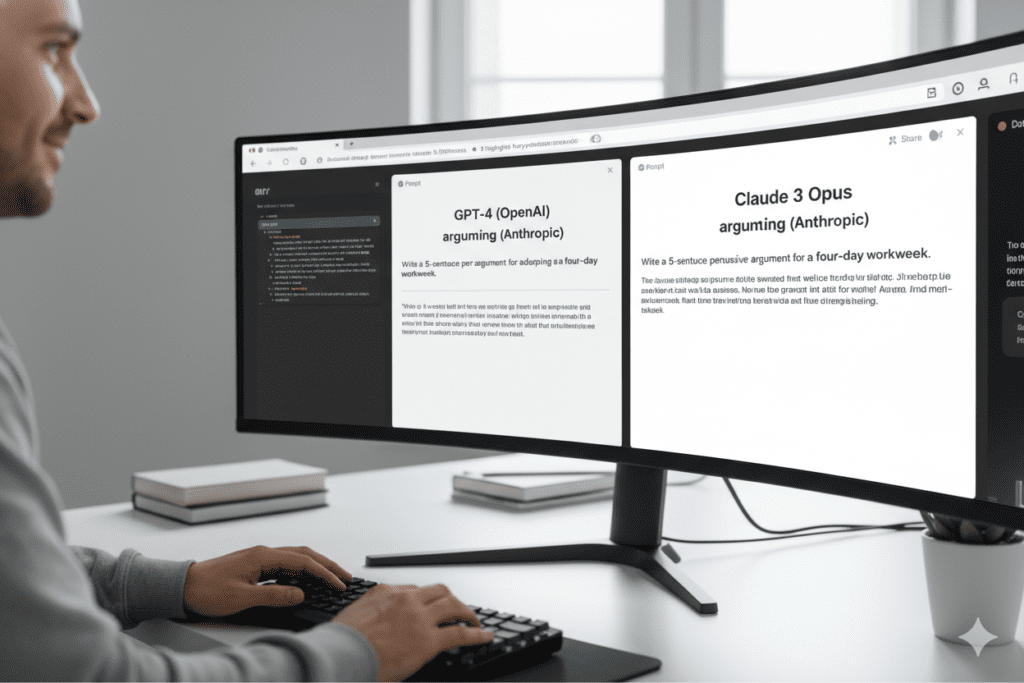

The gold standard for platforms to compare multiple AI models side by side is this: you type one prompt, and all selected models respond at the same time, in the same viewport. Not sequentially. Not in different tabs. Simultaneously.

Why does this matter? Because when you compare sequentially (run Model A, wait, run Model B, wait), your evaluation is polluted. You’re not comparing apples to apples. You’re comparing a faded memory to a fresh impression. Platforms to compare multiple AI models side by side need to show every output at the same time to be genuinely useful.
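
To make "simultaneously" concrete, here is a minimal Python sketch of sending one prompt to several models at once and collecting the replies together. The two model functions are hypothetical stubs that only simulate network latency. They are not real provider APIs, and nothing here describes how any particular platform is implemented.

```python
import asyncio
from typing import Awaitable, Callable, Dict

ModelFn = Callable[[str], Awaitable[str]]


# Hypothetical stand-ins for real model clients; each one just sleeps briefly.
async def fake_model_a(prompt: str) -> str:
    await asyncio.sleep(0.05)
    return f"[model-a] {prompt.upper()}"


async def fake_model_b(prompt: str) -> str:
    await asyncio.sleep(0.05)
    return f"[model-b] {prompt[::-1]}"


async def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send one prompt to every model concurrently and collect the replies."""
    names = list(models)
    # gather() starts all calls before waiting, so the waits overlap.
    replies = await asyncio.gather(*(models[name](prompt) for name in names))
    return dict(zip(names, replies))


def run_comparison(prompt: str) -> Dict[str, str]:
    models = {"model-a": fake_model_a, "model-b": fake_model_b}
    return asyncio.run(compare(prompt, models))


if __name__ == "__main__":
    for name, reply in run_comparison("landing page headline").items():
        print(f"{name}: {reply}")
```

Because the calls overlap, total wait time is roughly that of the slowest model rather than the sum of all of them, which is the whole point of a side-by-side view.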

Single Subscription Access

If a comparison platform requires you to bring your own subscriptions to every model, you haven’t solved the cost problem. The best platforms to compare multiple AI models side by side should give you access to GPT, Claude, Gemini, Grok, and others under one roof, under one bill.

The current market reality: paying individually for GPT-5, Claude, Gemini Pro, Grok, and Perplexity adds up to roughly $110 per month. A unified platform should cost a fraction of that — and platforms to compare multiple AI models side by side that don’t solve this cost problem are leaving you with half a solution.

Prompt Management and Memory

When you use platforms to compare multiple AI models side by side professionally, you run the same prompt (or variations of it) dozens of times. A smart comparison platform saves your best prompts, remembers your preferences, and lets you build a reusable library so you’re not rewriting context from scratch on every session.
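
If you want a feel for what a reusable prompt library involves, here is a small Python sketch. The class and field names (`SavedPrompt`, `PromptLibrary`, `tags`) are made up for illustration and are not taken from any product. It simply stores prompt templates, fills in placeholders, and round-trips the library through JSON.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class SavedPrompt:
    name: str
    template: str  # uses str.format placeholders such as "{product}"
    tags: List[str] = field(default_factory=list)


class PromptLibrary:
    """In-memory store of reusable prompt templates (illustrative only)."""

    def __init__(self) -> None:
        self._prompts: Dict[str, SavedPrompt] = {}

    def save(self, prompt: SavedPrompt) -> None:
        self._prompts[prompt.name] = prompt

    def render(self, name: str, **values: str) -> str:
        # Fill the template's placeholders with the supplied values.
        return self._prompts[name].template.format(**values)

    def by_tag(self, tag: str) -> List[str]:
        return sorted(p.name for p in self._prompts.values() if tag in p.tags)

    def to_json(self) -> str:
        return json.dumps([asdict(p) for p in self._prompts.values()], indent=2)

    @classmethod
    def from_json(cls, text: str) -> "PromptLibrary":
        library = cls()
        for item in json.loads(text):
            library.save(SavedPrompt(**item))
        return library


if __name__ == "__main__":
    library = PromptLibrary()
    library.save(
        SavedPrompt(
            name="landing",
            template="Rewrite the landing page for {product}.",
            tags=["copywriting"],
        )
    )
    print(library.render("landing", product="a SaaS startup"))
```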

A Growing Model Library

The AI model landscape changes every month. Platforms to compare multiple AI models side by side need to keep pace. Look for platforms that regularly add new models rather than locking you into a static roster from six months ago.

Custom API Key Support

For developers and power users, the ability to plug in your own API keys — securely encrypted — means you can extend your comparison platform into your production workflow. This is especially important for teams building AI-integrated products who need to test model behavior at scale.

The Real Cost of Not Using Platforms to Compare Multiple AI Models Side by Side

Before we get into specific tools, let’s talk about what this problem actually costs you — because it’s higher than most people realize.

Time cost: If you spend even 20 minutes per day manually switching between AI platforms to compare multiple AI models side by side, that’s over 120 hours per year. For a freelancer billing ₹3,000/hour, that’s ₹3.6 lakh in lost productivity — gone just from inefficient model switching.

Decision cost: When you can’t compare outputs side by side, you make guesses. You pick the model you’re most familiar with, not the model that’s best for this specific task. Over hundreds of decisions, that compounds into consistently suboptimal work.

Subscription cost: Paying for five separate AI subscriptions just so you can compare multiple AI models side by side manually costs roughly $110/month. That’s $1,320 per year, for a workflow that’s still friction-heavy.

The math is clear. Platforms to compare multiple AI models side by side that actually consolidate access aren’t an expense. They’re an investment that pays back within the first week.
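
If you want to rerun this math with your own numbers, the short Python sketch below reproduces the time and subscription figures above. The inputs come from this section. The one assumption is daily use, 365 days a year, which is what puts 20 minutes a day at just over 120 hours.

```python
# Inputs taken from the section above; DAYS_PER_YEAR is an assumption (daily use).
MINUTES_PER_DAY = 20
DAYS_PER_YEAR = 365
HOURLY_RATE_INR = 3_000
SEPARATE_SUBS_USD_PER_MONTH = 110


def hours_lost_per_year(minutes_per_day: int, days: int = DAYS_PER_YEAR) -> float:
    """Hours per year spent switching between tools."""
    return minutes_per_day * days / 60


def time_cost_inr(minutes_per_day: int, hourly_rate: int, days: int = DAYS_PER_YEAR) -> float:
    """Billable value of the lost hours, in rupees."""
    return minutes_per_day * days * hourly_rate / 60


def yearly_subscription_usd(monthly: float) -> float:
    """Twelve months of a monthly subscription bill."""
    return round(monthly * 12, 2)


if __name__ == "__main__":
    hours = hours_lost_per_year(MINUTES_PER_DAY)
    cost = time_cost_inr(MINUTES_PER_DAY, HOURLY_RATE_INR)
    subs = yearly_subscription_usd(SEPARATE_SUBS_USD_PER_MONTH)
    print(f"Hours lost per year: {hours:.1f}")
    print(f"Time cost per year: INR {cost:,.0f}")
    print(f"Separate subscriptions per year: USD {subs:,.2f}")
```

With daily use this gives about 121.7 hours and ₹3,65,000 a year, in line with the rounded figures of over 120 hours and ₹3.6 lakh. Swap in your own minutes and rate to see your own number.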

The Best Platforms to Compare Multiple AI Models Side by Side in 2026

1. Aizolo — The Best All-in-One Platform to Compare Multiple AI Models Side by Side

If you’re serious about building an efficient AI workflow in 2026, Aizolo is the platform that was purpose-built for exactly this use case.

Aizolo’s core insight is simple and powerful: stop paying $110/month across five separate subscriptions and start accessing all of them — GPT-5, Claude, Gemini, Grok, Perplexity, and more — from a single unified workspace for $9.90/month.

But Aizolo isn’t just about cost savings. It’s about changing how you interact with AI entirely.

How Aizolo lets you compare multiple AI models side by side:

- Simultaneous multi-model comparison: Type your prompt once and see responses from GPT, Claude, Gemini, and others rendered side by side in real time. No tab switching. No re-entering context. No mental gymnastics.

- 3,000,000 tokens per month on the Pro plan — enough for serious, high-volume use

- Smart Prompt Manager — save, categorize, and reuse your best prompts across all models instantly

- AI Memory — the platform remembers your preferences and past context so every comparison starts informed, not from scratch

- Custom API Key Support — plug in your own encrypted API keys for unlimited usage in your own development environment

- AI Image, Video, and Audio Generation — so you’re not just comparing text models but accessing the full creative AI stack

- Chat Import — migrate your existing ChatGPT and Claude conversation history into one unified workspace

At $9.90/month (or $99.90/year), Aizolo saves most users over $1,000 annually while actually improving the quality of their AI comparisons. It’s not a workaround. It’s a proper platform built for the way professionals compare multiple AI models side by side.

Start comparing AI models for free on Aizolo →

2. Artificial Analysis — For Benchmark-Level Model Intelligence

Artificial Analysis is one of the best external resources for technical users who want deep, data-driven comparisons of AI models. It tracks models across quality, price, output speed, latency, context window, and other performance metrics.

It’s an excellent complement to a hands-on platform for comparing multiple AI models side by side. Think of Artificial Analysis as your research layer, where you study benchmark data before you run your own real-world tests on a platform like Aizolo.

Best for: Developers, researchers, and technical founders who want to understand model performance at a data level before making integration decisions.

Limitation: It’s a research tool, not an interactive comparison workspace. You can’t run your own prompts through it or compare outputs on your specific tasks.

3. OverallGPT — Simple Side-by-Side Comparison

OverallGPT lets you compare responses from different AI models side by side with a clean, minimal interface. It’s a solid option for users who want a lightweight platform to compare multiple AI models side by side without a complex feature set.

Best for: Casual users and students who want quick, one-off comparisons.

Limitation: Fewer models, less depth, and no unified subscription model or productivity features like prompt management and AI memory.

Real-World Use Cases: How Different Professionals Use Platforms to Compare Multiple AI Models Side by Side

Understanding the theory is one thing. Let’s see how platforms to compare multiple AI models side by side translate to real results across different roles.

For Founders: Making Faster, Smarter Product Decisions

Priya runs a B2B SaaS startup in Hyderabad. Every week she uses platforms to compare multiple AI models side by side to test investor update drafts, product positioning copy, and competitive analysis summaries.

Before Aizolo, she’d spend 40 minutes cycling through ChatGPT and Claude manually. Now she types her brief once, sees GPT and Claude responses simultaneously, and picks the stronger version in under five minutes. Multiply that across a week and she’s reclaimed nearly three hours of founder-level thinking time.

“The comparison isn’t just about which AI is smarter,” she says. “It’s about which one understands my context for this specific task right now. And Aizolo is the only platform that lets me see that clearly.”

For Developers: Testing Model Behavior in Production-Adjacent Conditions

Rohan is a backend developer in Pune building an AI-integrated customer support tool. He needs platforms to compare multiple AI models side by side not just for quality, but also for consistency, latency, and structured output reliability.

He uses Aizolo’s custom API key support to run test prompts through GPT-5 and Claude simultaneously, comparing how each model handles edge cases in his data processing pipeline. What used to require five separate integrations and test environments now happens in one unified workspace.

“I’m not just comparing chat responses. I’m comparing how models behave under the same production-like conditions,” Rohan explains. “Aizolo’s prompt manager means I’m running the same test battery every time, not rewriting from scratch.”
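
A check along the lines Rohan describes might look like the Python sketch below. It is only an illustration. The two model functions are hypothetical stubs, and the required keys (`intent`, `priority`) are invented for a support-ticket example. The sketch times each call and verifies that the reply is a JSON object with the expected fields.

```python
import json
import time
from typing import Callable, Dict, Tuple


def check_structured_reply(reply: str, required_keys: Tuple[str, ...]) -> bool:
    """Return True if the reply is a JSON object containing every required key."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and all(key in data for key in required_keys)


def run_case(
    model: Callable[[str], str], prompt: str, required_keys: Tuple[str, ...]
) -> Dict[str, object]:
    """Call one model once, recording latency and whether the output is valid."""
    start = time.perf_counter()
    reply = model(prompt)
    latency_s = time.perf_counter() - start
    return {"valid": check_structured_reply(reply, required_keys), "latency_s": latency_s}


# Hypothetical stubs standing in for real model calls.
def stub_strict(prompt: str) -> str:
    return json.dumps({"intent": "refund", "priority": "high"})


def stub_chatty(prompt: str) -> str:
    return 'Sure! Here is the JSON: {"intent": "refund"}'


if __name__ == "__main__":
    keys = ("intent", "priority")
    for name, model in {"strict": stub_strict, "chatty": stub_chatty}.items():
        print(name, run_case(model, "Classify this ticket as JSON.", keys))
```

Running the same cases against every candidate model, many times over, is what turns "it usually returns JSON" into a reliability number you can act on.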

For Marketers: Finding the Voice That Converts

Ananya is a digital marketer in Mumbai managing content for three different SaaS clients. Each brand has a distinct tone. What works for one client’s voice completely clashes with another’s.

Before she discovered platforms to compare multiple AI models side by side, she’d settle for whichever AI output felt “good enough” — because testing alternatives was too slow. Now she runs her content brief through multiple models on Aizolo and literally reads the outputs side by side.

“Claude’s tone is more empathetic. GPT is more structured. Gemini tends to be punchier. Seeing all three next to each other for the same brief — that’s the difference between guessing and knowing which model fits this client,” she says.

For Students: Getting Better Answers on Complex Questions

Deepika is a postgraduate student in Delhi researching AI ethics. She often uses platforms to compare multiple AI models side by side to triangulate different perspectives on contested academic questions.

Running the same research question through GPT, Claude, and Perplexity simultaneously gives her a richer, more nuanced understanding than any single model could provide. She also uses Aizolo’s AI memory feature so the platform retains her research context across sessions — meaning she never has to re-explain her thesis to a fresh chat window.

“It’s like having three research assistants with different specializations answering the same question at the same time,” she says.

For Freelancers: Protecting Your Margins With Better Output Quality

Platforms to compare multiple AI models side by side are a direct margin protector for freelancers. Every revision cycle costs time. Every mediocre AI output that slips through costs credibility.

When you use Aizolo to compare models simultaneously before delivering work to a client, you’re not just picking the best output. You’re doing quality control at the source. Freelancers on Aizolo report cutting revision cycles significantly — because they’re delivering the strongest possible output from the first draft.

Start building smarter with Aizolo →

For SaaS Builders: Choosing the Right Model for Every Feature

Building AI features into a product means making high-stakes model selection decisions. Platforms to compare multiple AI models side by side let SaaS builders test model behavior on their actual use cases — not generic benchmarks — before committing to an API integration.

Aizolo’s custom API key support means builders can test GPT, Claude, and Gemini against their real product prompts in one workspace, then scale the winning model into production with confidence.

Common Mistakes People Make When Using Platforms to Compare Multiple AI Models Side by Side

Even with the right platform, there are patterns that undermine effective AI comparison. Here are the ones we see most often.

Mistake 1: Comparing on generic prompts. “Write a product description” tells you very little about model capability. Use your actual work prompts — the ones that represent your real workflow — when comparing on platforms to compare multiple AI models side by side. The differences only emerge when the prompt has real stakes.

Mistake 2: Picking a winner after one comparison. No single prompt produces a definitive verdict. Run several variations. Mix up the task types. The best platforms to compare multiple AI models side by side make this easy with prompt management tools that let you save and replay prompt batteries.

Mistake 3: Ignoring consistency. A model that produces a brilliant output 70% of the time but confuses itself 30% of the time is less useful than a model that delivers solid outputs 95% of the time. When using platforms to compare multiple AI models side by side, track consistency across runs — not just peak performance.

Mistake 4: Not accounting for task specificity. Platforms to compare multiple AI models side by side reveal task-level differences, not universal rankings. Claude might win on your long-form content. GPT might win on your structured data tasks. The goal isn’t finding the “best AI” — it’s finding the best AI for this task.
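
Mistake 3 is easy to check with a few lines of code once you score each run. Here is a hedged Python sketch: the scores are invented sample data on a 0 to 10 scale, and the pass mark of 7 is an arbitrary assumption. It reports peak score, average score, and the share of runs that clear the pass mark.

```python
from statistics import mean
from typing import Dict, List


def consistency_report(
    scores: Dict[str, List[float]], passing: float = 7.0
) -> Dict[str, Dict[str, float]]:
    """Summarize each model's runs by peak, mean, and share of passing runs."""
    report = {}
    for model, runs in scores.items():
        report[model] = {
            "peak": max(runs),
            "mean": round(mean(runs), 2),
            "pass_rate": sum(score >= passing for score in runs) / len(runs),
        }
    return report


def most_consistent(report: Dict[str, Dict[str, float]]) -> str:
    """Pick the model with the highest share of passing runs."""
    return max(report, key=lambda model: report[model]["pass_rate"])


# Invented sample data: 20 runs per model on the same prompt.
SAMPLE_SCORES = {
    "brilliant-but-flaky": [10] * 14 + [3] * 6,  # great 70% of the time
    "steady": [8] * 19 + [5],                    # solid 95% of the time
}

if __name__ == "__main__":
    summary = consistency_report(SAMPLE_SCORES)
    for model, stats in summary.items():
        print(model, stats)
    print("Most consistent:", most_consistent(summary))
```

In this sample the flaky model has the higher peak and even a slightly higher average, yet it passes only 70% of runs against 95% for the steady one. That is why pass rate deserves its own column next to peak performance.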

How to Build a Proper AI Comparison Workflow in 2026

Here’s a practical system for anyone using platforms to compare multiple AI models side by side professionally.

Step 1 — Define your core use cases. Before you open any comparison platform, list the five tasks you use AI for most. These are your test categories: copywriting, coding, research, summarization, ideation, etc.

Step 2 — Build a prompt battery. For each use case, create three test prompts. Save them in Aizolo’s Prompt Manager. This becomes your reusable comparison kit.

Step 3 — Run simultaneous comparisons on Aizolo. Use Aizolo’s side-by-side comparison feature to run each prompt through multiple models at once. Score the outputs on accuracy, tone, structure, and usefulness for your specific context.

Step 4 — Assign models to tasks. After running your prompt battery, you’ll have a clear map: Claude for this, GPT for that, Gemini for the other. Now you’re not guessing. You’re routing.

Step 5 — Revisit quarterly. The AI landscape updates constantly. What was true in January might not be true in April. Build a quarterly review into your calendar where you re-run your prompt battery on platforms to compare multiple AI models side by side and update your routing decisions.
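
Steps 3 and 4 can be sketched in a few lines of Python. This is one possible way to do it, not a prescribed method: the model names and scores are placeholder data, and each criterion is weighted equally, which you may well want to change. It totals the four criteria from Step 3 and maps each task to its top-scoring model.

```python
from typing import Dict

CRITERIA = ("accuracy", "tone", "structure", "usefulness")

# task -> model -> criterion -> score (placeholder numbers on a 0 to 10 scale)
ScoreBook = Dict[str, Dict[str, Dict[str, float]]]


def total_score(card: Dict[str, float]) -> float:
    """Sum the four criteria with equal weight."""
    return sum(card[criterion] for criterion in CRITERIA)


def build_routing(scores: ScoreBook) -> Dict[str, str]:
    """Map each task to the model with the highest total score."""
    routing = {}
    for task, by_model in scores.items():
        routing[task] = max(by_model, key=lambda model: total_score(by_model[model]))
    return routing


SAMPLE_SCORES: ScoreBook = {
    "copywriting": {
        "model-a": {"accuracy": 8, "tone": 9, "structure": 7, "usefulness": 8},
        "model-b": {"accuracy": 7, "tone": 6, "structure": 9, "usefulness": 8},
    },
    "coding": {
        "model-a": {"accuracy": 6, "tone": 7, "structure": 6, "usefulness": 6},
        "model-b": {"accuracy": 9, "tone": 7, "structure": 9, "usefulness": 9},
    },
}

if __name__ == "__main__":
    for task, model in build_routing(SAMPLE_SCORES).items():
        print(f"{task}: route to {model}")
```

Keep the score book in a file and rerun it at each quarterly review. If a different model takes the top spot for a task, your routing table updates itself.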

This is the system that separates professionals who get compounding returns from AI from those who are perpetually confused by it.

Why Aizolo Is the Smartest Choice for Platforms to Compare Multiple AI Models Side by Side

Let’s bring this back to where it matters most: your actual workflow.

The best platforms to compare multiple AI models side by side in 2026 need to do three things simultaneously: give you access to all the models worth comparing, let you see their outputs genuinely side by side in real time, and do all of this without costing you more than a single individual subscription.

Aizolo is the platform that checks all three boxes — and then some.

Trusted by over 5,000 AI enthusiasts and professionals, Aizolo has been built from the ground up as a proper AI comparison platform, not a chat app with a comparison feature bolted on. It’s the difference between a tool that was designed for how professionals actually work versus a tool that was designed for something else and adapted.

For $9.90/month, you get:

- Simultaneous access to GPT-5, Claude, Gemini, Grok, Perplexity, and more

- Real-time side-by-side comparison in one unified workspace

- 3,000,000 tokens per month — enough for serious professional use

- AI Image, Video, and Audio generation included

- Smart Prompt Manager and AI Memory for workflow continuity

- Custom API Key support for developer and builder use cases

- New models added every week

That’s the complete stack for comparing multiple AI models side by side, at less than a tenth of what you’d pay to assemble it from separate subscriptions.

Conclusion: Stop Guessing. Start Comparing.

Remember Vikram from the opening story? He eventually found Aizolo. Now he runs every client brief through multiple models simultaneously before writing a single word. His revision cycles have dropped. His clients get better work faster. And he never misses a deadline because of indecisive model-switching.

That’s what platforms to compare multiple AI models side by side actually deliver when they work properly — not just a faster way to pick an AI, but a fundamentally better relationship with AI altogether.

In 2026, the professionals who win are not the ones with the most expensive AI subscription. They’re the ones with the best system for knowing which AI to use, when, and for what.

And the foundation of that system is a platform to compare multiple AI models side by side: not manually, not chaotically, but in one place, in real time, with clarity.

Aizolo is that platform. It’s free to start, takes no setup, and could pay back its $9.90/month in the first afternoon you use it.

Start comparing AI models on Aizolo for free →
