
The $110 Question Nobody Asks Before Subscribing

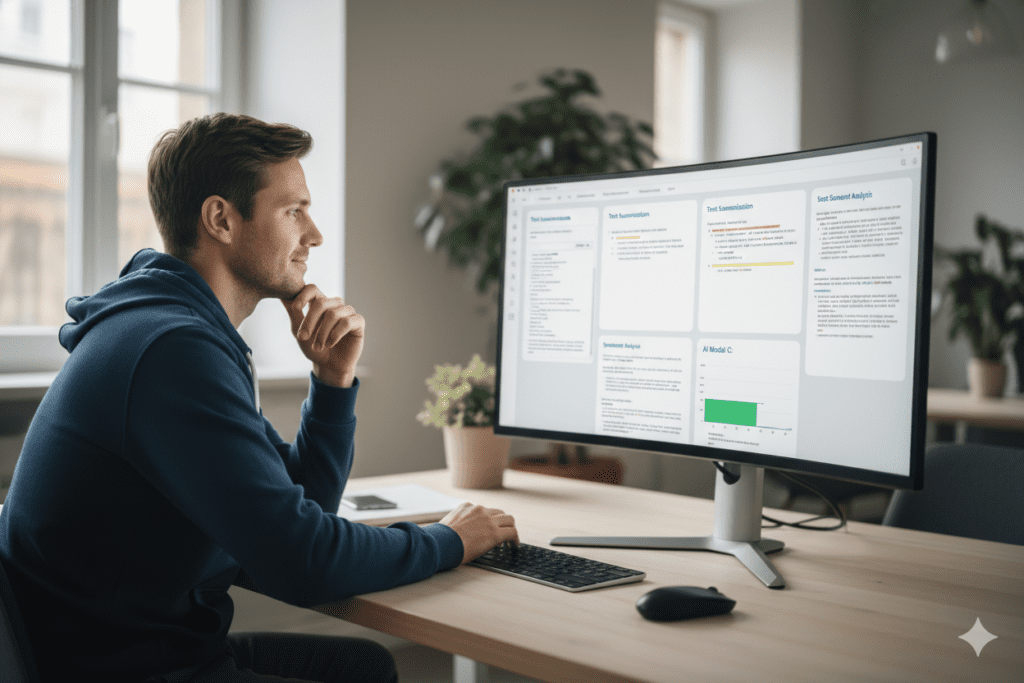

Picture this: It’s a Sunday night in Bengaluru. Riya, a 28-year-old freelance content strategist, is staring at her laptop screen. She’s subscribed to ChatGPT Plus, Claude Pro, and Gemini Advanced (three separate tabs, three separate monthly charges), and she’s still not sure which one actually wrote the better campaign copy for her client.

She pastes the same brief into all three, gets three different outputs, and spends 40 minutes reading and re-reading them, toggling between tabs, losing her train of thought with each switch. Eventually, she picks one — not because it was clearly better, but because she was tired.

Sound familiar?

This is the hidden cost of skipping a proper comparison of AI models. Not just the money (although $110+ a month adds up fast), but the cognitive cost: the wasted time, the second-guessing, the missed clarity that a structured comparison would have given her in seconds.

In 2026, with GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, Grok 4, and Perplexity Sonar Pro all competing at the frontier, the benefits of comparing AI models have never been more real — or more necessary. And yet, most professionals, founders, developers, and students are still skipping this step entirely.

This post is going to change that. Let’s dig into what the benefits of comparing AI models actually look like in practice — and how Aizolo makes the process smarter, faster, and dramatically more affordable.

Why No Single AI Model Wins Everything

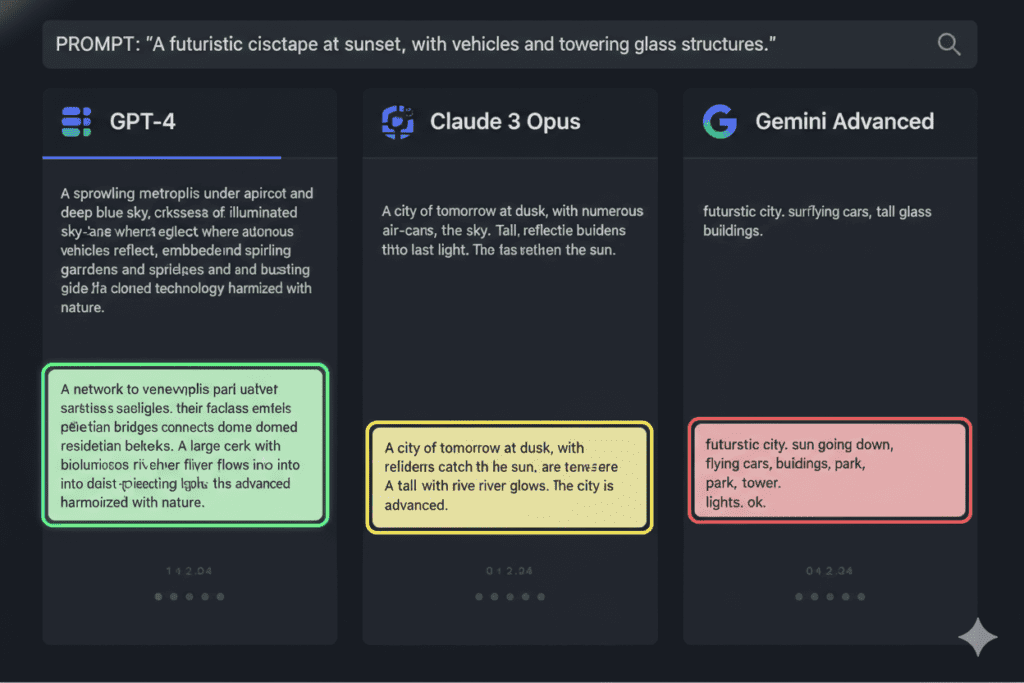

Here’s the honest truth that most AI marketing won’t tell you: no single model is the best at everything.

As Pluralsight’s 2026 AI Models Report puts it, the AI landscape today looks less like a marathon and more like the Olympics — different models win different events. GPT-5.4 is the strongest all-rounder. Claude Opus 4.6 dominates long-form writing and nuanced reasoning. Gemini 3.1 Pro leads on multimodal tasks and benchmark scores. Grok 4 has real-time internet access. Perplexity is built for research workflows.

And according to IQ-style benchmark data tracked by TrackingAI as of April 2026, the gap between the top models is razor-thin — just a few points separate Grok 4.20 Expert, GPT-5.4 Pro, and Gemini 3.1 Pro at the very top of the leaderboard.

That compression at the top means one thing: context decides the winner. For your specific task — your specific use case, budget, and workflow — a different model will be the right choice. And you can only discover that through comparison.

This is exactly why understanding the benefits of comparing AI models matters so much right now.

7 Real-World Benefits of Comparing AI Models

1. You Find the Right Tool for Every Task — Not Just the Most Popular One

The most popular model isn’t always the most useful model for your job.

A developer debugging a legacy Python codebase will get different results from Claude Opus than from GPT-5.4. A marketer writing ad copy for a fast-fashion brand will get different tones from Gemini than from Perplexity. A student summarizing a 50-page research paper might find one model far more accurate than another.

One of the core benefits of comparing AI models is that it removes the assumption that one tool fits all situations. When you run the same prompt through multiple models and compare the outputs, patterns emerge quickly. You start to notice that Model A is better at structured logic, Model B writes more naturally for a human audience, and Model C is stronger at technical accuracy.

This kind of insight — earned through real comparison — builds a mental “model toolkit” that makes every future AI interaction more intentional and more productive.

2. You Stop Paying for Subscriptions You Don’t Fully Use

One of the most overlooked benefits of comparing AI models is financial clarity.

Most AI power users today are subscribed to multiple platforms. ChatGPT Plus at $20/month. Claude Pro at $20/month. Gemini Advanced at $20/month. Grok Premium at $30/month. That’s $90 a month for those four alone, and $110 or more once a fifth tool joins the stack: over $1,300 a year, for tools that often overlap in capability.
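To make the arithmetic explicit, here is a small Python sketch that totals the subscription prices quoted in this post. The four listed prices come to $90 a month; reaching the $110 figure takes one more $20 tool, which the sketch adds as a hypothetical placeholder. Prices change, so treat these numbers as illustrative.

```python
# Monthly prices as quoted in this post (USD); check current pricing before relying on them.
MONTHLY_PRICES = {
    "ChatGPT Plus": 20,
    "Claude Pro": 20,
    "Gemini Advanced": 20,
    "Grok Premium": 30,
}


def monthly_total(prices: dict[str, float]) -> float:
    """Sum the monthly price of every subscription in the stack."""
    return sum(prices.values())


def annual_total(prices: dict[str, float]) -> float:
    """Annualize the monthly total."""
    return monthly_total(prices) * 12


if __name__ == "__main__":
    # Hypothetical fifth $20/month tool, added only to show where $110 comes from.
    five_tools = {**MONTHLY_PRICES, "Fifth tool (hypothetical)": 20}
    print(f"Four tools: ${monthly_total(MONTHLY_PRICES)}/month, ${annual_total(MONTHLY_PRICES)}/year")
    print(f"Five tools: ${monthly_total(five_tools)}/month, ${annual_total(five_tools)}/year")
```

Running it prints $90/month ($1080/year) for the four listed tools and $110/month ($1320/year) with the placeholder fifth tool.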

When you actually compare these models side-by-side on your real tasks, you often discover that 80% of your workflow can be handled well by one or two models, and that the others you’re paying for rarely outperform them on the things you actually need.

Comparison breeds efficiency. It turns subscription sprawl into intentional tool selection.

This is one of the reasons Aizolo was built — to make this kind of structured, side-by-side comparison available for a single subscription at $9.90/month instead of $110+. More on that shortly.

3. You Catch Hallucinations and Inaccuracies Before They Cost You

AI models hallucinate. All of them do — some more than others, and some more on specific topics than others.

One of the most practical benefits of comparing AI models is a built-in accuracy check. When two or three models agree on a fact, you can be more confident it is correct, though not certain: models trained on overlapping data can repeat the same mistake. When they disagree, or when one model produces a confident but slightly different answer, that divergence is a signal to verify.
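For short factual answers (a date, a number, a name), this agreement check can be sketched as a simple vote. The Python below is a minimal illustration under that assumption; free-form prose needs a human reader or a proper similarity measure, and the model names and answers are hypothetical.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase, trim, and drop a trailing period so trivially different phrasings match."""
    return " ".join(answer.lower().strip().rstrip(".").split())


def agreement_check(answers: dict[str, str]) -> tuple[str, float, list[str]]:
    """Return the most common normalized answer, the share of models giving it,
    and the models that diverge from it (the ones worth verifying by hand)."""
    if not answers:
        raise ValueError("need at least one answer")
    normalized = {model: normalize(text) for model, text in answers.items()}
    top_answer, top_count = Counter(normalized.values()).most_common(1)[0]
    share = top_count / len(normalized)
    dissenters = [m for m, a in normalized.items() if a != top_answer]
    return top_answer, share, dissenters


if __name__ == "__main__":
    # Hypothetical outputs from three models for the same factual question.
    answers = {"model_a": "1969", "model_b": "1969.", "model_c": "1968"}
    answer, share, dissenters = agreement_check(answers)
    print(answer, round(share, 2), dissenters)  # 1969 0.67 ['model_c']
```

A majority only raises confidence; the dissenting model is the prompt to go check a primary source, not proof that it is the one in error.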

This is especially important for:

- Developers writing code where a subtle error can break production

- Founders using AI to generate market research or competitive analysis

- Students using AI to assist with research papers

- Marketers relying on AI for data-backed claims in content

Comparing model outputs before using them is like getting a second opinion in medicine. It doesn’t mean one model is “wrong”; it means you’ve done due diligence before committing to an output that has real consequences.

4. You Accelerate Your Creative and Technical Workflows

Speed is one of the most underrated benefits of comparing AI models — not speed of the models themselves, but the speed at which you arrive at a great output.

Without comparison, the process looks like this: prompt → mediocre output → refine prompt → slightly better output → refine again → good output. Three to five iterations. Twenty minutes lost.

With structured comparison, the process looks like this: prompt → three simultaneous outputs → pick the best elements → one iteration to refine. Five minutes. Done.
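The "three simultaneous outputs" step is essentially a concurrent fan-out. Here is a minimal Python sketch using asyncio; the model calls are stubs with made-up names and latencies (assumptions standing in for whatever provider SDK you actually use). Because the calls run concurrently, the total wait is roughly the slowest model's latency (about 0.3 s here) rather than the sum of all three.

```python
import asyncio


async def fake_model(name: str, prompt: str, delay: float) -> str:
    """Stand-in for a real model call; replace with the provider SDK you actually use."""
    await asyncio.sleep(delay)  # simulate network latency
    return f"[{name}] response to: {prompt}"


async def fan_out(prompt: str) -> dict[str, str]:
    """Send one prompt to several models at once and collect replies keyed by model name."""
    models = {"model_a": 0.3, "model_b": 0.1, "model_c": 0.2}  # hypothetical names and latencies
    # gather() returns results in the order the coroutines were passed in.
    replies = await asyncio.gather(
        *(fake_model(name, prompt, delay) for name, delay in models.items())
    )
    return dict(zip(models, replies))


if __name__ == "__main__":
    results = asyncio.run(fan_out("Write a tagline for a budgeting app"))
    for model, reply in results.items():
        print(model, "->", reply)
```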

Developers building with the Faros AI model comparison framework have noted that teams getting the highest value in 2026 are the ones “treating models like a toolbox” — matching the right model to each task rather than defaulting to one. The teams that compare models are consistently faster and produce higher-quality output.

5. You Make Smarter API and Integration Decisions

For SaaS builders, developers, and startup founders, this benefit is perhaps the most financially significant of all.

Choosing which AI model to integrate into your product is a decision with long-term consequences. The wrong choice can mean:

- Higher API costs than projected

- Worse performance for your users

- A painful migration six months later

The benefits of comparing AI models at the evaluation stage — before you build — are enormous. When you run your actual use-case prompts through GPT-5.4, Claude Sonnet 4.6, and Gemini 3.1 Pro side-by-side, you get real data on response quality, tone consistency, and output structure. That data is worth far more than any benchmark chart.
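One way to turn "real data on response quality, tone consistency, and output structure" into a decision is a simple weighted rubric averaged over your test prompts. The Python sketch below assumes a human reviewer rates each output from 1 to 5 on those three criteria; the weights, prompt IDs, and model names are hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical weights; tune them to what matters for your product.
WEIGHTS = {"quality": 0.5, "tone_consistency": 0.3, "structure": 0.2}


@dataclass
class Rating:
    model: str
    prompt_id: str
    scores: dict[str, float]  # each criterion rated 1-5 by a human reviewer


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-criterion ratings into one number using WEIGHTS."""
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)


def rank_models(ratings: list[Rating]) -> list[tuple[str, float]]:
    """Average each model's weighted score across all test prompts, best first."""
    totals: dict[str, list[float]] = {}
    for r in ratings:
        totals.setdefault(r.model, []).append(weighted_score(r.scores))
    averages = {m: sum(v) / len(v) for m, v in totals.items()}
    return sorted(averages.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    ratings = [
        Rating("model_a", "p1", {"quality": 4, "tone_consistency": 5, "structure": 3}),
        Rating("model_a", "p2", {"quality": 5, "tone_consistency": 4, "structure": 4}),
        Rating("model_b", "p1", {"quality": 5, "tone_consistency": 3, "structure": 5}),
        Rating("model_b", "p2", {"quality": 3, "tone_consistency": 4, "structure": 5}),
    ]
    for model, score in rank_models(ratings):
        print(model, round(score, 2))
```

Averaging over several prompts matters: model_b wins on the first prompt but loses overall, which a single-prompt test would have hidden.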

As noted in LogRocket’s March 2026 AI developer power rankings, side-by-side model comparison with real prompts is increasingly the gold standard for technical teams evaluating AI integrations. The developers who skip this step often regret it when they discover edge cases in production.

6. You Build Genuine AI Literacy — Not Just Prompt Fluency

There’s a difference between being good at using one AI model and actually understanding AI.

Prompt fluency — knowing how to get good results from ChatGPT — is useful. But genuine AI literacy means understanding why different models behave differently, what their training priorities were, where their strengths come from, and how to map those strengths to your needs.

One of the deeper benefits of comparing AI models is the education that comes from the comparison itself.

When you run the same creative brief through Claude Opus 4.6 and GPT-5.4 and notice that Claude Opus 4.6 produces more nuanced, character-driven prose while GPT-5.4 produces more structured, on-brief output, you’ve just learned something real about both models.

That knowledge compounds. Over weeks and months of regular comparison, you develop an intuition for AI model behavior that makes you significantly more effective than someone who has only ever used one tool.

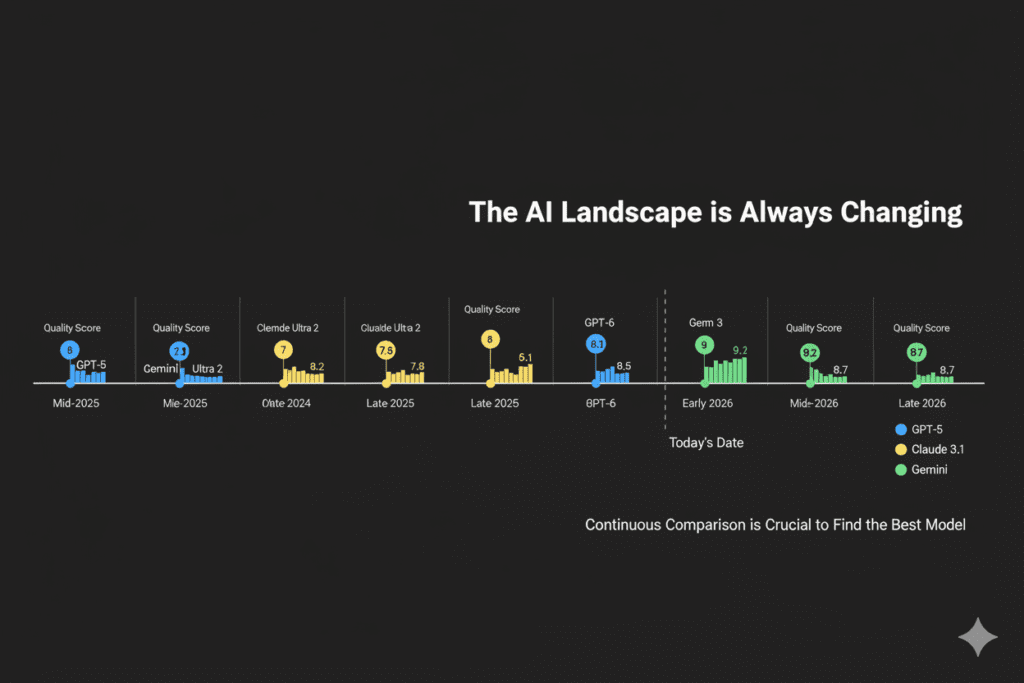

7. You Stay Ahead as the Landscape Evolves

The AI model landscape in 2026 changes monthly. New versions drop. Rankings shift. A model that was mediocre at coding in January may have overtaken the leader by March.

One of the lasting benefits of comparing AI models is that the habit of comparison keeps you current. Instead of discovering six months later that you’ve been using a tool that was quietly surpassed, you notice changes in quality in real time because you’re regularly comparing outputs.

This is particularly important for:

- Founders whose AI stack is a competitive advantage

- Developers maintaining production integrations

- Marketers who need the best content quality at the lowest cost

- Freelancers whose reputation depends on delivering excellent work

Staying informed through regular comparison is a professional discipline, not a luxury.

Why Most People Struggle to Compare AI Models Effectively

Understanding the benefits of comparing AI models intellectually is one thing. Actually doing it consistently is another.

Here’s why most people fail at this:

Multiple subscriptions are expensive. At $110/month for a full AI comparison stack, most individuals and small teams simply can’t afford to run all the top models simultaneously.

Manual tab-switching is cognitively exhausting. When you have to open four browser tabs, paste the same prompt four times, and then read four outputs while your memory of each one fades, the comparison process becomes more taxing than just picking a model and going.

There’s no unified interface. Each AI platform has its own UI, formatting, and quirks. Comparing responses across platforms means constantly adjusting to a new context, which reduces the clarity of the comparison.

There’s no structured output format. A true side-by-side comparison requires seeing outputs in a normalized format: same font, same layout, aligned for easy scanning. Manual comparison doesn’t offer that.

The result? Most people don’t compare. They pick a model based on habit, popularity, or whichever one they subscribed to first — and they miss all the benefits of comparing AI models that could have been theirs.

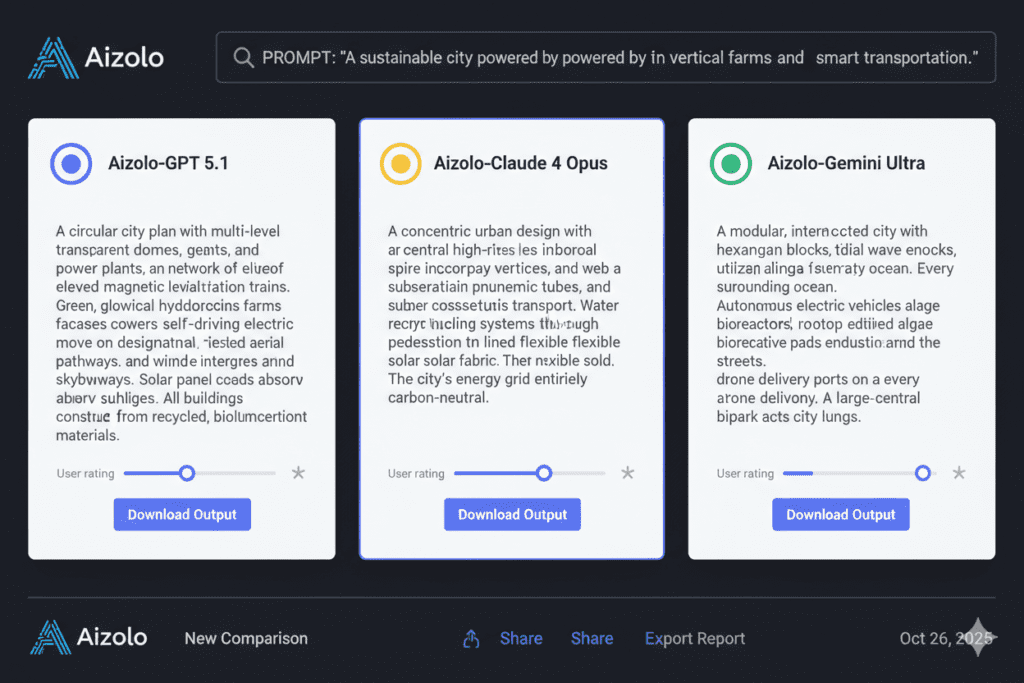

How Aizolo Makes AI Model Comparison Effortless

This is exactly the problem Aizolo was built to solve.

Aizolo is an all-in-one AI subscription platform that gives you access to GPT-5.4, Claude Opus 4.6, Gemini 3.1 Pro, Grok 4, Perplexity Sonar Pro, and more — not as separate subscriptions, but through a single unified dashboard for just $9.90/month.

Here’s what that means in practice:

Side-by-Side Comparison in Seconds

Instead of tab-switching, Aizolo lets you send the same prompt to multiple models simultaneously and view the responses side-by-side in one interface. The friction of comparison disappears. What used to take 20 minutes of manual effort takes 60 seconds.

This alone is worth the subscription — because it transforms the benefits of comparing AI models from theoretical to practical.

Access to All Premium Models for $9.90/Month

Aizolo replaces $110+ in individual subscriptions with a single $9.90/month plan. You get access to every major frontier model without managing multiple billing relationships, remembering multiple passwords, or deciding which subscription to cut.

This dramatically lowers the barrier to experiencing the benefits of comparing AI models for freelancers, students, early-stage founders, and anyone else working with a tight budget.

Smart Prompt Manager to Standardize Comparisons

Aizolo’s Prompt Manager lets you save and reuse your best prompts. For comparison purposes, this means you can run the same standardized prompt across models over time — tracking how outputs evolve as models update. It’s systematic AI comparison, not ad hoc.

AI Memory for Personalized Context

Aizolo’s AI Memory feature means the platform remembers your preferences, past conversations, and context across sessions. The more you use it, the more personalized and accurate the comparison context becomes — giving you results tailored to your specific workflow.

Custom API Keys for Power Users

If you already have API keys for specific models, Aizolo supports encrypted custom API key integration for unlimited usage. SaaS builders and developers can test production-level prompts through the same comparison interface at no extra cost on the Aizolo side; the usage itself is billed to your own API keys.

Real-World Use Cases: Who Benefits Most from Comparing AI Models?

Founders Building AI Products

You’re evaluating which model to integrate into your SaaS before committing to an API contract. Aizolo lets you run your core product prompts through five models simultaneously and score quality, consistency, and tone before writing a single line of integration code.

Read more expert guides on Aizolo about AI model cost vs performance comparison to make smarter product decisions.

Developers Debugging and Building

You’re writing a complex function and want to know whether Claude Opus 4.6 or GPT-5.4 produces more reliable, production-safe code for your specific stack. Side-by-side comparison tells you in one session what months of single-model experience might not.

Marketers Creating High-Converting Content

You’re writing a landing page, an email campaign, or an ad sequence. Different models have different strengths in persuasive writing — GPT-5.4 tends toward structure, Claude toward nuance and narrative. Comparing outputs gives you the raw material for a hybrid draft that outperforms either model alone.

Students and Researchers

You’re summarizing academic papers, generating literature reviews, or drafting essays. Running the same source material through multiple models and checking for factual consistency dramatically reduces the risk of hallucination-based errors making it into your final work.

Freelancers Delivering Client Work

Your reputation depends on the quality of what you deliver. Running client briefs through multiple models and selecting the best output — or combining the strongest elements — elevates your work above what any single model would have produced.

SaaS Builders Optimizing for Cost

You’re weighing whether Claude Sonnet 4.6 at a lower cost per token delivers 95% of the quality of Claude Opus 4.6. Side-by-side comparison on your actual use cases gives you data-backed cost optimization — potentially saving thousands of dollars a month in API costs at scale.
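The trade-off above reduces to two numbers: what each model costs at your volume, and how its quality score compares with the larger model's. Here is a Python sketch; the per-million-token prices, token volumes, and quality scores are all made-up placeholders (not any provider's actual rates), and the 95% threshold mirrors the example in the text.

```python
def monthly_cost(tokens_in: int, tokens_out: int, price_in: float, price_out: float) -> float:
    """Monthly API cost, with prices expressed in USD per million tokens."""
    return tokens_in / 1_000_000 * price_in + tokens_out / 1_000_000 * price_out


def cheaper_model_ok(quality_small: float, quality_large: float, threshold: float = 0.95) -> bool:
    """True if the cheaper model reaches at least `threshold` of the larger model's quality score."""
    return quality_small >= threshold * quality_large


if __name__ == "__main__":
    # All numbers are placeholders: substitute current published prices
    # and the quality scores from your own side-by-side evaluation.
    tokens_in, tokens_out = 500_000_000, 100_000_000  # hypothetical monthly volume
    large = monthly_cost(tokens_in, tokens_out, price_in=10.0, price_out=50.0)
    small = monthly_cost(tokens_in, tokens_out, price_in=2.0, price_out=10.0)
    ok = cheaper_model_ok(quality_small=4.3, quality_large=4.5)
    print(f"large: ${large:,.0f}/mo, small: ${small:,.0f}/mo, small is good enough: {ok}")
```

With these placeholders the larger model costs $10,000 a month against $2,000 for the smaller one, and a 4.3 vs 4.5 quality score clears the 95% bar; your real prices and scores decide the actual answer.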

Explore more insights on Aizolo about the most popular AI model comparison platforms in 2026 to see how professionals are building smarter AI workflows.

The Comparison Habit: How to Build It Into Your Workflow

Understanding the benefits of comparing AI models is step one. Building it as a habit is step two.

Here’s a simple framework:

For creative tasks: Run your brief through at least two models. Read both outputs without editing. Identify the strongest 3–4 sentences or ideas in each. Combine and refine.

For technical tasks: Run your prompt through two models. Check for logical consistency and test any code snippets. Flag any divergence between outputs as a signal for manual verification.
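When both models return code, one mechanical way to flag divergence is differential testing: run the two candidate functions on the same generated inputs and stop at the first disagreement. A minimal Python sketch, with two hypothetical median functions standing in for model outputs (the second carries a deliberate even-length bug):

```python
import random
from typing import Callable, Optional


# Two hypothetical implementations of the same function, as two models might return them.
def median_from_model_a(xs: list[float]) -> float:
    s = sorted(xs)
    mid = len(s) // 2
    return s[mid] if len(s) % 2 else (s[mid - 1] + s[mid]) / 2


def median_from_model_b(xs: list[float]) -> float:
    s = sorted(xs)
    return s[len(s) // 2]  # subtle bug: ignores the even-length case


def find_divergence(
    f: Callable[[list], float], g: Callable[[list], float], trials: int = 1000, seed: int = 0
) -> Optional[list]:
    """Run both implementations on the same random inputs; return the first input where they disagree."""
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-100, 100) for _ in range(rng.randint(1, 8))]
        if f(xs) != g(xs):
            return xs
    return None


if __name__ == "__main__":
    counterexample = find_divergence(median_from_model_a, median_from_model_b)
    print("Divergence on input:", counterexample)
```

A divergence tells you the outputs differ, not which one is right; the counterexample is where manual verification starts.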

For research tasks: Run your query through three models. Cross-reference any specific facts or statistics that appear in only one output before using them.
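The "appears in only one output" check is easy to automate for numeric claims. The Python sketch below assumes figures are written as digits (it will miss "twelve percent" and cannot tell which claim a number belongs to); the model names and outputs are hypothetical.

```python
import re
from collections import defaultdict

# Integers (optionally with thousands separators), decimals, and percentages.
NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?%?")


def extract_figures(text: str) -> set[str]:
    """Pull numeric claims out of a response, stripping thousands separators."""
    return {m.group().replace(",", "") for m in NUMBER.finditer(text)}


def single_source_figures(outputs: dict[str, str]) -> dict[str, list[str]]:
    """Map each model to the figures that appear in its output and no other."""
    seen: dict[str, set[str]] = defaultdict(set)
    for model, text in outputs.items():
        for fig in extract_figures(text):
            seen[fig].add(model)
    flagged: dict[str, list[str]] = defaultdict(list)
    for fig, models in seen.items():
        if len(models) == 1:
            flagged[next(iter(models))].append(fig)
    return {m: sorted(figs) for m, figs in flagged.items()}


if __name__ == "__main__":
    outputs = {
        "model_a": "The market grew 12% in 2025 to $4.1 billion.",
        "model_b": "Growth was 12% in 2025, reaching about $4.1 billion.",
        "model_c": "The market grew 18% in 2025, led by 3 vendors.",
    }
    print(single_source_figures(outputs))  # {'model_c': ['18%', '3']}
```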

For ongoing model evaluation: Save one standard benchmark prompt in Aizolo’s Prompt Manager. Run it monthly across models to track quality changes over time.
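Once the same saved prompt has been scored each month, spotting the moment one model overtakes another is a small aggregation. A Python sketch, assuming you record one 1 to 5 score per model per month yourself; the log format, model names, and scores are hypothetical, not an Aizolo export format.

```python
from datetime import date

# Hypothetical log: (month, model, your 1-5 score for the same saved prompt)
history = [
    (date(2026, 1, 1), "model_a", 4.0),
    (date(2026, 1, 1), "model_b", 3.5),
    (date(2026, 2, 1), "model_a", 4.0),
    (date(2026, 2, 1), "model_b", 4.5),
]


def month_over_month(history: list[tuple[date, str, float]]) -> dict[str, float]:
    """For each model, return the change between its two most recent scores."""
    by_model: dict[str, list[tuple[date, float]]] = {}
    for month, model, score in history:
        by_model.setdefault(model, []).append((month, score))
    changes = {}
    for model, entries in by_model.items():
        entries.sort()  # chronological order
        if len(entries) >= 2:
            changes[model] = entries[-1][1] - entries[-2][1]
    return changes


def leader_by_month(history: list[tuple[date, str, float]]) -> dict[date, str]:
    """Return the top-scoring model for each month, in chronological order."""
    best: dict[date, tuple[str, float]] = {}
    for month, model, score in history:
        if month not in best or score > best[month][1]:
            best[month] = (model, score)
    return {m: best[m][0] for m in sorted(best)}


if __name__ == "__main__":
    print(month_over_month(history))  # {'model_a': 0.0, 'model_b': 1.0}
    print(leader_by_month(history))
```

In this made-up log, model_b leads in February after trailing in January: exactly the kind of shift a monthly check surfaces.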

This is what it looks like to treat AI comparison not as a one-time exercise, but as a professional discipline — one that compounds in value the longer you practice it.

Follow Aizolo for practical tech and startup insights on how to build this discipline into your daily workflow.

The Bottom Line: Comparison Is the Skill Nobody Teaches You

The AI world tells you which model is best. It rarely tells you that “best” is always context-dependent, always temporary, and always a question that you can only answer for yourself by actually comparing.

The benefits of comparing AI models aren’t abstract. They’re financial (lower subscription costs, smarter API decisions). They’re practical (better outputs, faster workflows). They’re professional (stronger AI literacy, better client work). And they’re strategic (staying ahead as the landscape evolves monthly).

The problem has never been a lack of capable AI models. The problem has been the friction of comparison — the cost, the tab-switching, the lack of a unified interface.

Aizolo exists to remove that friction entirely. One dashboard. All the top models. Side-by-side comparison. $9.90/month.

Start building smarter with Aizolo — because the benefits of comparing AI models are only available to people who actually compare them.

Suggested Internal Links

- AI Model Cost vs Performance Comparison 2026

- Most Popular AI Model Comparison Platforms 2026

- Side by Side AI Comparison in 2026

- Claude AI Strengths Compared to Other Models 2026

- AI Model Benchmarks Comparison 2026