The $3,000 API Bill Nobody Saw Coming

It was a Thursday evening in Bengaluru when Rohan, a 27-year-old SaaS developer, refreshed his cloud billing dashboard and felt his stomach drop.

Three thousand dollars. In a single month.

He had been building a document-processing pipeline for a client: pulling in long legal PDFs, summarising them, and routing key findings to a dashboard. He had picked a popular closed-model API because it was “the obvious choice.” He had never really compared it against Meta’s model and API options for 2026. He had just… used what everyone else was using.

The problem? His pipeline was hammering the API with millions of tokens daily, and the per-token cost had quietly scaled his bill into territory that wiped out his entire project margin.

If Rohan had spent two hours understanding how Meta’s AI models and their API options compare in 2026, specifically the Llama 4 family, he would likely have built the same pipeline for under $300.

This post is that two-hour study, condensed into one clear guide.

Whether you are a developer choosing infrastructure, a founder building a product, a marketer automating content workflows, or a student experimenting with AI, understanding how Meta’s AI models differ at the API level in 2026 is no longer optional. It is the difference between building profitably and burning budget.

At Aizolo, we work with thousands of AI enthusiasts who use multiple models every day: comparing them side by side, running them through real tasks, and making real decisions about which one to pay for. What follows is the honest, practical guide to Meta’s models and APIs that generic benchmarking articles never give you.

What Is the Meta AI Model Family in 2026?

Before diving into the specifics of each model and its API, let us set the foundation.

Meta’s AI model ecosystem sits in a unique position. Unlike OpenAI, Anthropic, or Google — which keep their weights proprietary — Meta has historically committed to open-weight releases. The Llama family is the flagship expression of that strategy.

In 2026, the Llama 4 generation is the headline act. It introduces an architecture that is new to the Llama line, Mixture of Experts (MoE), and it changes how you think about cost, context, and capability when choosing a model.

The three models currently in the Llama 4 family are:

- Llama 4 Scout — the efficiency specialist

- Llama 4 Maverick — the performance flagship

- Llama 4 Behemoth — the research giant (still in training as of April 2026)

And then there is the recently launched Meta Muse Spark — a closed-weight, proprietary model that marks a dramatic departure from Meta’s open-source identity.

Making a sound choice among Meta’s models in 2026 means understanding all four, and knowing when each one earns its place in your stack.

The MoE Architecture: Why It Changes Everything

Any serious comparison of Meta’s models needs to explain MoE, because without it, the numbers make no sense.

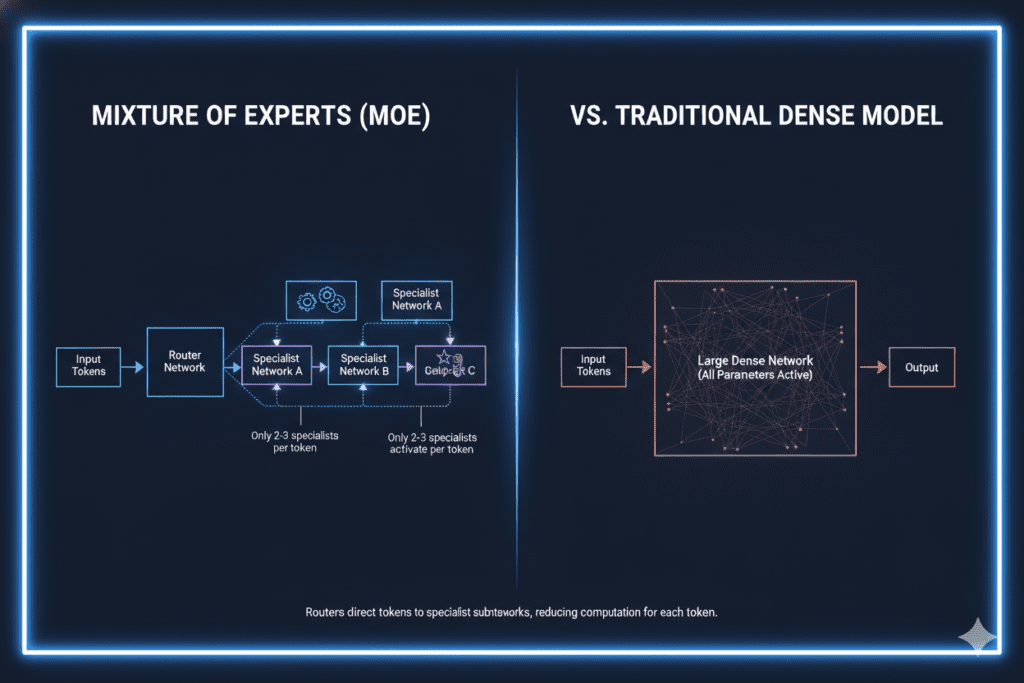

Traditional “dense” language models activate all their parameters for every single token. A 70-billion-parameter dense model runs all 70 billion parameters for every token it generates. That is expensive and slow at scale.

MoE architecture works differently. The model contains a large pool of specialist sub-networks called “experts.” A router examines each incoming token and activates only the most relevant experts — a small fraction of the total pool. This means a 400-billion-parameter MoE model might only use 17 billion active parameters per token.

The result: frontier-class reasoning quality at near-small-model inference cost.

This is the engine behind Llama 4, and it is why comparing Meta’s models is so interesting right now. You can potentially get GPT-level quality at a fraction of the price, provided you pick the right model for the right task.
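The routing idea described above can be sketched in a few lines of Python. This is a toy illustration, not Meta’s implementation: the expert functions, the router scores, and the `top_k` value are all invented for the example, and this article does not specify how many experts Llama 4 activates per token.

```python
import math
import random

def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_scores, top_k=1):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    norm = sum(probs[i] for i in chosen)
    # Renormalise so the weights of the selected experts sum to 1.
    return [(i, probs[i] / norm) for i in chosen]

def moe_layer(token, experts, router_scores, top_k=1):
    """Run only the selected experts and blend their outputs by router weight."""
    return sum(w * experts[i](token) for i, w in route_token(router_scores, top_k))

# Toy setup (hypothetical): 16 "experts", each just scales its input.
experts = [lambda x, k=k: x * (k + 1) for k in range(16)]
random.seed(0)
scores = [random.uniform(-1, 1) for _ in experts]

selected = route_token(scores, top_k=2)
print(f"active experts: {[i for i, _ in selected]} of {len(experts)}")
print(f"layer output: {moe_layer(1.0, experts, scores, top_k=2):.3f}")
```

Only the selected experts are ever called, which is where the compute saving comes from: the other experts sit in memory but do no work for that token.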

Llama 4 Scout: The Long-Memory Efficiency Model

What Scout Is

Scout is the practical workhorse of the Llama 4 family. It is the model you reach for when cost and context length are your primary concerns.

Architecture: 109 billion total parameters, 17 billion active per token, 16 experts in the MoE pool.

Context window: 10 million tokens — the largest of any open-weight model, and larger than any proprietary model available commercially as of April 2026.

Deployment: Scout is optimised to run on a single NVIDIA H100 GPU, which makes it significantly more accessible for self-hosting than Maverick.

What Scout Actually Does Well

Scout shines on:

- Long-document processing — loading entire codebases, multi-year financial reports, or full legal contract libraries in a single inference call

- Retrieval-augmented generation (RAG) — its massive context window reduces chunking complexity dramatically

- Batch classification — high-volume tasks like tagging, categorising, or routing text at scale

- Customer-facing chatbots — where speed and cost efficiency matter more than peak reasoning quality

- Summarisation pipelines — exactly the use case that burned Rohan at the start of this story
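For the long-document cases in this list, a quick pre-flight check is whether the material fits in one call at all. The sketch below assumes the context windows quoted in this article and uses a rough four-characters-per-token heuristic rather than a real tokenizer, so treat the result as an estimate only. The model keys and the `reserve_for_output` default are hypothetical.

```python
import math

# Context windows as quoted in this article, in tokens.
CONTEXT_WINDOWS = {
    "llama-4-scout": 10_000_000,
    "llama-4-maverick": 1_000_000,
}

CHARS_PER_TOKEN = 4  # rough heuristic for English text, NOT a real tokenizer

def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string from its length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def fits_in_context(documents, model, reserve_for_output=4_096):
    """Return (fits, estimated_tokens) for sending all documents in one call."""
    budget = CONTEXT_WINDOWS[model] - reserve_for_output
    needed = sum(estimate_tokens(doc) for doc in documents)
    return needed <= budget, needed

# Hypothetical corpus: roughly 8 million characters of contract text.
corpus = ["x" * 8_000_000]
for model in CONTEXT_WINDOWS:
    ok, needed = fits_in_context(corpus, model)
    print(f"{model}: ~{needed:,} tokens, fits in one call: {ok}")
```

When the check fails for a model, that is the point at which chunking or retrieval becomes necessary for it.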

Scout API Pricing (April 2026)

API costs for Scout vary by provider, but as a reference point, Groq hosts Scout at approximately $0.11 per million input tokens — making it one of the cheapest multimodal-capable models available through any public API. It is worth noting that Meta’s direct Llama API was still on a waitlist as of early 2026, so most developers access Scout through partners like Groq, Together AI, or Fireworks.

Llama 4 Maverick: The Performance Flagship

What Maverick Is

Maverick is where the Llama 4 story gets impressive. It is the model Meta itself uses inside Facebook, Instagram, and WhatsApp for consumer-facing AI interactions.

Architecture: 400 billion total parameters, 17 billion active per token, 128 experts in the MoE pool.

Context window: 1 million tokens — still enormous, and practical for most enterprise use cases.

Multimodal: Native image and text understanding, built into the architecture from pretraining — not retrofitted.

Why 128 Experts Matters

The jump from Scout’s 16 experts to Maverick’s 128 experts is the key architectural difference between the two models. More experts allow more specialisation: each expert can develop deeper domain knowledge, and the router has more specialist networks to choose from for each token.

Maverick was also trained through a process called codistillation from Behemoth — Meta’s 2-trillion-parameter teacher model. This knowledge transfer gives Maverick reasoning quality that outstrips what you would expect from a model with only 17 billion active parameters.
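One way to see what the larger expert pool buys is to compare how much of each model is active for a single token. The sketch below only uses the parameter counts quoted in this article; the dictionary layout and function name are illustrative.

```python
# Parameter counts as quoted in this article (billions), plus expert counts.
MODELS = {
    "Llama 4 Scout": {"total_b": 109, "active_b": 17, "experts": 16},
    "Llama 4 Maverick": {"total_b": 400, "active_b": 17, "experts": 128},
}

def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that run for a single token."""
    return active_b / total_b

for name, spec in MODELS.items():
    share = active_fraction(spec["total_b"], spec["active_b"])
    print(f"{name}: {share:.1%} of parameters active per token "
          f"across {spec['experts']} experts")
```

Both models do about the same amount of work per token (17 billion active parameters), but Maverick draws that work from a pool almost four times larger, so a much smaller share of the model is active at any moment: a little over 4% versus roughly 16% for Scout.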

Maverick’s Benchmark Performance

Independent evaluations as of March 2026 place Maverick’s GPQA Diamond score at 69.8, putting it in the same competitive range as GPT-4o on reasoning benchmarks. On code generation tasks (HumanEval, SWE-bench), Maverick matches or exceeds that baseline, reflecting Meta’s emphasis on training with large proportions of code data.

Where Maverick trails: multi-step stateful coding tasks. The MoE architecture’s expert-routing mechanism is naturally less suited to tracking state across many sequential reasoning steps, which is worth factoring into your decision if you are building a coding copilot.

Maverick API Pricing (April 2026)

Maverick is available through Together AI and other providers at approximately $0.20 per million input tokens and $0.60 per million output tokens. For context: GPT-4o through OpenAI’s API typically costs significantly more per token, making Maverick a compelling alternative for teams processing high volumes.
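The per-token prices above turn into a monthly bill with simple arithmetic. The following is a minimal sketch that assumes the approximate Maverick rates quoted here; the token volumes in the example are hypothetical, and actual provider pricing may differ.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    """API price in US dollars per million tokens."""
    input_per_million: float
    output_per_million: float

# Approximate Maverick rates as quoted in this article.
MAVERICK = Pricing(input_per_million=0.20, output_per_million=0.60)

def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Estimated monthly spend for the given token volumes."""
    input_cost = input_tokens / 1_000_000 * pricing.input_per_million
    output_cost = output_tokens / 1_000_000 * pricing.output_per_million
    return input_cost + output_cost

# Hypothetical workload: 900M input tokens and 100M output tokens per month.
cost = monthly_cost(MAVERICK, 900_000_000, 100_000_000)
print(f"Estimated monthly cost: ${cost:,.2f}")
```

For that workload the estimate works out to about $240 per month: $180 for input and $60 for output. Summarisation pipelines are input-heavy, so the input rate usually dominates the bill.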

The crossover point where self-hosting Maverick becomes cheaper than paying API costs is approximately 1–2 billion tokens per month for most organisations. Below that threshold, managed API providers are almost always the more economical choice.

Llama 4 Behemoth: The Research Giant

Behemoth is the third member of the Llama 4 family and remains in training as of April 2026. It is not yet publicly available, but it is worth including in any forward-looking comparison.

Scale: 2 trillion parameters — making it the largest model Meta has ever trained.

Context window: 256,000 tokens (more constrained than Scout or Maverick despite its size).

Primary use case: Research, large-scale enterprise applications, and as a teacher model for distilling capability into smaller models.

Behemoth’s most immediate impact is indirect: it is the source from which Maverick was codistilled, giving Maverick much of its reasoning capability. When Behemoth eventually becomes available through APIs, it will likely compete directly with frontier proprietary models like GPT-5 and Claude Opus 4.

Meta Muse Spark: The Wild Card

No comparison of Meta’s 2026 models is complete without acknowledging Muse Spark, launched April 8, 2026, because it represents a pivot that caught the AI developer community off guard.

For three years, Meta’s identity in the AI space was synonymous with open-source releases. Llama 1, 2, 3, and 4 all shipped with downloadable weights. Then came Muse Spark: a closed-weight, proprietary model built by Meta Superintelligence Labs, available only through meta.ai with no public weights.

Benchmark position: Muse Spark scores approximately 52 on the Artificial Analysis Intelligence Index — below frontier models like GPT-5 (57) and Gemini 3.1 Pro. It is not yet competitive at the highest tier of reasoning benchmarks.

Access: Consumer-only through meta.ai; no public API as of April 2026.

For developers choosing a model today, Muse Spark is currently more of a signal than a tool. It tells you that Meta is hedging its bets: maintaining the open Llama ecosystem while also building a proprietary track. The long-term implications for the developer community are significant, particularly given reports that internal teams were moving toward closed-source approaches as of late 2025.

The Llama 3.x Legacy: Still Relevant in 2026

Before Llama 4 arrived, the Llama 3 family was the backbone of countless production deployments. A complete picture of Meta’s lineup needs to cover where these models still earn their place.

Llama 3.3 — strong dialogue quality and multilingual support, broadly available across cloud platforms. Still a solid choice for conversational applications where Llama 4’s context advantages are not needed.

Llama 3.2 — added image processing for vision-enabled workflows. Remains relevant for teams that need multimodal capability but are not ready to migrate infrastructure to Llama 4.

Llama 3.1 — includes 405B parameter variants with large context support. The 405B version competed meaningfully with Claude 3 on math benchmarks when it was released. Still valuable for organisations that have already built fine-tuned pipelines on it.

The practical advice: unless you have a specific reason to stay on Llama 3.x (existing fine-tunes, deployment constraints, or team familiarity), the case for starting new projects on Llama 4 Scout or Maverick is strong.

Real-World Use Cases: Who Should Use Which Model

This is where the comparison gets practical. Different people have different problems. Here is how to match the model to the person.

For Developers and Engineers

If you are building a coding assistant or debugging tool, Maverick is the better choice of the two Llama 4 models: its deeper expert pool handles complex reasoning chains better than Scout. The stateful-coding caveat noted earlier still applies.

But if you are building a RAG system that needs to ingest enormous codebases, Scout’s 10-million-token context means you can load hundreds of files in a single call and dramatically simplify your chunking architecture.

For high-volume classification, log analysis, or routing tasks that run thousands of times per day, Scout via Groq is likely your most cost-efficient path.

For Founders and SaaS Builders

For founders, the choice is fundamentally about margin. Maverick at $0.20 per million input tokens versus GPT-4o-class pricing can translate to meaningful cost differences at scale.

A smart tiered approach works well: use Scout for Tier 3 high-volume simple tasks, Maverick for Tier 2 general analysis and documentation, and reserve proprietary frontier models (Claude Opus, GPT-5) for Tier 1 complex reasoning where quality is non-negotiable.
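The tiered approach above amounts to a small lookup from task type to model. The sketch below is one way to express it; the tier-to-model mapping follows the paragraph above, while the task labels, the model identifier strings, and the choice to send unknown tasks to the top tier are assumptions made for illustration.

```python
from enum import Enum

class Tier(Enum):
    COMPLEX_REASONING = 1   # quality is non-negotiable
    GENERAL_ANALYSIS = 2    # general analysis and documentation
    HIGH_VOLUME_SIMPLE = 3  # high-volume simple tasks

# Tier-to-model mapping taken from the paragraph above.
MODEL_FOR_TIER = {
    Tier.COMPLEX_REASONING: "proprietary-frontier",
    Tier.GENERAL_ANALYSIS: "llama-4-maverick",
    Tier.HIGH_VOLUME_SIMPLE: "llama-4-scout",
}

# Hypothetical task labels; a real system would define its own.
TASK_TIERS = {
    "classify": Tier.HIGH_VOLUME_SIMPLE,
    "tag": Tier.HIGH_VOLUME_SIMPLE,
    "route": Tier.HIGH_VOLUME_SIMPLE,
    "analyse": Tier.GENERAL_ANALYSIS,
    "document": Tier.GENERAL_ANALYSIS,
    "plan": Tier.COMPLEX_REASONING,
}

def pick_model(task_type: str) -> str:
    """Map a task label to a model, defaulting unknown tasks to the top tier."""
    tier = TASK_TIERS.get(task_type, Tier.COMPLEX_REASONING)
    return MODEL_FOR_TIER[tier]

for task in ("tag", "document", "plan", "something-new"):
    print(f"{task} -> {pick_model(task)}")
```

Defaulting unknown tasks to the most capable tier trades cost for safety. A cost-sensitive team might prefer the opposite default and escalate only on failure.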

For Marketers and Content Teams

If your workflow involves processing long research documents, competitor content, or large content briefs — Scout’s long context means you can feed the model an entire content strategy document and get a coherent response that accounts for all of it.

For creative generation, multilingual content, or tasks requiring deeper understanding, Maverick’s superior reasoning quality justifies the modest additional cost.

For Students and Independent Learners

The open-weight nature of Llama 4 means you can run Scout locally on accessible hardware (with quantisation), experiment with the architecture, fine-tune it on your own datasets, and build genuine AI experience without paying per-token API costs. This is an advantage that proprietary models simply cannot match.

For Freelancers

Freelancers building client deliverables need to control costs carefully. The recommendation: use Scout via a managed API for document processing and research tasks, Maverick for tasks where reasoning quality directly affects output quality, and compare both side by side on Aizolo before committing to a stack for a client project.

The EU Licensing Issue: What Developers Must Know

One point deserves a clear flag: there is a geographic restriction buried in the Llama 4 Community License that affects European developers specifically.

EU users cannot use Llama 4’s vision features — Scout and Maverick’s multimodal capabilities. Text features remain available in the EU, but if your product serves EU users and uses Llama 4’s image-processing capabilities (for example, letting users upload screenshots for analysis), you would be in technical violation of the licence terms.

This is a real constraint that many model comparisons gloss over. If you have significant EU user exposure and multimodal requirements, you need either a different model or a legal review of your specific deployment.

The Self-Hosting vs API Question

The self-hosting question is directly linked to volume economics.

At 10 million tokens per month: Use a managed API. Fixed infrastructure costs far outweigh the per-token savings.

At 500 million tokens per month: The breakeven point for Scout begins to emerge. Engineering overhead and GPU rental costs start to compete with API pricing.

At 1+ billion tokens per month: Self-hosting Scout (or Maverick with appropriate GPU allocation) becomes economically rational. Additionally, self-hosting brings compliance benefits — sensitive data never leaves your infrastructure.
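The volume thresholds above follow from a break-even calculation: self-hosting carries a fixed monthly cost, while API spend grows with every token. The sketch below shows that arithmetic. The dollar figures are placeholders rather than quotes from any provider, so the break-even point it prints will move as soon as you substitute your own numbers.

```python
from typing import Optional

def breakeven_tokens_per_month(
    fixed_monthly_usd: float,
    api_usd_per_million: float,
    self_host_usd_per_million: float = 0.0,
) -> Optional[float]:
    """Monthly token volume at which self-hosting and API costs are equal.

    Returns None when self-hosting never catches up (no per-token saving).
    """
    saving_per_million = api_usd_per_million - self_host_usd_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly_usd / saving_per_million * 1_000_000

def cheaper_option(
    tokens_per_month: float,
    fixed_monthly_usd: float,
    api_usd_per_million: float,
    self_host_usd_per_million: float = 0.0,
) -> str:
    """Compare total monthly cost of the two options at a given volume."""
    api_cost = tokens_per_month / 1_000_000 * api_usd_per_million
    self_cost = fixed_monthly_usd + tokens_per_month / 1_000_000 * self_host_usd_per_million
    return "self-host" if self_cost < api_cost else "managed API"

# Placeholder inputs, NOT real quotes: substitute your own numbers.
FIXED = 1_200.0   # hypothetical GPU rental plus engineering overhead per month
API_PRICE = 0.80  # hypothetical blended input/output price per million tokens

print(f"break-even: {breakeven_tokens_per_month(FIXED, API_PRICE):,.0f} tokens/month")
for volume in (10_000_000, 500_000_000, 2_000_000_000):
    print(f"{volume:>13,} tokens/month -> {cheaper_option(volume, FIXED, API_PRICE)}")
```

Engineering time is the input most often underestimated. Folding it into the fixed cost pushes the break-even volume up, which is why low-volume teams almost always land on a managed API.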

The practical setup: Scout runs on a single H100 GPU (INT4 quantisation gets you approximately 130K context on one GPU, which is sufficient for most production tasks). Maverick in FP8 precision requires 8× H100 GPUs minimum — a significant infrastructure investment that only makes sense at scale.
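The GPU counts above can be sanity-checked with a weights-only memory estimate: parameter count times bits per parameter. The sketch below assumes the 80 GB H100 variant and deliberately ignores KV cache, activations, and runtime overhead, so it gives a lower bound rather than a deployment plan.

```python
import math

H100_MEMORY_GB = 80  # assumes the 80 GB H100 variant; other variants differ

def weight_memory_gb(total_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9

def min_gpus_for_weights(weights_gb: float, gpu_memory_gb: float = H100_MEMORY_GB) -> int:
    """Fewest GPUs whose combined memory can hold the weights (nothing else)."""
    return math.ceil(weights_gb / gpu_memory_gb)

# Parameter counts and precisions as quoted in this article.
scout_int4 = weight_memory_gb(109e9, bits_per_param=4)
maverick_fp8 = weight_memory_gb(400e9, bits_per_param=8)

print(f"Scout INT4 weights: {scout_int4:.1f} GB "
      f"-> at least {min_gpus_for_weights(scout_int4)} GPU")
print(f"Maverick FP8 weights: {maverick_fp8:.1f} GB "
      f"-> at least {min_gpus_for_weights(maverick_fp8)} GPUs")
```

Scout’s INT4 weights come to about 54.5 GB, which leaves limited room on one 80 GB card for the KV cache and is consistent with the reduced context quoted above. Maverick’s FP8 weights need at least five such cards on their own; the eight-GPU figure presumably leaves headroom for cache and activations, though this estimate cannot confirm that.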

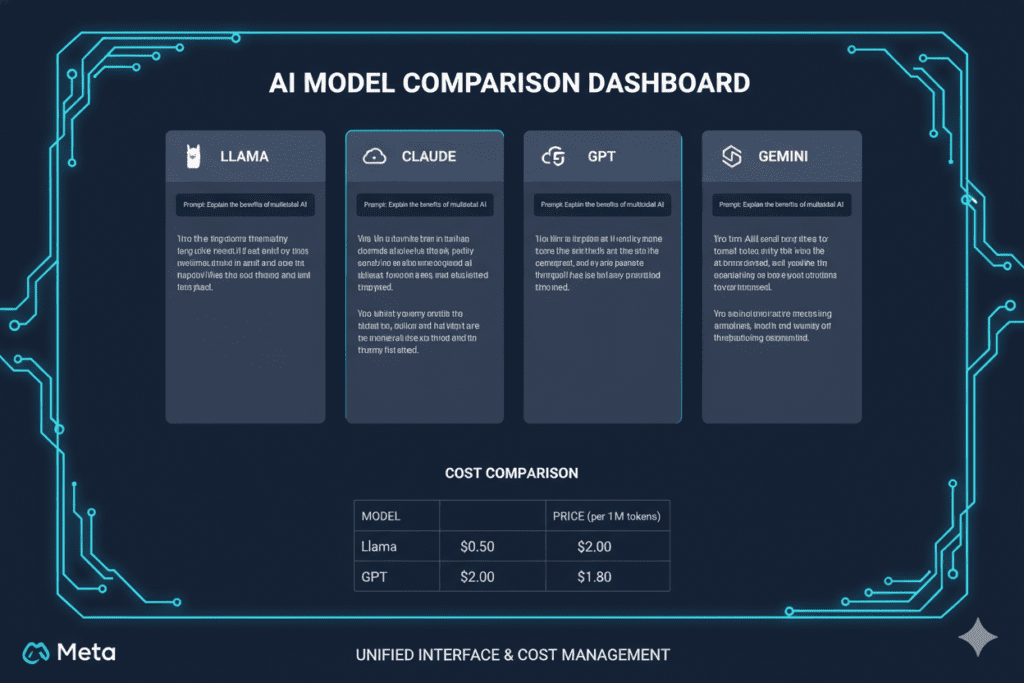

How Aizolo Helps You Navigate the Meta AI Models API Differences

This is exactly the kind of complexity that Aizolo was built to simplify.

Instead of managing separate API accounts, separate billing, and separate interfaces for every model you are evaluating, Aizolo gives you a unified platform where you can:

- Compare Meta’s Llama models alongside GPT, Claude, Gemini, and Grok in the same interface, side by side

- Run the same prompt across multiple models and see which one gives you the best response for your specific task

- Use your own API keys (including Meta’s Llama API, when available) with encrypted storage — no extra cost per token beyond what the provider charges

- Save and reuse prompts across your model comparisons with the Smart Prompt Manager

- Access all premium AI models through a single subscription at a fraction of what you would pay running them individually

For a developer doing their own due diligence, the ability to fire the same prompt at Scout, Maverick, Claude Sonnet, and GPT-5 simultaneously, and see where each one shines and stumbles, is genuinely invaluable. It turns what used to be a week of experimental API calls into a ten-minute side-by-side evaluation.

Explore more expert AI model comparisons and guides on Aizolo’s blog — covering everything from open-source model selection to SaaS cost optimisation.

A Practical Decision Framework for Meta AI Models API Comparison 2026

Before you finalise your model choice, run through this framework:

Step 1: Define your primary workload type

- Stateful multi-step coding copilot? → Claude Sonnet or GPT-5 still hold an edge

- Long-document processing, RAG, summarisation? → Scout is your first call

- Reasoning-intensive analysis, content generation, enterprise tasks? → Maverick

- Research or large-scale knowledge distillation? → Wait for Behemoth or use frontier proprietary models

Step 2: Estimate your monthly token volume

- Under 100M tokens/month → Managed API is almost certainly cheaper

- Over 500M tokens/month → Start modelling self-hosting costs

Step 3: Check your geographic constraints

- EU users + vision features needed → Do not use Llama 4; choose an alternative

- Global deployment, text-only → Full Llama 4 family available

Step 4: Compare live on a real task

- Do not rely on benchmarks alone. Benchmarks measure standardised tasks; your production prompts are not standardised. Test your actual prompt, on your actual task, across multiple models.
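The four steps above can be encoded as a small function, which is handy when the same decision recurs across projects. This is a sketch of the framework as written, not a substitute for testing. The workload keys are hypothetical labels, and the handling of volumes between 100M and 500M tokens is an assumption, since the framework leaves that band open.

```python
from dataclasses import dataclass

# Step 1 mapping, taken from the framework above. Keys are hypothetical labels.
WORKLOAD_MODELS = {
    "stateful_coding": "Claude Sonnet or GPT-5",
    "long_document": "Llama 4 Scout",
    "reasoning": "Llama 4 Maverick",
    "research": "Behemoth (when available) or a frontier proprietary model",
}

@dataclass
class Recommendation:
    model: str
    hosting: str
    test_live: bool = True  # Step 4 always applies

def recommend(workload: str, monthly_tokens: int,
              eu_users: bool = False, needs_vision: bool = False) -> Recommendation:
    # Step 1: workload type picks the starting model.
    model = WORKLOAD_MODELS[workload]

    # Step 3: EU users plus vision features rules out Llama 4.
    if eu_users and needs_vision and "Llama 4" in model:
        model = "non-Llama-4 alternative"

    # Step 2: token volume picks the hosting approach.
    if monthly_tokens < 100_000_000:
        hosting = "managed API"
    elif monthly_tokens > 500_000_000:
        hosting = "model self-hosting costs"
    else:
        # Assumption: the framework does not cover this band explicitly.
        hosting = "managed API, revisit as volume grows"

    return Recommendation(model=model, hosting=hosting)

print(recommend("long_document", 50_000_000))
print(recommend("reasoning", 800_000_000, eu_users=True, needs_vision=True))
```

The `test_live` flag is always true on purpose: whatever the function returns is a starting hypothesis to be checked against your own prompts.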

Aizolo’s comparison platform makes Step 4 effortless — one interface, all models, your prompts.

The Bigger Picture: Why 2026 Is a Turning Point for Meta AI Models API Differences

This comparison is happening against a backdrop that is genuinely historic.

Open-source models have closed the gap with proprietary frontier models to single-digit percentage points on most benchmarks. The cost difference between running a Llama 4 Maverick workload versus a GPT-4o workload can be an order of magnitude for high-volume use cases. And the compliance and data-privacy advantages of self-hosted open-weight models are increasingly attractive to enterprise buyers.

At the same time, Meta’s launch of Muse Spark suggests that even the most committed open-source lab in the frontier AI space is hedging toward closed models. The Llama 5 roadmap is uncertain. Developers who build deep dependencies on Meta’s open-weight strategy should think carefully about provider-agnostic architectures that can swap models without major refactoring.

The smartest builders in 2026 are not betting on a single model. They are building tiered, routing-based architectures where the right model gets the right task — and they are using comparison platforms to stay updated as the landscape shifts monthly.
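A provider-agnostic design of the kind described above usually comes down to coding against a small interface instead of a specific vendor SDK. The sketch below shows the shape of that idea. `ChatModel`, `EchoModel`, and `ModelRegistry` are hypothetical names, and `EchoModel` is a stand-in that makes no network calls; a real adapter would wrap whichever provider client you use.

```python
from typing import Dict, Protocol

class ChatModel(Protocol):
    """Minimal interface every model adapter must satisfy (hypothetical)."""
    name: str

    def complete(self, prompt: str) -> str: ...

class EchoModel:
    """Stand-in for a real provider client; it makes no network calls."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

class ModelRegistry:
    """Maps a task role to whichever model currently serves it."""

    def __init__(self) -> None:
        self._models: Dict[str, ChatModel] = {}

    def register(self, role: str, model: ChatModel) -> None:
        self._models[role] = model

    def complete(self, role: str, prompt: str) -> str:
        return self._models[role].complete(prompt)

registry = ModelRegistry()
registry.register("summarise", EchoModel("llama-4-scout"))
print(registry.complete("summarise", "Summarise this contract."))

# Swapping the model is a one-line change; calling code is untouched.
registry.register("summarise", EchoModel("other-model"))
print(registry.complete("summarise", "Summarise this contract."))
```

Application code only ever names a role such as "summarise", so replacing the model behind that role does not require refactoring the callers.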

Conclusion: Your Meta AI Models API Differences Comparison 2026 Starts Here

The landscape of Meta’s AI models and APIs is the most interesting it has ever been.

Llama 4 Scout gives you a 10-million-token context window at a fraction of the cost of proprietary alternatives — ideal for long-document processing, RAG pipelines, and high-volume classification.

Llama 4 Maverick gives you frontier-class reasoning and native multimodal capability with a 128-expert MoE architecture, and Meta’s own apps run on it.

Llama 4 Behemoth is coming for the most demanding research and enterprise use cases.

And Muse Spark is signalling that Meta’s open-source future is less certain than it was two years ago.

For Rohan, the developer whose $3,000 API bill opened this story: his pipeline was a perfect Scout use case. Long documents, high volume, summarisation output. He would have cut his costs by roughly 90%, in line with the under-$300 estimate above, and kept his project profitable, if he had done this comparison before writing a single line of code.

Do not make Rohan’s mistake.

Start building smarter with Aizolo — compare Llama 4 Scout, Maverick, Claude, GPT-5, Gemini, and more in a single interface, with one affordable subscription that costs less than a single proprietary model plan.

Read more expert guides on Aizolo to stay ahead of the AI model landscape as it evolves — because in 2026, the model you choose is a business decision, not just a technical one.
