The Bill That Made Priya Rethink Everything

Priya is a SaaS founder based in Hyderabad. She’d been building an AI-powered legal document analyzer for six months — the kind of product that actually needed deep reasoning, not just surface-level text generation.

When she finally integrated GPT-5.1 Thinking into her backend and let it run for a week, she was thrilled with the quality. The model caught nuances in contracts that simpler models completely missed.

Then the OpenAI invoice arrived.

$680 for seven days of usage. At that burn rate, her AI infrastructure costs alone would eat through her runway in under three months — before she’d even acquired her first paying customer.

Determined to find a solution, she started looking for the cheapest way to access GPT 5.1 thinking model API, exploring affordable subscription plans, free trial options, and optimized token usage strategies.

By carefully comparing different providers, she discovered ways to reduce costs without compromising the advanced reasoning capabilities her product required. This approach gave her the breathing room to continue development while staying financially sustainable and preparing for her first paying users.

Sound familiar?

If you’ve ever tried to build something serious with the GPT-5.1 Thinking model API, you’ve probably had a similar gut-drop moment.

The model is extraordinary. The costs, if left unmanaged, can be brutal. That’s why so many developers end up researching the cheapest way to access the GPT-5.1 Thinking model API.

And that’s exactly what this guide is about: the cheapest way to access the GPT-5.1 thinking model API without sacrificing the quality that makes it worth using in the first place.

What Actually Makes GPT-5.1 Thinking Different (And Expensive)

Before we talk cost strategy, let’s be clear about what you’re dealing with.

GPT-5.1 is OpenAI’s flagship model, released in late 2025, and it comes in two primary modes: Instant (fast, conversational, adaptive reasoning on simpler tasks) and Thinking (deep, extended reasoning for complex problem-solving).

When you’re accessing GPT-5.1 Thinking via the API, you’re using the model with its reasoning effort cranked up, and that’s where the pricing gets complicated.

Here’s the core issue: the Thinking mode doesn’t just generate a response. It generates reasoning tokens first — an internal chain-of-thought that the model works through before producing your final output. Those reasoning tokens are billed at the output token rate, which on the official OpenAI API sits at $10 per million output tokens, with input tokens at $1.25 per million.

A single complex query in high-reasoning mode can generate thousands of invisible reasoning tokens before a single word of your actual answer appears.

For a legal document analysis, a multi-step coding task, or a complex agentic workflow, this can mean paying for 5,000–15,000 reasoning tokens per request — tokens the user never sees, but you absolutely pay for.

That’s the “Reasoning Token Tax,” and it catches most developers completely off guard.
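Here is a back-of-the-envelope sketch that puts that range in dollars. It assumes the $10 per million output-token rate quoted above, so check OpenAI’s current pricing page before relying on it. It counts only the hidden reasoning tokens, not the input or the visible answer.

```python
# Assumed rate from this article: USD per 1M output tokens.
# Hidden reasoning tokens are billed at this same output rate.
OUTPUT_RATE = 10.00

def reasoning_tax(reasoning_tokens: int, output_rate: float = OUTPUT_RATE) -> float:
    """Dollar cost of the hidden reasoning tokens in a single request."""
    return reasoning_tokens * output_rate / 1_000_000

for tokens in (5_000, 15_000):
    per_call = reasoning_tax(tokens)
    print(f"{tokens:,} reasoning tokens: ${per_call:.2f} per call, "
          f"${per_call * 1_000:,.0f} per 1,000 calls")
```

That works out to $0.05 to $0.15 per request, or $50 to $150 per thousand requests, before you pay for the input or the answer itself.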

Why Most People Overpay for GPT-5.1 Thinking API Access

There are a few common traps that inflate costs unnecessarily:

Defaulting to maximum reasoning effort for every task. The GPT-5.1 API supports configurable reasoning levels: none, low, medium, and high. Most developers, excited about the model’s capabilities, set it to high for everything — even tasks that don’t need it. Setting reasoning effort to low for simpler subtasks can cut reasoning token generation by 60–80%.

Ignoring prompt caching. OpenAI offers a 90% discount on cached input tokens — bringing the price down from $1.25 to just $0.125 per million tokens. If your system prompts are long and consistent (which they often are for document analysis or code review tools), caching can dramatically reduce costs.

But this only works if you keep those prompts standardized. Caching matches on an exact prefix, so changing even a small part of your system prompt invalidates the cache for everything that follows the change.
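Because prompt caching matches on an exact prefix, small edits near the top of a prompt are expensive. The sketch below models that with plain lists of token IDs. The IDs are made up, and the 1,024-token minimum comes from OpenAI’s caching documentation. Real cache hits also depend on timing and server routing, so treat the result as an optimistic upper bound rather than a prediction.

```python
from typing import Sequence

MIN_CACHEABLE = 1_024  # prompts shorter than this are not cached

def shared_prefix_len(a: Sequence[int], b: Sequence[int]) -> int:
    """Count the leading tokens two prompts have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

def cacheable_tokens(prev: Sequence[int], curr: Sequence[int]) -> int:
    """Optimistic estimate of how many tokens of `curr` could hit the cache after `prev`."""
    n = shared_prefix_len(prev, curr)
    return n if n >= MIN_CACHEABLE else 0

# Made-up token IDs standing in for a 2,000-token system prompt and a 2,000-token document.
system = list(range(2_000))
document = list(range(5_000, 7_000))

first = system + document + [1, 2, 3]               # user question A
second = system + document + [4, 5, 6]              # user question B, identical prefix
edited = [999] + system[1:] + document + [4, 5, 6]  # first system-prompt token changed

print(cacheable_tokens(first, second))  # 4000
print(cacheable_tokens(first, edited))  # 0
```

Varying only the final question preserves the full 4,000-token prefix. Changing a single token at the start of the system prompt leaves nothing reusable, even though 99.9% of the prompt is unchanged.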

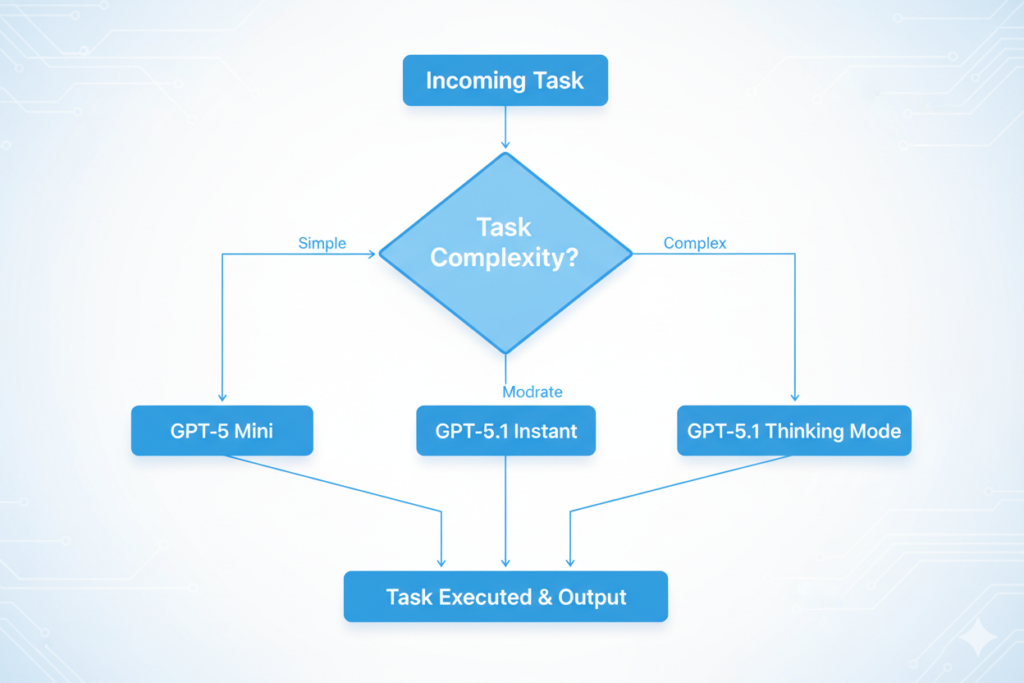

Running GPT-5.1 Thinking for tasks that don’t need it. Complex reasoning is powerful, but not every step in your pipeline needs it. A developer who routes every request — including simple data extraction and formatting — through the Thinking model is essentially using a sledgehammer to crack a walnut.

Building a tiered routing layer that directs only genuinely complex tasks to Thinking mode, while simpler tasks go to GPT-5.1 Instant or GPT-5 mini, can cut costs by as much as 70% with little or no quality loss on many workloads. Deep reasoning, and its price, stays reserved for the tasks that actually need it.
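Here is a minimal sketch of such a routing layer. It assumes you already tag each request with a task kind, and the kind names are hypothetical. The model IDs (gpt-5.1 for Thinking, gpt-5.1-chat-latest for Instant, and gpt-5-mini) are assumptions to verify against the model list in your own OpenAI account.

```python
from dataclasses import dataclass

# Assumed model IDs; confirm them in your OpenAI account before use.
THINKING_MODEL = "gpt-5.1"             # GPT-5.1 with reasoning enabled
INSTANT_MODEL = "gpt-5.1-chat-latest"  # GPT-5.1 Instant
SMALL_MODEL = "gpt-5-mini"

# Hypothetical task kinds for a document-analysis pipeline.
SIMPLE_KINDS = {"extraction", "formatting", "classification"}
CONVERSATIONAL_KINDS = {"chat", "summary"}

@dataclass
class Task:
    kind: str
    prompt: str

def choose_model(task: Task) -> str:
    """Send only genuinely complex work to the Thinking model."""
    if task.kind in SIMPLE_KINDS:
        return SMALL_MODEL
    if task.kind in CONVERSATIONAL_KINDS:
        return INSTANT_MODEL
    # Anything unrecognised (e.g. "contract_review") gets deep reasoning.
    return THINKING_MODEL

print(choose_model(Task("extraction", "List the parties named in this NDA.")))
print(choose_model(Task("contract_review", "Flag unusual indemnity terms.")))
```

Unrecognized task kinds fall through to the Thinking model, so the router errs on the side of quality. If cost matters more than accuracy for your workload, flip that default.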

Ignoring the Batch API. OpenAI’s Batch API offers up to 50% cost reduction for asynchronous workloads. If you’re processing documents, running analysis jobs, or doing anything that doesn’t need real-time responses, batching is one of the most overlooked cost-reduction tools available.

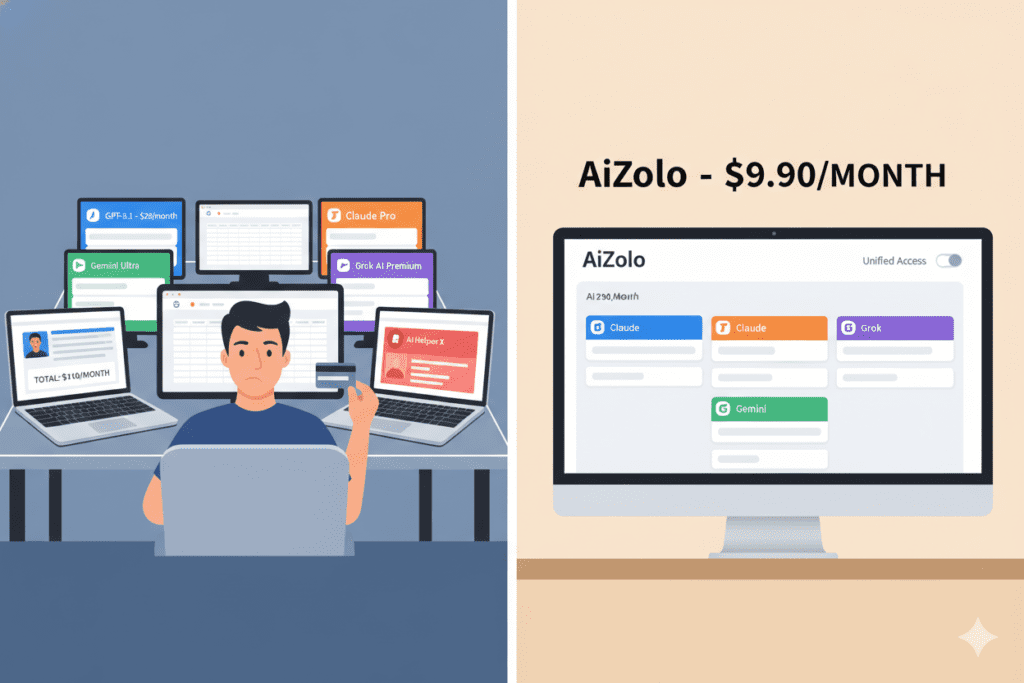

Paying for multiple separate subscriptions. Many developers and founders end up paying for ChatGPT Plus, a direct API account, and sometimes even separate subscriptions to Claude or Gemini — all to compare outputs and pick the best one manually. That’s not a workflow; that’s financial self-harm.

The Smartest Cost-Reduction Strategies for GPT-5.1 Thinking API Access

1. Build a Reasoning Effort Router

This is the single highest-impact optimization you can make. Instead of sending every API request with reasoning_effort: "high", build a lightweight classifier that determines appropriate reasoning effort before routing the request.

A simple version works like this: short factual queries get none, single-step tasks get low, multi-step analysis gets medium, and only the most complex tasks — deep research, code architecture reviews, complex legal or financial analysis — get high. This alone can reduce your reasoning token spend by half or more.
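Here is a minimal sketch of that router. The keyword classifier is a deliberately crude, hypothetical stand-in for whatever lightweight classifier you train or prompt. The parts that carry over are the mapping from task category to reasoning_effort and the Chat Completions request body it produces.

```python
from typing import Any, Dict

# Category -> reasoning effort, mirroring the tiers described above.
EFFORT_BY_CATEGORY = {
    "factual": "none",
    "single_step": "low",
    "multi_step": "medium",
    "complex": "high",
}

def classify(query: str) -> str:
    """Toy keyword heuristic (hypothetical); replace with a classifier tuned on your traffic."""
    q = query.lower()
    if any(w in q for w in ("architecture", "contract", "legal", "financial")):
        return "complex"
    if any(w in q for w in ("compare", "analyze", "analyse", "step by step")):
        return "multi_step"
    if len(q.split()) > 12:
        return "single_step"
    return "factual"

def build_request(query: str, model: str = "gpt-5.1") -> Dict[str, Any]:
    """Chat Completions request body with reasoning effort chosen per query."""
    return {
        "model": model,
        "reasoning_effort": EFFORT_BY_CATEGORY[classify(query)],
        "messages": [{"role": "user", "content": query}],
    }

print(build_request("Review this contract for indemnity risks")["reasoning_effort"])
```

In practice, log the chosen category alongside each response’s reasoning-token count. Then adjust the mapping when a category turns out to need more or less effort than you assumed.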

2. Optimize for Prompt Cache Hits

Structure your API calls so that the largest portion of your input (the system prompt, document context, background information) comes first and remains identical across requests.

Doing this improves response consistency, but the bigger payoff is cost.

OpenAI’s prompt caching is automatic, but it only activates when inputs share a common prefix of at least 1,024 tokens.

For a document analysis tool, this means: keep your system prompt constant, pass the document as a consistent second block, and only vary the final user query. A developer who implements this correctly can see 60–80% of input tokens served from cache. Those cached tokens cost 90% less, which works out to roughly a 54–72% cut in total input cost.
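The arithmetic behind these cache numbers is easy to check. This sketch assumes the $1.25 per million input rate and the 90% cached-token discount quoted earlier. It computes the blended input price for a given share of cached tokens.

```python
def effective_input_rate(cache_hit: float, base: float = 1.25, discount: float = 0.90) -> float:
    """Blended USD per 1M input tokens when a `cache_hit` fraction of tokens is cached."""
    if not 0.0 <= cache_hit <= 1.0:
        raise ValueError("cache_hit must be between 0 and 1")
    uncached = (1 - cache_hit) * base               # billed at the full input rate
    cached = cache_hit * base * (1 - discount)      # billed at the discounted rate
    return uncached + cached

for hit in (0.6, 0.8):
    rate = effective_input_rate(hit)
    print(f"{hit:.0%} cached: ${rate:.3f} per 1M input tokens "
          f"({1 - rate / 1.25:.0%} cheaper)")
```

At a 60% hit rate, the blended price is about $0.575 per million input tokens, 54% below list. At 80%, it drops to about $0.35, 72% below list.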

3. Use the Batch API for Non-Real-Time Workloads

If you’re building anything involving bulk processing — document review, content analysis, data extraction — you almost certainly don’t need synchronous API calls for every item.

The OpenAI Batch API processes requests asynchronously within 24 hours and delivers 50% off standard pricing. For a startup processing 10,000 documents a month, this is not a small saving.
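Preparing a batch job mostly means writing one JSON request per line. This sketch builds that JSONL content for a set of documents, following the input format in OpenAI’s Batch API guide (custom_id, method, url, body). The document IDs, system prompt, and reasoning-effort default are placeholders.

```python
import json
from typing import Mapping

def build_batch_jsonl(docs: Mapping[str, str], system_prompt: str,
                      model: str = "gpt-5.1", effort: str = "medium") -> str:
    """Return Batch API input as JSONL: one Chat Completions request per document."""
    lines = []
    for doc_id, text in docs.items():
        request = {
            "custom_id": doc_id,          # used to match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": model,
                "reasoning_effort": effort,
                "messages": [
                    {"role": "system", "content": system_prompt},
                    {"role": "user", "content": text},
                ],
            },
        }
        lines.append(json.dumps(request))
    return "\n".join(lines) + "\n"

jsonl = build_batch_jsonl(
    {"contract-001": "First contract text", "contract-002": "Second contract text"},
    "You are a contract analyst.",
)
print(jsonl)
```

Next, write the string to a .jsonl file and upload it with purpose set to "batch". Then create the batch against the /v1/chat/completions endpoint with a 24h completion window (client.files.create and client.batches.create in the official Python SDK). Finally, join the results back to your documents by custom_id.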

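4. Set Output Token Limits Aggressively

The model’s default behavior can generate verbose, lengthy responses even when you need concise outputs. Always cap output length based on what you actually need. In the Chat Completions API, use max_completion_tokens, because reasoning models do not accept the older max_tokens parameter. In the Responses API, use max_output_tokens.

If you’re extracting structured data, you don’t need 4,000 tokens of output. Setting tight output limits prevents unnecessary generation and directly reduces your bill. One caveat: for reasoning models, the cap counts reasoning tokens as well as the visible answer. A limit that is too tight can spend the whole budget on thinking and return an empty or truncated response.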
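A tight cap has one sharp edge with reasoning models: hidden reasoning and the visible answer share the same budget. This sketch inspects a Chat Completions response, passed as a plain dict (for example, from response.model_dump() in the official Python SDK). It reports whether the cap cut the output off and how much of the budget went to reasoning. The sample response is fabricated for illustration.

```python
from typing import Any, Dict, Mapping

def output_budget_report(response: Mapping[str, Any]) -> Dict[str, Any]:
    """Summarise how a Chat Completions response used its output token budget."""
    choice = response["choices"][0]
    usage = response["usage"]
    details = usage.get("completion_tokens_details") or {}
    reasoning = details.get("reasoning_tokens", 0)
    return {
        # "length" means generation stopped at max_completion_tokens.
        "truncated": choice["finish_reason"] == "length",
        "reasoning_tokens": reasoning,
        # completion_tokens includes reasoning tokens, so subtract them.
        "visible_tokens": usage["completion_tokens"] - reasoning,
        "empty_answer": not (choice["message"].get("content") or "").strip(),
    }

# Fabricated example: the whole 500-token cap went to reasoning.
sample = {
    "choices": [{"finish_reason": "length",
                 "message": {"role": "assistant", "content": ""}}],
    "usage": {"prompt_tokens": 1_200, "completion_tokens": 500,
              "completion_tokens_details": {"reasoning_tokens": 500}},
}
print(output_budget_report(sample))
```

If truncated and empty_answer are both true, raise the cap or lower the reasoning effort for that task. Don't retry with identical settings.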

5. Choose the Right Access Platform

This is where the biggest savings often hide — and it’s the strategy most developers overlook entirely.

The Platform Advantage: Why Direct API Isn’t Always the Cheapest Option

Here’s a truth that surprises a lot of developers: the cheapest way to access the GPT-5.1 thinking model API isn’t always going directly to OpenAI’s platform.

OpenRouter, for example, provides access to GPT-5.1 at competitive rates while adding features like automatic fallback to alternative models, multi-provider routing, and support for the reasoning_details array that lets you inspect the model’s thinking process in your application — useful for debugging and for surfacing reasoning transparency to end users.
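A request through OpenRouter’s OpenAI-compatible endpoint might look like the sketch below. Treat the details as assumptions to check against OpenRouter’s current documentation:

- the openai/gpt-5.1 and openai/gpt-5-mini model slugs
- the models fallback list
- the unified reasoning.effort field
- the text and summary fields read from reasoning_details

Only the payload is built here, and no request is sent.

```python
from typing import Any, Dict, List

# Assumed endpoint; POST the payload here with an "Authorization: Bearer <key>" header.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

def openrouter_payload(prompt: str, effort: str = "medium") -> Dict[str, Any]:
    """Request body: GPT-5.1 first, with a cheaper model as an assumed fallback."""
    return {
        "models": ["openai/gpt-5.1", "openai/gpt-5-mini"],  # tried in order on failure
        "reasoning": {"effort": effort},
        "messages": [{"role": "user", "content": prompt}],
    }

def reasoning_text(message: Dict[str, Any]) -> List[str]:
    """Collect readable reasoning from a response message's reasoning_details array.

    Entries without readable text (e.g. encrypted ones) are skipped.
    """
    out = []
    for item in message.get("reasoning_details") or []:
        text = item.get("text") or item.get("summary")
        if text:
            out.append(text)
    return out

print(openrouter_payload("Summarise clause 4 of this lease.", effort="high"))
```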

But for most developers, marketers, founders, and students who are using AI daily — across multiple models, for multiple purposes — the most cost-effective solution is a unified platform that collapses all of your AI access into a single, affordable subscription.

Many users pair this approach with research into the cheapest way to access GPT 5.1 thinking model API, ensuring they can tap into deep reasoning capabilities without juggling multiple accounts or overspending on high-tier API calls.

This is exactly what AiZolo is built for.

How AiZolo Solves the GPT-5.1 Thinking Cost Problem

AiZolo is an all-in-one AI platform trusted by over 5,000 users that gives you access to all the major premium AI models — including GPT-5.1 — for a single subscription of $9.90/month.

For those looking to maximize value, AiZolo provides the cheapest way to access GPT 5.1 thinking model API, letting developers, startups, and students leverage deep reasoning capabilities without paying the high costs typical of direct API usage.

Compare that to the $110+ per month most people spend juggling separate ChatGPT Plus, Claude Pro, Gemini Advanced, and Grok subscriptions.

Consolidating those into a single $9.90 subscription saves roughly $100 a month while keeping access to advanced reasoning features.

But the cost savings aren’t even the most interesting part.

For developers building with GPT-5.1 Thinking, AiZolo’s Custom API Keys feature is a game-changer. You can bring your own OpenAI API key to the platform, use all of AiZolo’s interface and comparison features, and route your calls precisely as needed — all through AiZolo’s unified dashboard.

Your keys are encrypted and stored securely. You get the clean interface and workflow without the platform overhead.

This means a developer like Priya can use AiZolo to:

- Compare GPT-5.1 Thinking responses against Claude Sonnet or Gemini 2.0 Pro side by side, before deciding which model to deploy in production

- Use the Prompt Manager to keep system prompts consistent and cache-optimized

- Access the model that best fits each task without paying five separate subscriptions

- Scale through her own API key when she’s ready, without leaving the platform

For Priya specifically, the combined approach (AiZolo Pro for daily use at $9.90/month plus a carefully optimized direct API integration with caching and reasoning tier routing) brought her AI costs down from $680 a week to under $90 a month. Same quality. Fraction of the price.

Start building smarter with AiZolo

Real-World Use Cases: Who Benefits Most

Founders and SaaS Builders

If you’re building a product powered by GPT-5.1 Thinking — legal tech, financial analysis, AI tutoring, code review tools — your margins depend on API cost optimization.

That’s why developers and startups actively search for the cheapest way to access GPT 5.1 thinking model API, combining smart usage strategies with affordable subscription options to keep costs low while still leveraging the model’s powerful reasoning capabilities.

Implement reasoning tier routing from day one, use the Batch API wherever possible, and use AiZolo to prototype and compare model outputs before committing to production infrastructure. Explore more insights on AiZolo to find guides specifically tailored for SaaS builders.

Developers and Engineers

The hidden cost of reasoning tokens is the single biggest billing surprise in the GPT-5.1 ecosystem. Build logging into your application to track reasoning token usage per request type — you’ll quickly identify which task categories are generating disproportionate reasoning overhead.
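Here is a minimal sketch of that logging. It assumes Chat Completions usage objects: completion_tokens, which includes reasoning, plus completion_tokens_details.reasoning_tokens. The request-type labels are hypothetical. In production you would write these rows to your metrics store rather than keep them in memory.

```python
from collections import defaultdict
from typing import Any, Dict, List, Mapping, Tuple

class ReasoningLedger:
    """Accumulates reasoning vs. visible output tokens per request type."""

    def __init__(self) -> None:
        self.totals: Dict[str, Dict[str, int]] = defaultdict(
            lambda: {"calls": 0, "reasoning": 0, "visible": 0})

    def record(self, request_type: str, usage: Mapping[str, Any]) -> None:
        details = usage.get("completion_tokens_details") or {}
        reasoning = details.get("reasoning_tokens", 0)
        row = self.totals[request_type]
        row["calls"] += 1
        row["reasoning"] += reasoning
        row["visible"] += usage["completion_tokens"] - reasoning

    def worst_offenders(self) -> List[Tuple[str, float]]:
        """Request types sorted by average reasoning tokens per call, highest first."""
        return sorted(
            ((t, r["reasoning"] / r["calls"]) for t, r in self.totals.items()),
            key=lambda item: item[1],
            reverse=True,
        )

ledger = ReasoningLedger()
ledger.record("clause_review", {"completion_tokens": 9_000,
                                "completion_tokens_details": {"reasoning_tokens": 8_000}})
ledger.record("date_extraction", {"completion_tokens": 600,
                                  "completion_tokens_details": {"reasoning_tokens": 400}})
print(ledger.worst_offenders())
```

A request type at the top of this list that only needs a short, simple answer is a prime candidate for a lower reasoning effort or a cheaper model.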

Then route accordingly. AiZolo’s multi-model interface is also excellent for rapid A/B testing of prompts across GPT-5.1 Thinking vs. Claude’s extended thinking mode. For teams looking to manage costs while experimenting, AiZolo provides the cheapest way to access GPT 5.1 thinking model API, making it easy to test, iterate, and optimize without overspending on high-reasoning API calls.

Marketers and Content Teams

GPT-5.1 Thinking might feel like overkill for content work — and for most tasks, it is. But for genuinely complex tasks like competitive analysis, multi-layered campaign strategy, or synthesizing large research documents into actionable briefs, the Thinking model delivers results that simpler models miss.

The trick is using it selectively, not reflexively. With AiZolo’s side-by-side comparison, marketers can see in real time when Thinking mode actually outperforms Instant for their specific use case.

By combining this approach with the cheapest way to access GPT 5.1 thinking model API, teams can harness deep reasoning only when needed, optimizing costs while still getting maximum value from the model.

Students and Researchers

Students often have access to educational API credits or free-tier access, but those can run out fast when using reasoning-heavy models.

That’s why many students and learners look for the cheapest way to access GPT 5.1 thinking model API, combining affordable subscriptions with optimized usage to continue building projects and experimenting with deep reasoning AI without exhausting their credits.

The cheapest way to access GPT-5.1 thinking model API for academic use is to combine free-tier API access with judicious use of AiZolo’s unified platform. Use the model for the tasks that genuinely benefit from deep reasoning (literature synthesis, complex problem sets, research paper analysis), and save cheaper models for simpler tasks. Read more expert guides on AiZolo to find student-specific AI optimization tips.

Freelancers

If you’re delivering AI-powered services to clients — writing, coding, data analysis, research — your competitive advantage is getting the best output at the lowest operational cost.

That’s why many service providers explore the cheapest way to access GPT 5.1 thinking model API, combining cost-efficient subscriptions with optimized usage strategies to deliver top-quality results while keeping expenses under control.

Using GPT-5.1 Thinking through AiZolo means you can offer premium-quality deliverables without a premium cost structure. One subscription, all models, none of the tab-switching chaos.

For teams and freelancers looking to save, AiZolo also provides the cheapest way to access GPT 5.1 thinking model API, making it easy to leverage advanced reasoning capabilities without inflating operational expenses.

The Hidden Advantage: Model Comparison Before You Commit

One of the underappreciated benefits of using AiZolo to access GPT-5.1 Thinking is the ability to run genuine, side-by-side comparisons before you decide which model to put in your production pipeline.

By combining this with the cheapest way to access GPT 5.1 thinking model API, teams can experiment, optimize, and select the best-performing model without incurring unnecessary high costs.

Many developers spend thousands of dollars testing models in production because they don’t have a good pre-production comparison environment.

By exploring the cheapest way to access GPT 5.1 thinking model API, they can run comprehensive tests, compare outputs, and optimize performance without incurring excessive costs.

AiZolo’s simultaneous multi-model comparison lets you send the same complex prompt to GPT-5.1 Thinking, Claude’s reasoning mode, and Gemini simultaneously — and evaluate which delivers the best result for your specific use case.

That pre-selection process alone can save significant API spend by preventing you from deploying the wrong model at scale.

Learn from real-world experience at AiZolo

A Quick Cost Comparison: What You’re Actually Spending

Let’s run the numbers clearly.

Direct OpenAI API (unoptimized):

- Input: $1.25/million tokens

- Output (including reasoning tokens): $10/million tokens

- A complex request with 5,000 input tokens, 8,000 reasoning tokens, and 2,000 output tokens = roughly $0.106 per call ($0.006 for input plus $0.10 for reasoning and output)

- At 500 calls/day: ~$53/day → roughly $1,600/month (30 days)
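The unoptimized figures above can be reproduced with a few lines. This assumes the $1.25 input and $10 output rates listed, with reasoning tokens billed at the output rate, and a 30-day month.

```python
# Rates as quoted in this article, USD per 1M tokens; re-check OpenAI's pricing page.
RATES = {"input": 1.25, "output": 10.00}

def call_cost(input_tokens: int, reasoning_tokens: int, output_tokens: int) -> float:
    """Unoptimized cost of one call; reasoning tokens bill at the output rate."""
    return (input_tokens * RATES["input"]
            + (reasoning_tokens + output_tokens) * RATES["output"]) / 1_000_000

per_call = call_cost(5_000, 8_000, 2_000)
per_day = per_call * 500
print(f"per call ${per_call:.3f}, per day ${per_day:.2f}, per 30 days ${per_day * 30:,.0f}")
```

That is about $0.106 per call, about $53 a day, and just under $1,600 over 30 days. Swap in your own token counts to see which component dominates your bill.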

Direct OpenAI API (optimized with caching + batching + tier routing):

- Effective rate drops significantly with 70% cache hit rate, batch processing, and intelligent routing

- Same 500 calls/day: ~$180–300/month depending on workload distribution

AiZolo Pro + optimized own API key:

- $9.90/month for platform access + optimized API costs

- Full control, full optimization, plus side-by-side model comparison for pre-production decisions

- Most developers see 70–85% cost reduction vs. unoptimized direct API

The message is clear: the cheapest way to access GPT 5.1 thinking model API is never just about where you buy access — it’s about how you use it, and what tools you use to manage it.

By combining smart usage strategies, cost-efficient platforms, and proper workflow design, developers can maximize the value of GPT-5.1 Thinking without overspending.

What to Do Right Now

Here’s your action plan, simplified:

Step 1: Sign up for AiZolo’s free tier at chat.aizolo.com and start comparing GPT-5.1 Thinking against other models side by side for your actual use case.

Step 2: Add your own OpenAI API key through AiZolo’s encrypted key manager to access your own API billing while using the platform’s interface.

Step 3: Implement reasoning tier routing in your application code. Start with low as the default and only escalate to high for tasks you’ve confirmed genuinely need deep reasoning.

Step 4: Audit your system prompts for cache optimization. Standardize and front-load them to maximize cache hit rates.

Step 5: Move all non-real-time processing to the Batch API for the automatic 50% cost reduction.

Follow AiZolo for practical tech & startup insights to stay updated as OpenAI’s pricing and model capabilities continue to evolve.

The Bottom Line

The GPT-5.1 Thinking model is genuinely one of the most capable AI systems available today for complex reasoning tasks. Developers and founders who use it well will build products that competitors using simpler models simply cannot match.

To make this achievable without overspending, many actively seek the cheapest way to access GPT 5.1 thinking model API, combining cost-effective plans with smart usage strategies to harness its full reasoning potential.

But “using it well” means being intentional — about reasoning effort levels, prompt caching, batching strategy, and platform selection.

Many developers combine these best practices with the cheapest way to access GPT 5.1 thinking model API, ensuring they can leverage the model’s deep reasoning efficiently without inflating operational costs.

The cheapest way to access the GPT-5.1 thinking model API is never a single trick. It’s a combination of smart routing, cache optimization, batch processing, and using the right platform to compare, test, and manage your usage. For most builders, AiZolo provides the unified foundation that makes all of those strategies easier to implement and sustain.

Priya’s $680-a-week bill became an under-$90-a-month bill. Same model, same quality. Your turn.

Start building smarter with AiZolo today →
