
The Puzzle That Started an Argument

It was a Tuesday afternoon. Priya, a data science student from Hyderabad, dropped a classic logic puzzle into two AI chat windows at once. She wanted to compare Grok 4.1 and Claude 4.5 for logic puzzles to see which model solved it faster and more accurately.

Watching the responses side by side, she analyzed the reasoning steps, noting where each AI excelled and where one stumbled. This experiment gave her clear insights into the strengths and weaknesses of both models for solving complex logic problems.

“A bat and a ball cost $1.10 together. The bat costs $1 more than the ball. How much does the ball cost?”

The intuitive answer, the one that fires instantly in most human brains, is $0.10. It’s wrong: a $0.10 ball would make the bat $1.10 and the total $1.20. The correct answer is $0.05. Counterintuitive problems like this are a useful way to compare Grok 4.1 and Claude 4.5 for logic puzzles, because they show whether a model resists the obvious but wrong answer.
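The algebra is short enough to check directly. Here is a minimal sketch (the function name `solve_bat_and_ball` is ours, purely for illustration): if the ball costs b, the bat costs b + 1.00, so 2b + 1.00 = 1.10 and b = 0.05. Exact fractions are used to avoid floating-point rounding.

```python
from fractions import Fraction

def solve_bat_and_ball(total, difference):
    """Return (ball, bat) prices given their sum and the bat's premium.

    ball + bat = total and bat = ball + difference
    => 2 * ball + difference = total
    """
    ball = (total - difference) / 2
    bat = ball + difference
    return ball, bat

ball, bat = solve_bat_and_ball(Fraction("1.10"), Fraction("1.00"))
print(float(ball), float(bat))  # 0.05 1.05
```

Plugging in the intuitive $0.10 instead gives a $1.10 bat and a $1.20 total, which is how you can tell it fails.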

By examining their step-by-step reasoning, you can see which AI better mirrors human logic, catches common mistakes, and explains answers clearly.

Grok 4.1 answered immediately, explained the common cognitive trap clearly, and got it right.

Claude 4.5 also got it right, but went further: it explained why the human brain defaults to the wrong answer, walked through the algebra step by step, and noted that the puzzle comes from the Cognitive Reflection Test, which measures how readily people override an intuitive but incorrect answer.

Priya stared at both answers and thought: which one is actually better at logic?

That question — how to compare Grok 4.1 and Claude 4.5 for logic puzzles — is exactly what this post digs into. If you’re a developer, student, founder, or anyone who relies on AI for complex thinking, this breakdown is for you.

We’ll explore their accuracy, reasoning style, and step-by-step problem-solving abilities, so you can see which model performs better under different types of logic challenges. By the end, you’ll know which AI to trust for tricky puzzles and why.

Why Logic Puzzle Performance Actually Matters

Logic is the backbone of everything from debugging code to making product decisions. When you prompt an AI to help you architect a system, debug a recursive function, or analyze a business trade-off, it’s not really a creative task — it’s a logic task wearing a creative costume.

Comparing Grok 4.1 and Claude 4.5 on logic puzzles shows how each model handles structured, multi-step reasoning, which helps you choose the right AI for different analytical challenges.

That’s why the logic puzzle benchmark isn’t just a party trick. It reveals how a model handles:

- Multi-step reasoning (can it hold multiple premises in mind at once?)

- Error detection (does it catch its own flawed assumptions?)

- Transparency (does it show its work so you can verify it?)

- Reliability under pressure (does it stay accurate on harder variants of the same puzzle?)
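The last point, staying accurate on harder variants of the same puzzle, can be checked mechanically. The sketch below is one hypothetical way to do it: `make_variant` and `score` are illustrative names, and the model answers are assumed to be collected by hand from each chat window, since no model API is called here.

```python
def make_variant(total_cents, premium_cents):
    """Build one bat-and-ball style prompt plus its ground-truth answer in cents."""
    if total_cents <= premium_cents or (total_cents - premium_cents) % 2:
        raise ValueError("choose values that give a positive whole-cent answer")
    prompt = (
        f"Two items cost {total_cents} cents together. "
        f"One costs {premium_cents} cents more than the other. "
        "How many cents does the cheaper item cost?"
    )
    return prompt, (total_cents - premium_cents) // 2

def score(answers, variants):
    """Fraction of variants answered correctly; answers are ints in cents."""
    correct = sum(a == truth for a, (_, truth) in zip(answers, variants))
    return correct / len(variants)

PARAMS = [(110, 100), (250, 200), (1300, 1000)]
variants = [make_variant(t, p) for t, p in PARAMS]

# Stand-ins for answers pasted in from a model:
intuitive_answers = [t - p for t, p in PARAMS]       # the tempting slip
careful_answers = [(t - p) // 2 for t, p in PARAMS]  # the algebraic answer

print(score(intuitive_answers, variants))  # 0.0
print(score(careful_answers, variants))    # 1.0
```

Changing the numbers while keeping the structure is a simple way to tell reasoning apart from a memorized answer to the famous version.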

Most blog posts that compare Grok 4.1 and Claude 4.5 for logic puzzles look at headline benchmarks. This one goes deeper — into real behavior, practical use cases, and what each model’s approach actually feels like to work with. We’ll analyze how each AI handles step-by-step reasoning, edge cases, and counterintuitive problems, highlighting their strengths and weaknesses.

By seeing them in action on real logic puzzles, you get a clearer picture of which model suits different problem-solving styles.

Who Are These Models, Really?

Before we run through the comparisons, it’s worth understanding each model’s design philosophy, because that philosophy explains a lot of the results.

To compare Grok 4.1 and Claude 4.5 for logic puzzles, we need to look beyond the answers they give to how they approach reasoning, structure their output, handle ambiguity, and break complex problems into steps. This context helps explain why one model might excel at certain puzzle types while the other does better elsewhere.

Grok 4.1: Reasoning with Reach

xAI’s Grok 4.1 was built with an emphasis on breadth. It uses a hybrid attention architecture with specialized processing heads for mathematics, science, logic, and code. It integrates real-time web browsing — which means when a puzzle has context that exists “in the world,” Grok can go find it. It also brings a distinctly unfiltered, high-energy personality to its answers: direct, fast, and confident.

On major benchmarks, Grok 4.1 scores around 94% on AIME (the American Invitational Mathematics Examination) and achieves a 4.22% hallucination rate — a significant improvement over earlier versions.

Its 2-million-token context window is among the largest offered by frontier models, and it sits near the top of the LMArena leaderboard, just behind Google’s Gemini 3.

For logic puzzles, a larger context window can help a model track details and dependencies across a long, multi-step problem, which affects the accuracy and consistency of its solutions.

Claude 4.5 (Sonnet): Reasoning with Transparency

Anthropic’s Claude 4.5 Sonnet was built for reliability and explainability. It prioritizes making its reasoning visible — showing the logical chain so you can audit it, not just receive an answer.

For logic puzzles, this transparency is where Claude excels: its step-by-step reasoning makes complex solutions easier to follow and errors easier to spot.

It achieves a 77.2% score on SWE-bench Verified (real-world code debugging), excels at long-context tasks with its 200K-token window, and maintains a 98.8% harmless response rate.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, these metrics are useful context rather than direct evidence: what matters in puzzle-solving is reasoning over multiple steps, staying consistent, and avoiding errors, and each model’s known strengths help predict how it will perform in complex scenarios.

Claude doesn’t just solve logic puzzles — it tutors you through them. Its responses are built for situations where being wrong would be expensive, and where understanding the reasoning matters as much as the final answer.

To compare Grok 4.1 and Claude 4.5 for logic puzzles, it’s important to evaluate not only the correctness of the solutions but also how clearly each model explains its reasoning and guides users through complex problem-solving steps. This helps highlight the differences in approach and usability between the two AIs.

Head-to-Head: How Grok 4.1 and Claude 4.5 Handle Logic Puzzles

Classic Deductive Reasoning

On standard deductive puzzles — the kind that follow an “if A, then B” structure — both models perform well. But the differences emerge in how they present solutions.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, you notice that Claude prioritizes step-by-step derivation while Grok favors brevity and a fast answer. Seeing the difference helps you choose the model that fits your style of thinking and the kind of logic challenges you face.

Grok 4.1 tends to fire out the answer first, then back into the explanation. It’s like a math student who knows the answer intuitively and writes the proof afterward. The result is often fast and correct, but sometimes the explanation feels stitched together rather than genuinely derived.

This answer-first approach contrasts with Claude’s step-by-step reasoning, and the contrast shows which model suits speed and which suits detailed, audit-friendly explanations.

Claude 4.5 builds the proof forward. It states assumptions, derives intermediate steps, and arrives at the conclusion in a way that feels genuinely earned. For anyone who needs to understand the logic (students, educators, developers writing logic-heavy code), this approach has a meaningful advantage.

Edge: Claude 4.5 for transparent, teachable reasoning.

Multi-Step Logic Chains

When puzzles require holding multiple conditions in mind simultaneously — like “Five friends each own a different pet, live in different houses, and prefer different drinks” type problems — both models show their depth.

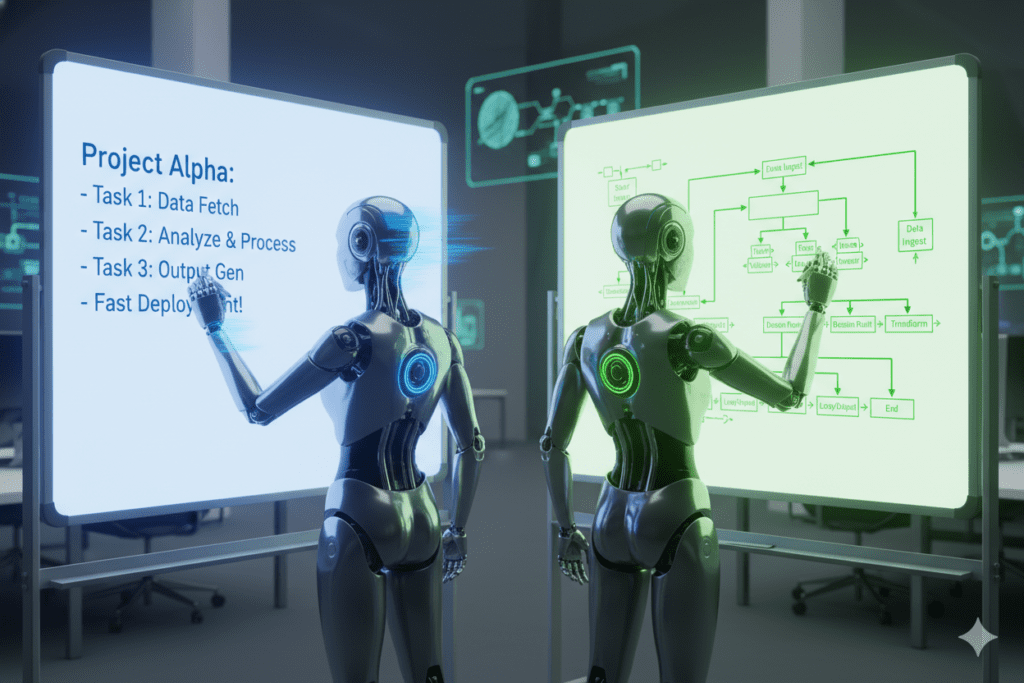

Grok 4.1 tends to systematically eliminate possibilities and document its work in a structured, table-like format. The visual organization actually makes it easy to follow and verify.

This methodical, tabular style contrasts with Claude’s more narrative reasoning, and which one works better depends on the type of puzzle and on your own preferences.

Claude 4.5 narrates the elimination process in prose, which is more readable but can become harder to cross-check on very complex puzzles. When you compare Grok 4.1 and Claude 4.5 for logic puzzles, this difference in presentation style becomes clear: Grok’s structured tables make verification straightforward, while Claude’s narrative explanations are easier to digest but take extra effort to audit step by step. Choosing between them depends on whether you value ease of verification or readability.

Edge: Grok 4.1 for visual structure on multi-variable puzzles.
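If you want ground truth to check either model’s elimination against, a small solver helps. The sketch below uses a made-up three-house puzzle (the names, pets, and four clues are our own invention, not from either model’s output) and plain brute-force enumeration rather than the step-by-step elimination the models narrate.

```python
from itertools import permutations

NAMES = ("Ana", "Ben", "Cy")
PETS = ("cat", "dog", "fish")

def satisfies_clues(names, pets):
    """names[i] and pets[i] describe house i, counted left to right from 0."""
    return (
        names[1] == "Ben"                               # Ben lives in the middle house
        and pets.index("dog") + 1 == pets.index("cat")  # dog is immediately left of cat
        and pets[names.index("Ana")] == "fish"          # Ana owns the fish
        and names[0] != "Cy"                            # Cy is not in the first house
    )

# Try every arrangement of people and pets and keep the consistent ones.
solutions = [
    (names, pets)
    for names in permutations(NAMES)
    for pets in permutations(PETS)
    if satisfies_clues(names, pets)
]

for names, pets in solutions:
    print(dict(zip(names, pets)))  # {'Ana': 'fish', 'Ben': 'dog', 'Cy': 'cat'}
```

Enumeration is fine for 36 arrangements, but a full five-house, five-attribute puzzle has about 25 billion, so a real solver would prune with the clues as it goes, which is much closer to the elimination tables described above.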

Catching the Trick

Some logic puzzles are designed to exploit cognitive shortcuts — like the bat-and-ball problem above. The ability to catch and name the trap matters a lot for educational contexts.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, it’s useful to see which model better identifies these traps, explains why they mislead, and guides you toward the correct reasoning. That comparison brings out differences in precision, clarity, and teaching effectiveness.

In independent tests, both models correctly flag the cognitive illusion. But Claude 4.5 consistently goes deeper, naming the psychological mechanism (often the “System 1 vs System 2” thinking framework) and explaining why the wrong answer feels so right. This kind of meta-reasoning is something Grok sometimes skips in favor of speed.

Edge: Claude 4.5 for meta-cognitive depth.

Real-Time and Contextual Puzzles

Here’s where Grok 4.1 has a structural advantage. Because it has live web access baked into its reasoning, when a puzzle references current real-world data — market logic, recent events, or social context — Grok can fetch what it needs and incorporate it seamlessly.

Claude 4.5 doesn’t have this native real-time ability. For puzzles that require current knowledge, you’d need to supply that context manually. When you compare Grok 4.1 and Claude 4.5 for logic puzzles, this limitation becomes important, as Grok may handle up-to-date information more effectively. Understanding how each model deals with context and external data helps determine which is better suited for dynamic or evolving logic challenges.

Edge: Grok 4.1 for contextually grounded, real-world reasoning.

Where Each Model Stumbles

No honest comparison of Grok 4.1 and Claude 4.5 for logic puzzles skips the failure modes.

Grok 4.1 has been noted in independent benchmarks to occasionally fumble simple logic questions — the kind where overconfidence or an “unfiltered” personality leads to a confident wrong answer.

The recommendation from reviewers: always verify Grok’s technical answers, especially on edge cases in formal logic. Both models are strong, but checking Grok’s output matters most on tricky or unconventional problems, where a confident wrong answer is hardest to spot.

Claude 4.5 can sometimes over-engineer its responses. On a puzzle where a two-sentence answer would suffice, you might get six paragraphs. For rapid-fire use cases or time-sensitive workflows, this verbosity can slow you down.

Both models are excellent. But knowing their weaknesses is what separates a power user from someone who just got surprised by a wrong answer at the worst possible time. To compare Grok 4.1 and Claude 4.5 for logic puzzles, understanding where each model excels and where it struggles allows you to leverage their strengths effectively and avoid costly mistakes in complex problem-solving.

Real-World Use Cases: Who Should Use Which?

For Students and Educators

If you’re studying formal logic, philosophy, or mathematics, Claude 4.5’s step-by-step, teachable reasoning style is your best friend. It doesn’t just solve the puzzle — it explains the underlying principle, which is exactly what learning requires. Use it to check your own proofs, understand where your reasoning broke down, or generate practice problems with explanations.

For Developers and Engineers

When you’re debugging a system, the logic puzzle you’re facing is usually: “Why does this function behave differently from how I expect it to?” Grok 4.1’s speed and structured output make it excellent for rapid iteration.

For debugging, the useful comparison is how each model approaches the problem, tracks its reasoning steps, and presents the solution.

Claude 4.5’s transparency and completeness make it better when you need to understand a complex codebase or explain your reasoning to a team.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, this difference becomes evident: Claude excels at narrating thought processes clearly, making it ideal for collaborative problem-solving or teaching, while Grok’s structured approach is faster for step-by-step elimination and verification.

Many experienced engineers use both: Grok for quick logic checks, Claude for deep architectural reasoning.

For Founders and Product Builders

Decision-making under uncertainty is a form of applied logic. When comparing go-to-market options, prioritizing features, or stress-testing a business model, you want an AI that shows its reasoning — because you need to challenge it, not just accept it. Claude 4.5 wins here for its auditability.

For Marketers and Analysts

If your logic problems are rooted in real-world data — “What does this trend mean? What’s the most likely customer behavior?” — Grok 4.1’s live web access and strong analytical structure give it an edge.

Grok’s table-like output also makes it easy to drop reasoning directly into presentations or reports.

In short, Grok excels at data-driven reasoning and timely insights, while Claude focuses on clarity and step-by-step explanation, so each model suits a different type of logic challenge.

For SaaS Builders and Freelancers

If you’re building an AI-powered product and need reliable reasoning as part of a workflow, Claude 4.5’s consistency, safety ratings, and transparent reasoning chains make it the safer choice for production environments. Grok 4.1’s speed and low cost make it a compelling option for high-volume tasks where a small error rate is acceptable.

The Smart Move: Don’t Choose — Compare Both

Here’s the insight that most comparison posts miss: the question isn’t which model wins at logic puzzles. The better question is: which model is right for this specific puzzle, right now?

To compare Grok 4.1 and Claude 4.5 for logic puzzles, you need to consider the type of puzzle, the context, and the reasoning style you prefer, rather than just looking at overall scores or benchmarks. This approach ensures you pick the AI that best fits your immediate problem-solving needs.

And the most practical answer is: you should be able to ask both and compare their reasoning side by side.

That’s exactly what Aizolo makes possible. Instead of paying $20–$30 per month for each AI separately, Aizolo gives you access to Grok, Claude, ChatGPT, Gemini, and more in a single unified interface for just $9.90/month — with side-by-side comparison built in.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles in real time on the same platform, you stop guessing and start seeing the difference directly. You learn which model fits your thinking style.

And over time, by comparing Grok 4.1 and Claude 4.5 for logic puzzles across different problem types, you develop an intuition for which one to reach for — and when, making your logic-solving far more efficient and accurate.

Explore more AI model insights and comparisons on Aizolo’s blog.

Benchmark Summary: Grok 4.1 vs Claude 4.5 for Logic

| Category | Grok 4.1 | Claude 4.5 |
|---|---|---|
| AIME Math Score | ~94% | ~92.8% |
| Hallucination Rate | ~4.22% | Not stated here (98.8% harmless response rate, a separate safety metric) |
| Reasoning Transparency | Moderate | High |
| Real-Time Context | Yes (native) | No (manual input) |
| Context Window | 2M tokens | 200K tokens |
| Output Style | Fast, structured | Detailed, narrative |
| Best For Logic | Multi-variable, real-world | Step-by-step, educational |

How to Test This Yourself Right Now

You don’t have to take anyone’s word for it — including ours. Here’s a practical logic test you can run yourself when you compare Grok 4.1 and Claude 4.5 for logic puzzles.

By testing both models on the same problem, you can observe their reasoning styles, accuracy, and step-by-step explanations, helping you understand which AI is better suited for different types of logic challenges.

Test 1 (Classic): “There are 5 houses in a row. Each house is a different color. The people in each house are of different nationalities, drink different beverages, smoke different brands of cigarettes, and have different pets. Use the following clues to determine who has the fish.” (Then give the clues of the classic Einstein riddle; the standard version has 15.)

Test 2 (Self-Referential): “This sentence is false. Is the above statement true or false? Explain your reasoning.”

Test 3 (Probabilistic Logic): “A doctor tests a patient for a rare disease that affects 1 in 1,000 people. The test is 99% accurate. The patient tests positive. What is the probability they actually have the disease?”

Run all three in the same session on both models. Look at how each builds its reasoning, where it expresses uncertainty, and whether it catches the counterintuitive answer in Test 3: roughly 9%, not 99%, by Bayes’ Theorem, if “99% accurate” is read as a 99% true-positive rate and a 99% true-negative rate.
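To check the Test 3 figure yourself, here is a minimal sketch of the Bayes calculation. It assumes “99% accurate” means both sensitivity and specificity are 0.99, which the puzzle does not spell out; the function name `posterior` is ours.

```python
def posterior(prevalence, sensitivity, specificity):
    """P(disease | positive test) via Bayes' theorem."""
    true_pos = prevalence * sensitivity               # sick and flagged
    false_pos = (1 - prevalence) * (1 - specificity)  # healthy but flagged
    return true_pos / (true_pos + false_pos)

# Assumption: "99% accurate" = 99% sensitivity and 99% specificity.
p = posterior(prevalence=1 / 1000, sensitivity=0.99, specificity=0.99)
print(f"{p:.1%}")  # 9.0%
```

The intuition: out of 100,000 people, about 99 of the 100 who are sick test positive, but so do about 999 of the 99,900 who are healthy, so only around 1 positive in 11 is a true case.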

Start running side-by-side tests at AiZolo — free to start, no setup required.

Why This Matters More in 2026

The gap between AI models is narrowing at the surface level, but it’s widening in specific, nuanced capabilities. Logic puzzle performance is a microcosm of something bigger: can you trust this model’s reasoning when the stakes are high?

Both Grok 4.1 and Claude 4.5 are genuinely impressive. The right choice depends on your workflow, your tolerance for verbosity vs speed, and whether you need live context or deep transparency.

To compare Grok 4.1 and Claude 4.5 for logic puzzles, consider how each model handles reasoning, step-by-step problem-solving, and explanation clarity, so you can select the one that best fits your logic-solving style and specific challenges.

What’s clear is that choosing one and hoping for the best is a strategy built on incomplete information. The smarter approach is to have both available — and to know when to use each.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, you can identify which model excels at specific problem types and reasoning styles, giving you the flexibility to leverage their strengths rather than relying on a single AI for every puzzle.

Learn from real-world AI experience at Aizolo. Follow Aizolo for practical tech and startup insights as the AI landscape keeps evolving.

Conclusion: Who Wins When You Compare Grok 4.1 and Claude 4.5 for Logic Puzzles?

If you came looking for a clean winner, here’s the answer: it depends on what kind of logic you’re doing.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, Grok wins for speed, real-time grounding, structured multi-variable output, and handling puzzles with live-world context.

To compare Grok 4.1 and Claude 4.5 for logic puzzles effectively, it’s helpful to test both models on the same problems, observing how each handles reasoning steps, data integration, and complexity, so you can pick the best AI for your specific logic-solving needs.

Claude 4.5 wins for step-by-step transparency, educational depth, meta-cognitive reasoning, and reliability in high-stakes environments.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, these strengths become clear, showing that Claude is ideal when understanding the reasoning process and explaining solutions is just as important as arriving at the correct answer.

For most people reading this — developers, students, founders, and builders — the right answer isn’t picking one. It’s having both. And testing them until you develop your own intuition for which brain to borrow.

When you compare Grok 4.1 and Claude 4.5 for logic puzzles, using both models in tandem helps you leverage Grok’s speed and real-time context alongside Claude’s clarity and step-by-step reasoning for the most effective problem-solving.

Start building smarter with Aizolo. Compare Grok 4.1 and Claude 4.5 side by side, alongside GPT, Gemini, and more — all in one platform for less than the cost of a single AI subscription. Try Aizolo free today →
